Shannon entropy quantifies the average information in a message or random variable. It's a fundamental concept in information theory with applications in statistical mechanics and thermodynamics, measuring uncertainty or randomness in systems.

Developed by Claude Shannon in 1948, it provides a mathematical framework for analyzing information transmission and compression. In statistical mechanics, Shannon entropy connects information theory to thermodynamics, helping us understand the behavior of many-particle systems and phase transitions.

Definition of Shannon entropy

- Quantifies the average amount of information contained in a message or random variable

- Fundamental concept in information theory with applications in statistical mechanics and thermodynamics

- Measures the uncertainty or randomness in a system or probability distribution

Information theory context

- Developed by Claude Shannon in 1948 to address problems in communication systems

- Provides a mathematical framework for analyzing information transmission and compression

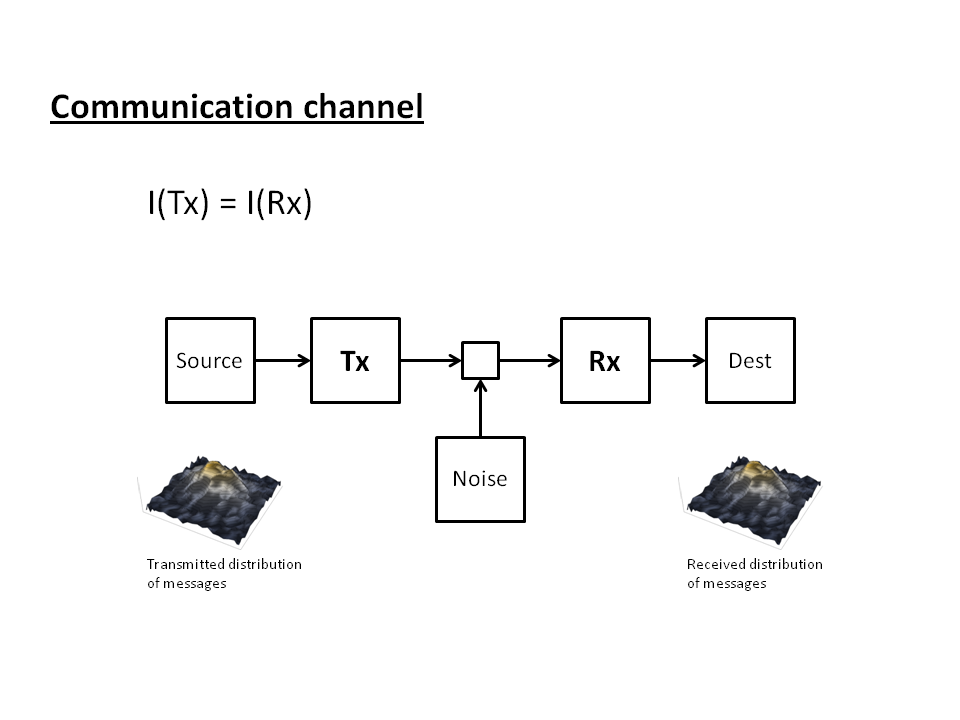

- Relates to the efficiency of data encoding and the capacity of communication channels

- Applies to various fields including computer science, cryptography, and data compression

Probabilistic interpretation

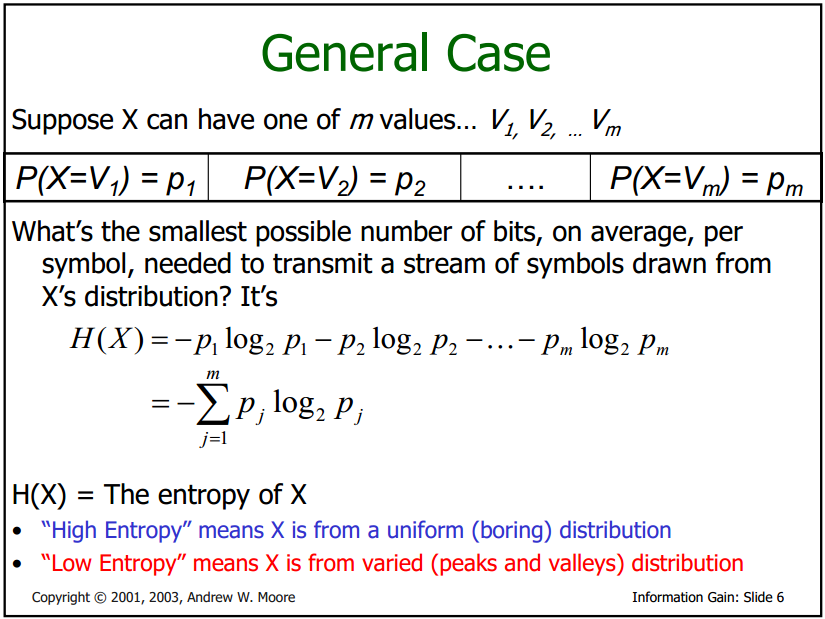

- Represents the expected value of information contained in a message

- Increases with the number of possible outcomes and as those outcomes become closer to equally likely

- Decreases as the probability distribution becomes more concentrated or deterministic

- Quantifies the average number of bits needed to represent a symbol in an optimal encoding scheme

Mathematical formulation

- Defined for a discrete random variable X with probability distribution p(x) as: $H(X) = -\sum_{x} p(x) \log_2 p(x)$

- Uses base-2 logarithm for measuring information in bits, but can use other bases (natural log for nats)

- Can be extended to continuous random variables using differential entropy

- Reaches its maximum value for a uniform probability distribution
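
As a minimal sketch, the standard definition $H(X) = -\sum_x p(x) \log_b p(x)$ can be evaluated directly from a list of probabilities. The function name `shannon_entropy` and its `base` parameter are illustrative choices here, not part of any particular library.

```python
import math

def shannon_entropy(probs, base=2.0):
    """Return H = -sum(p * log_base(p)) for a discrete distribution.

    Terms with p == 0 are skipped, following the convention 0 * log 0 = 0.
    """
    if any(p < 0 for p in probs):
        raise ValueError("probabilities must be non-negative")
    if not math.isclose(sum(probs), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    return -sum(p * math.log(p, base) for p in probs if p > 0)

fair_coin = shannon_entropy([0.5, 0.5])      # uniform over 2 outcomes
biased_coin = shannon_entropy([0.9, 0.1])    # more concentrated distribution
uniform_8 = shannon_entropy([1 / 8] * 8)     # uniform over 8 outcomes
```

The fair coin gives 1 bit and the uniform distribution over 8 outcomes gives 3 bits, the maximum for their respective numbers of outcomes; the biased coin falls below 1 bit.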

Properties of Shannon entropy

- Provides a measure of uncertainty or randomness in a system

- Plays a crucial role in understanding the behavior of statistical mechanical systems

- Connects information theory to thermodynamics and statistical physics

Non-negativity

- Shannon entropy is always non-negative for any discrete probability distribution (differential entropy of a continuous variable can be negative)

- Equals zero only for a deterministic system with a single possible outcome

- Reflects the fundamental principle that information cannot be negative

- Parallels the third law of thermodynamics, in which a system with a unique ground state has zero entropy at absolute zero

Additivity

- Entropy of independent random variables is the sum of their individual entropies

- For the joint distribution of independent X and Y: $H(X, Y) = H(X) + H(Y)$

- Allows decomposition of complex systems into simpler components

- Facilitates analysis of composite systems in statistical mechanics
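
The additivity property can be checked numerically. The sketch below builds the joint distribution of two independent variables as a product of marginals and compares entropies; the distributions `p_x` and `p_y` are arbitrary illustrative values. A perfectly correlated pair is included to show that additivity fails without independence.

```python
import math
from itertools import product

def entropy_bits(probs):
    """Shannon entropy in bits, skipping zero-probability outcomes."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

p_x = [0.5, 0.5]
p_y = [0.7, 0.2, 0.1]

# Independence: p(x, y) = p(x) * p(y)
joint_independent = [px * py for px, py in product(p_x, p_y)]
h_x = entropy_bits(p_x)
h_y = entropy_bits(p_y)
h_joint = entropy_bits(joint_independent)

# Two perfectly correlated fair bits (X always equals Y):
# the joint entropy is 1 bit, less than H(X) + H(Y) = 2 bits.
joint_correlated = [0.5, 0.0, 0.0, 0.5]
h_correlated = entropy_bits(joint_correlated)
```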

Concavity

- Shannon entropy is a concave function of the probability distribution

- Implies that the entropy of a mixture of distributions is at least the weighted average of their individual entropies

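- Mathematically expressed as: $H(\lambda p + (1 - \lambda) q) \ge \lambda H(p) + (1 - \lambda) H(q)$ for distributions p, q and $0 \le \lambda \le 1$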

- Relates to the stability and equilibrium properties of thermodynamic systems
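
A quick numerical check of concavity, $H(\lambda p + (1-\lambda) q) \ge \lambda H(p) + (1-\lambda) H(q)$, is sketched below. The two distributions `p` and `q` and the grid of mixing weights are arbitrary choices for illustration; a check like this demonstrates the inequality on examples but does not prove it.

```python
import math

def entropy_bits(probs):
    """Shannon entropy in bits, skipping zero-probability outcomes."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def mix(p, q, lam):
    """Return the mixture lam * p + (1 - lam) * q, component by component."""
    return [lam * a + (1 - lam) * b for a, b in zip(p, q)]

p = [0.9, 0.1]
q = [0.2, 0.8]
weights = [0.0, 0.25, 0.5, 0.75, 1.0]

# gap = H(mixture) - weighted average of entropies; concavity says gap >= 0
gaps = []
for lam in weights:
    h_mixture = entropy_bits(mix(p, q, lam))
    h_average = lam * entropy_bits(p) + (1 - lam) * entropy_bits(q)
    gaps.append(h_mixture - h_average)
```

The gap vanishes at the endpoints (no mixing) and is strictly positive in between whenever `p` and `q` differ.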

Relationship to thermodynamics

- Establishes a deep connection between information theory and statistical mechanics

- Provides a framework for understanding the statistical basis of thermodynamic laws

- Allows for the interpretation of thermodynamic processes in terms of information

Boltzmann entropy vs Shannon entropy

- Boltzmann entropy: $S = k_B \ln W$, where W is the number of microstates

- Shannon entropy: $H = -\sum_i p_i \log_2 p_i$, where p_i are probabilities of microstates

- Boltzmann's constant k_B acts as a conversion factor between information and physical units: $S = (k_B \ln 2)\, H$ when H is measured in bits

- Both entropies measure the degree of disorder or uncertainty in a system
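
To make the correspondence concrete, the sketch below evaluates the Gibbs form $S = -k_B \sum_i p_i \ln p_i$ for W equally likely microstates and compares it with $k_B \ln W$ and with the Shannon entropy in bits rescaled by $k_B \ln 2$. The value W = 1024 is an arbitrary illustrative choice.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)

def gibbs_entropy(probs):
    """S = -k_B * sum(p_i * ln p_i), in J/K."""
    return -K_B * sum(p * math.log(p) for p in probs if p > 0)

def shannon_bits(probs):
    """H = -sum(p_i * log2 p_i), in bits."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

W = 1024                      # number of microstates (illustrative)
uniform = [1 / W] * W         # all microstates equally probable

s_gibbs = gibbs_entropy(uniform)
s_boltzmann = K_B * math.log(W)
s_from_bits = K_B * math.log(2) * shannon_bits(uniform)  # 10 bits rescaled
```

All three values agree, which is the sense in which the Boltzmann formula is the equal-probability special case of the probabilistic one.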

Second law of thermodynamics

- States that the entropy of an isolated system never decreases over time

- Can be interpreted in terms of information loss or increase in uncertainty

- Relates to the arrow of time and irreversibility of macroscopic processes

- Connects to the maximum entropy principle in statistical mechanics

Applications in statistical mechanics

- Provides a fundamental tool for analyzing and predicting the behavior of many-particle systems

- Allows for the calculation of macroscopic properties from microscopic configurations

- Forms the basis for understanding phase transitions and critical phenomena

Ensemble theory

- Uses probability distributions to describe the possible microstates of a system

- Employs Shannon entropy to quantify the uncertainty in the ensemble

- Allows for the calculation of average properties and fluctuations

- Includes various types of ensembles (microcanonical, canonical, grand canonical)

Microcanonical ensemble

- Describes isolated systems with fixed energy, volume, and number of particles

- All accessible microstates are equally probable

- Entropy is directly related to the number of accessible microstates W: $S = k_B \ln W$

- Rests on the fundamental postulate of statistical mechanics (equal a priori probabilities for all accessible microstates)
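
As an illustration of counting microstates, consider a toy system of N two-state spins with exactly n pointing up, so that $W = \binom{N}{n}$. This model is an assumption chosen for simplicity and is not taken from the text above. The sketch computes $S / k_B = \ln W$ exactly and compares it with the large-N estimate $N\,h(n/N)$, where h is the binary entropy in nats.

```python
import math

def microcanonical_entropy(n_spins, n_up):
    """Return S / k_B = ln W for n_spins two-state spins with n_up pointing up."""
    w = math.comb(n_spins, n_up)   # number of accessible microstates
    return math.log(w)

def binary_entropy_nats(x):
    """h(x) = -x ln x - (1 - x) ln(1 - x), with h(0) = h(1) = 0."""
    if x in (0.0, 1.0):
        return 0.0
    return -x * math.log(x) - (1 - x) * math.log(1 - x)

n_spins, n_up = 1000, 300
exact = microcanonical_entropy(n_spins, n_up)
estimate = n_spins * binary_entropy_nats(n_up / n_spins)  # leading Stirling term

# For fixed N, the entropy is largest when half the spins point up.
best_n_up = max(range(11), key=lambda n: microcanonical_entropy(10, n))
```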

Canonical ensemble

- Describes systems in thermal equilibrium with a heat bath

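- Probability of a microstate depends on its energy: $p_i = e^{-\beta E_i} / Z$, with $\beta = 1/(k_B T)$ and $Z = \sum_i e^{-\beta E_i}$

- Entropy is related to the partition function Z and average energy: $S = k_B \ln Z + \langle E \rangle / T$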

- Allows for the calculation of thermodynamic properties like free energy and specific heat
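
The canonical relations can be verified on a small example. The sketch below computes Boltzmann probabilities $p_i = e^{-\beta E_i}/Z$ for a hypothetical three-level system, then checks that the Gibbs form $-k_B \sum_i p_i \ln p_i$ agrees with $k_B \ln Z + \langle E \rangle / T$. Units with $k_B = 1$ and the energy levels `[0, 1, 2]` are assumptions made for illustration.

```python
import math

def canonical_ensemble(energies, temperature, k_b=1.0):
    """Return (probs, ln Z, <E>, S_gibbs, S_thermo) for a discrete spectrum."""
    beta = 1.0 / (k_b * temperature)
    e_min = min(energies)  # shift energies so the exponentials cannot overflow
    weights = [math.exp(-beta * (e - e_min)) for e in energies]
    z_shifted = sum(weights)
    probs = [w / z_shifted for w in weights]
    log_z = math.log(z_shifted) - beta * e_min   # undo the shift
    mean_energy = sum(p * e for p, e in zip(probs, energies))
    s_gibbs = -k_b * sum(p * math.log(p) for p in probs if p > 0)
    s_thermo = k_b * log_z + mean_energy / temperature
    return probs, log_z, mean_energy, s_gibbs, s_thermo

temperature = 1.5
probs, log_z, mean_energy, s_gibbs, s_thermo = canonical_ensemble([0.0, 1.0, 2.0], temperature)

# Helmholtz free energy two ways: F = -k_B T ln Z and F = <E> - T S
free_energy_from_z = -temperature * log_z
free_energy_from_s = mean_energy - temperature * s_gibbs
```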

Information content and uncertainty

- Provides a quantitative measure of the information gained from an observation

- Relates the concept of entropy to the predictability and compressibility of data

- Forms the basis for various data compression and coding techniques

Surprise value

- Quantifies the information content of a single event: $I(x) = -\log_2 p(x)$

- Decreases as the probability of the event increases, so rarer events are more surprising

- Measured in bits for base-2 logarithm, can use other units (nats, hartleys)

- Used in machine learning for feature selection and model evaluation
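
A minimal sketch of the surprise value $I(x) = -\log_b p(x)$ follows. The function name `surprisal` and its `base` parameter are illustrative; base 2 gives bits, base e gives nats, and base 10 gives hartleys.

```python
import math

def surprisal(p, base=2.0):
    """Information content -log_base(p) of an event with probability p."""
    if not 0 < p <= 1:
        raise ValueError("p must lie in (0, 1]")
    return -math.log(p, base)

coin_flip_bits = surprisal(0.5)           # a fair coin landing heads
rare_event_bits = surprisal(1 / 1024)     # a 1-in-1024 event
coin_flip_nats = surprisal(0.5, math.e)   # same coin flip, measured in nats

# Surprisal adds for independent events: I(p * q) = I(p) + I(q)
joint_bits = surprisal(0.2 * 0.3)
sum_bits = surprisal(0.2) + surprisal(0.3)
```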

Average information content

- Equivalent to the Shannon entropy of the probability distribution

- Represents the expected value of the surprise over all possible outcomes

- Can be interpreted as the average number of yes/no questions needed to determine the outcome under an optimal questioning strategy, to within one question

- Used in data compression to determine the theoretical limit of lossless compression
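
The compression limit can be illustrated with a binary Huffman code, swapped in here as one concrete optimal prefix code; the text above does not name a specific scheme. The sketch computes only codeword lengths and compares the average length L with the entropy H. For any distribution $H \le L < H + 1$, with equality on the left when all probabilities are powers of 1/2.

```python
import heapq
import math

def entropy_bits(probs):
    """Shannon entropy in bits, skipping zero-probability outcomes."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def huffman_code_lengths(probs):
    """Return the codeword length of each symbol in a binary Huffman code."""
    if len(probs) == 1:
        return [1]
    # Heap entries: (probability, unique tie-breaker, symbols in this subtree)
    heap = [(p, i, [i]) for i, p in enumerate(probs)]
    heapq.heapify(heap)
    lengths = [0] * len(probs)
    counter = len(probs)
    while len(heap) > 1:
        p1, _, symbols1 = heapq.heappop(heap)
        p2, _, symbols2 = heapq.heappop(heap)
        for symbol in symbols1 + symbols2:
            lengths[symbol] += 1   # each merge adds one bit to these codewords
        heapq.heappush(heap, (p1 + p2, counter, symbols1 + symbols2))
        counter += 1
    return lengths

def average_length(probs):
    return sum(p * n for p, n in zip(probs, huffman_code_lengths(probs)))

dyadic = [0.5, 0.25, 0.125, 0.125]    # powers of 1/2: average length equals H
general = [0.4, 0.3, 0.2, 0.1]        # average length exceeds H by less than 1 bit
```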

Maximum entropy principle

- Provides a method for constructing probability distributions based on limited information

- Widely used in statistical inference, machine learning, and statistical mechanics

- Leads to the most unbiased probability distribution consistent with given constraints

Jaynes' formulation

- Proposed by Edwin Jaynes as a general method of statistical inference

- States that the least biased probability distribution is the one that maximizes the Shannon entropy subject to constraints representing known information about the system

- Provides a link between information theory and Bayesian probability theory

Constraints and prior information

- Incorporate known information about the system as constraints on the probability distribution

- Can include moments of the distribution (mean, variance) or other expectation values

- Prior information can be included as a reference distribution in relative entropy minimization

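- Leads to well-known distributions for different constraints: uniform (bounded support, no further constraints), exponential (fixed mean on the non-negative reals), and Gaussian (fixed mean and variance)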
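
A classic illustration is a die whose only known property is its average roll. With a mean constraint, the maximum-entropy solution has the exponential form $p_i \propto e^{\lambda x_i}$, and the multiplier $\lambda$ is fixed by the constraint. The sketch below finds $\lambda$ by bisection; the function name, the bracket $[-50, 50]$, and the target mean 4.5 are assumptions chosen for illustration. In physics the same form appears with $\lambda = -\beta$ and $x_i = E_i$.

```python
import math

def maxent_with_mean(values, target_mean, tol=1e-12):
    """Maximum-entropy distribution on `values` subject to a fixed mean.

    Solves for lam in p_i proportional to exp(lam * x_i) by bisection.
    The bracket [-50, 50] is an assumption suited to small integer values.
    """
    if not min(values) < target_mean < max(values):
        raise ValueError("target mean must lie strictly inside the value range")

    def distribution(lam):
        shift = max(lam * x for x in values)   # keep exponentials from overflowing
        weights = [math.exp(lam * x - shift) for x in values]
        total = sum(weights)
        return [w / total for w in weights]

    def mean(lam):
        return sum(p * x for p, x in zip(distribution(lam), values))

    lo, hi = -50.0, 50.0   # mean(lam) increases monotonically with lam
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if mean(mid) < target_mean:
            lo = mid
        else:
            hi = mid
    return distribution((lo + hi) / 2)

faces = [1, 2, 3, 4, 5, 6]
loaded = maxent_with_mean(faces, 4.5)   # skewed toward high faces
fair = maxent_with_mean(faces, 3.5)     # no skew needed: recovers the uniform die
```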

Entropy in quantum mechanics

- Extends the concept of entropy to quantum systems

- Deals with the uncertainty inherent in quantum states and measurements

- Plays a crucial role in quantum information theory and quantum computing

Von Neumann entropy

- Quantum analog of the Shannon entropy for density matrices

- Defined as: $S(\rho) = -\mathrm{Tr}(\rho \ln \rho)$, where ρ is the density matrix

- Reduces to Shannon entropy for classical probability distributions

- Used to quantify entanglement and quantum information in mixed states
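
For a single qubit, $S(\rho) = -\mathrm{Tr}(\rho \ln \rho) = -\sum_k \lambda_k \ln \lambda_k$ can be computed from the two eigenvalues $\lambda_k$ of the 2x2 density matrix, which have a closed form. The sketch below is restricted to that case to stay within the standard library; larger systems would need a numerical eigenvalue routine. The function name and the three example states are illustrative.

```python
import math

def von_neumann_entropy_qubit(rho):
    """S(rho) = -Tr(rho ln rho) in nats for a 2x2 density matrix.

    `rho` is a nested list [[a, b], [conj(b), d]]; entries may be complex.
    """
    a, b, d = rho[0][0].real, rho[0][1], rho[1][1].real
    trace = a + d
    det = a * d - abs(b) ** 2
    root = math.sqrt(max(trace * trace - 4 * det, 0.0))
    eigenvalues = [(trace + root) / 2, (trace - root) / 2]
    # Skip (numerically) zero eigenvalues: 0 * ln 0 is taken as 0
    return -sum(lam * math.log(lam) for lam in eigenvalues if lam > 1e-15)

pure_plus = [[0.5, 0.5], [0.5, 0.5]]          # pure state |+><+|
maximally_mixed = [[0.5, 0.0], [0.0, 0.5]]    # identity / 2
classical = [[0.9, 0.0], [0.0, 0.1]]          # diagonal: a classical distribution

s_pure = von_neumann_entropy_qubit(pure_plus)
s_mixed = von_neumann_entropy_qubit(maximally_mixed)
s_classical = von_neumann_entropy_qubit(classical)
shannon_nats = -(0.9 * math.log(0.9) + 0.1 * math.log(0.1))
```

The pure state has zero entropy, the maximally mixed state has $\ln 2$, and the diagonal matrix reproduces the Shannon entropy of its diagonal entries.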

Quantum vs classical entropy

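- Quantum entropy is zero for pure states, yet a pure entangled state can have subsystems with positive entropy; classically, a part never has more entropy than the whole

- Satisfies subadditivity and strong subadditivity, as Shannon entropy does, but the quantum conditional entropy can be negative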

- Leads to phenomena like entanglement entropy in quantum many-body systems

- Plays a crucial role in understanding black hole thermodynamics and holography
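
Entanglement entropy can be illustrated with two qubits. For a pure state with amplitudes $c_{ij}$ on $|i\rangle_A |j\rangle_B$, the reduced density matrix of qubit A is $\rho_A = C C^\dagger$, and its von Neumann entropy measures the entanglement between A and B. The sketch below uses the closed-form eigenvalues of a 2x2 matrix; the function name and example states are illustrative.

```python
import math

def entanglement_entropy(amplitudes):
    """Entropy (nats) of qubit A for a normalized pure two-qubit state.

    amplitudes[i][j] is the coefficient of |i>_A |j>_B.
    """
    c = [[complex(x) for x in row] for row in amplitudes]
    # Reduced density matrix rho_A = C C^dagger (2x2, Hermitian)
    a = abs(c[0][0]) ** 2 + abs(c[0][1]) ** 2
    d = abs(c[1][0]) ** 2 + abs(c[1][1]) ** 2
    b = c[0][0] * c[1][0].conjugate() + c[0][1] * c[1][1].conjugate()
    det = a * d - abs(b) ** 2
    root = math.sqrt(max((a + d) ** 2 - 4 * det, 0.0))
    eigenvalues = [((a + d) + root) / 2, ((a + d) - root) / 2]
    return -sum(lam * math.log(lam) for lam in eigenvalues if lam > 1e-15)

s = 1 / math.sqrt(2)
bell_state = [[s, 0.0], [0.0, s]]          # (|00> + |11>) / sqrt(2)
product_state = [[1.0, 0.0], [0.0, 0.0]]   # |00>, no entanglement

s_bell = entanglement_entropy(bell_state)
s_product = entanglement_entropy(product_state)
```

The Bell state as a whole is pure, so its total entropy is zero, while each qubit on its own carries entropy $\ln 2$.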

Computational methods

- Provides techniques for estimating and calculating entropy in practical applications

- Essential for analyzing complex systems and large datasets

- Enables the application of entropy concepts in data science and machine learning

Numerical calculation techniques

- Use discretization and binning for continuous probability distributions

- Employ Monte Carlo methods for high-dimensional integrals and sums

- Utilize fast Fourier transform (FFT) for efficient computation of convolutions

- Apply regularization techniques to handle limited data and avoid overfitting
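
As one example of the Monte Carlo approach, the differential entropy $h(X) = -\mathbb{E}[\ln p(X)]$ can be estimated by averaging $-\ln p(x)$ over samples drawn from p. The sketch below does this for a Gaussian, where the exact value $\tfrac{1}{2}\ln(2\pi e \sigma^2)$ is available for comparison. The sample size, seed, and $\sigma = 2$ are arbitrary illustrative choices, and the method assumes the density can be both sampled and evaluated.

```python
import math
import random

def gaussian_log_pdf(x, mu, sigma):
    """Natural log of the normal density N(mu, sigma^2) at x."""
    return -0.5 * math.log(2 * math.pi * sigma ** 2) - (x - mu) ** 2 / (2 * sigma ** 2)

def monte_carlo_entropy(sample, log_pdf, n_samples, rng):
    """Estimate h = -E[ln p(X)] in nats by a sample average."""
    total = 0.0
    for _ in range(n_samples):
        total -= log_pdf(sample(rng))
    return total / n_samples

rng = random.Random(0)   # fixed seed so the run is reproducible
sigma = 2.0
estimate = monte_carlo_entropy(
    sample=lambda r: r.gauss(0.0, sigma),
    log_pdf=lambda x: gaussian_log_pdf(x, 0.0, sigma),
    n_samples=100_000,
    rng=rng,
)
exact = 0.5 * math.log(2 * math.pi * math.e * sigma ** 2)
```

The statistical error of the estimate shrinks as $1/\sqrt{n}$ in the number of samples n, independent of dimension, which is why the approach suits high-dimensional problems.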

Entropy estimation from data

- Includes methods like maximum likelihood estimation and Bayesian inference

- Uses techniques such as k-nearest neighbors and kernel density estimation

- Addresses challenges of bias and variance in finite sample sizes

- Applies to various fields including neuroscience, climate science, and finance
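
The simplest estimator is the plug-in (maximum likelihood) estimate, which inserts observed frequencies into the entropy formula. It is biased downward for small samples. One widely used first-order fix is the Miller-Madow correction, which adds $(K - 1)/(2N)$ nats, where K is the number of distinct observed symbols and N the sample size. It is named here as one option among many, and the sample data below are made up for illustration.

```python
import math
from collections import Counter

def plugin_entropy(samples):
    """Plug-in (maximum likelihood) entropy estimate in nats."""
    counts = Counter(samples)
    n = len(samples)
    return -sum((c / n) * math.log(c / n) for c in counts.values())

def miller_madow_entropy(samples):
    """Plug-in estimate plus the Miller-Madow bias correction (K - 1) / (2N)."""
    k_observed = len(set(samples))
    return plugin_entropy(samples) + (k_observed - 1) / (2 * len(samples))

samples = list("aabbccdd")   # 8 observations of 4 symbols, each seen twice
h_plugin = plugin_entropy(samples)
h_corrected = miller_madow_entropy(samples)
```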

Entropy in complex systems

- Extends entropy concepts to systems with many interacting components

- Provides tools for analyzing emergent behavior and self-organization

- Applies to diverse fields including social networks, ecosystems, and urban systems

Network entropy

- Quantifies the complexity and information content of network structures

- Includes measures like degree distribution entropy and path entropy

- Used to analyze social networks, transportation systems, and biological networks

- Helps in understanding network resilience, efficiency, and evolution
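
One common reading of degree distribution entropy is the Shannon entropy of the empirical distribution of node degrees. The sketch below assumes that definition and represents an undirected graph as a dictionary mapping each node to its list of neighbors; both the representation and the two example graphs are illustrative choices.

```python
import math
from collections import Counter

def degree_distribution_entropy(adjacency):
    """Shannon entropy (bits) of the empirical degree distribution.

    `adjacency` maps each node to a list of its neighbors.
    """
    degrees = [len(neighbors) for neighbors in adjacency.values()]
    counts = Counter(degrees)
    n = len(degrees)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())

# Ring of 4 nodes: every node has degree 2, so the degree entropy is zero.
ring = {0: [1, 3], 1: [0, 2], 2: [1, 3], 3: [2, 0]}

# Star with one hub and 4 leaves: degrees are {4, 1, 1, 1, 1}.
star = {"hub": ["a", "b", "c", "d"], "a": ["hub"], "b": ["hub"], "c": ["hub"], "d": ["hub"]}

h_ring = degree_distribution_entropy(ring)
h_star = degree_distribution_entropy(star)
```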

Biological systems

- Applies entropy concepts to understand genetic diversity and evolution

- Uses entropy to analyze protein folding and molecular dynamics

- Employs maximum entropy models in neuroscience to study neural coding

- Investigates the role of entropy in ecosystem stability and biodiversity

Limitations and extensions

- Addresses shortcomings of Shannon entropy in certain contexts

- Provides generalized entropy measures for specific applications

- Extends entropy concepts to non-extensive and non-equilibrium systems

- Explores connections between different entropy formulations

Tsallis entropy

- Generalizes Shannon entropy for non-extensive systems

- Defined as: $S_q = \frac{1}{q - 1}\left(1 - \sum_i p_i^q\right)$, where q is the entropic index

- Reduces to Shannon entropy in the limit q → 1

- Applied to systems with long-range interactions and power-law distributions
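
A minimal sketch of the Tsallis entropy $S_q = (1 - \sum_i p_i^q)/(q - 1)$, in units where the prefactor constant is 1, is given below. It checks the $q \to 1$ limit numerically and the pseudo-additivity rule for independent systems, $S_q(A, B) = S_q(A) + S_q(B) + (1 - q)\, S_q(A)\, S_q(B)$, which is the sense in which the measure is non-extensive. The example distributions are arbitrary.

```python
import math

def tsallis_entropy(probs, q):
    """Tsallis entropy with unit prefactor; falls back to Shannon (nats) at q = 1."""
    if math.isclose(q, 1.0):
        return -sum(p * math.log(p) for p in probs if p > 0)
    return (1.0 - sum(p ** q for p in probs if p > 0)) / (q - 1.0)

probs = [0.5, 0.3, 0.2]
shannon_nats = tsallis_entropy(probs, 1.0)
near_limit = tsallis_entropy(probs, 1.0 + 1e-6)   # approaches the Shannon value

# Pseudo-additivity for two independent fair coins at q = 2
q = 2.0
coin = [0.5, 0.5]
joint = [0.25, 0.25, 0.25, 0.25]
s_coin = tsallis_entropy(coin, q)
s_joint = tsallis_entropy(joint, q)
s_rule = 2 * s_coin + (1 - q) * s_coin * s_coin
```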

Rényi entropy

- Provides a family of entropy measures parameterized by α

- Defined as: $H_\alpha(X) = \frac{1}{1 - \alpha} \log \sum_i p_i^\alpha$, for $\alpha \ge 0$ and $\alpha \ne 1$

- Includes Shannon entropy (α → 1) and min-entropy (α → ∞) as special cases

- Used in multifractal analysis, quantum information theory, and cryptography
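
The Rényi family $H_\alpha = \frac{1}{1-\alpha} \log \sum_i p_i^\alpha$ and its limiting cases can be sketched in one function. The function below handles $\alpha \to 1$ (Shannon) and $\alpha \to \infty$ (min-entropy) explicitly, and $\alpha = 0$ gives the log of the support size. Base-2 logarithms and the example distribution are illustrative choices.

```python
import math

def renyi_entropy(probs, alpha):
    """Rényi entropy of order alpha, in bits."""
    if alpha < 0:
        raise ValueError("alpha must be non-negative")
    support = [p for p in probs if p > 0]
    if math.isclose(alpha, 1.0):
        return -sum(p * math.log2(p) for p in support)   # Shannon limit
    if math.isinf(alpha):
        return -math.log2(max(support))                  # min-entropy limit
    return math.log2(sum(p ** alpha for p in support)) / (1.0 - alpha)

probs = [0.5, 0.25, 0.125, 0.125]
h_0 = renyi_entropy(probs, 0.0)         # log2 of the number of possible outcomes
h_1 = renyi_entropy(probs, 1.0)         # Shannon entropy
h_2 = renyi_entropy(probs, 2.0)         # collision entropy
h_inf = renyi_entropy(probs, math.inf)  # min-entropy
```

For a fixed distribution the Rényi entropy is non-increasing in α, so these four values are ordered from largest to smallest; for a uniform distribution they all coincide.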