Containerization with Docker revolutionizes data science workflows by encapsulating entire environments. This ensures consistency across development, testing, and production stages, enhancing reproducibility of statistical analyses and facilitating collaboration among researchers.

Docker simplifies building, shipping, and running containerized applications for data science projects. It enables version control of complete analysis environments, streamlines sharing of setups, and ensures uniform execution conditions across different systems, boosting reproducibility and collaboration.

Introduction to containerization

- Containerization changes how software is deployed and managed in reproducible and collaborative statistical data science by packaging applications together with their dependencies

- Enables consistent environments across development, testing, and production stages, enhancing reproducibility of statistical analyses

- Facilitates collaboration among data scientists by ensuring uniform runtime environments regardless of underlying infrastructure

Concept of containers

- Self-contained units packaging application code, runtime, system tools, libraries, and settings

- Provides isolated execution environments sharing the host OS kernel

- Ensures consistency across different computing environments (development, testing, production)

- Lightweight alternative to traditional virtual machines

- Enables rapid deployment and scaling of applications

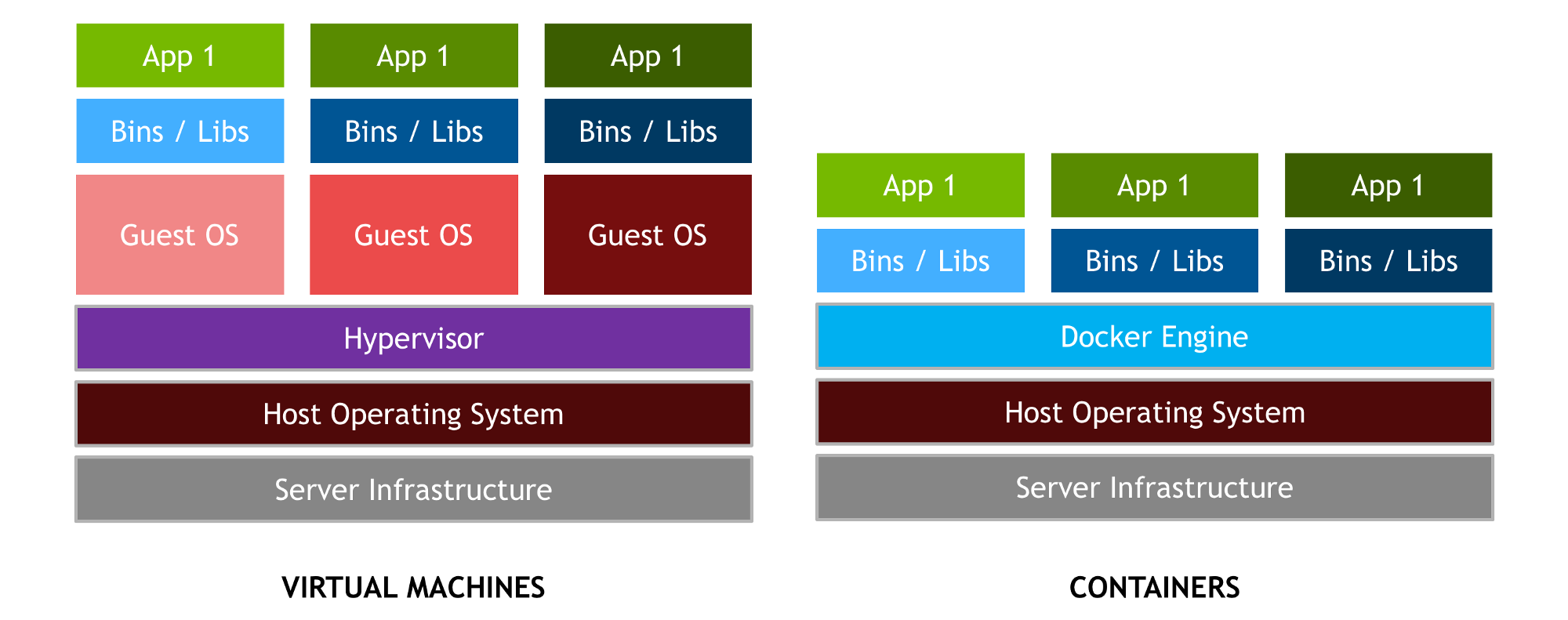

Containers vs virtual machines

- Containers share the host OS kernel, while VMs run on a hypervisor with separate OS instances

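- Containers typically start in seconds or less, whereas VMs often take tens of seconds to minutes to boot

- Container images usually have a smaller footprint, often megabytes compared to gigabytes for VM images, although data science images with large libraries can still reach gigabytes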

- VMs offer stronger isolation but at the cost of higher resource overhead

- Containers provide near-native performance with minimal impact on host system resources

Docker fundamentals

- Docker streamlines the process of building, shipping, and running containerized applications for data science projects

- Enhances reproducibility by encapsulating entire data analysis environments, including code, data, and dependencies

- Facilitates collaboration among researchers by ensuring consistent execution environments across different systems

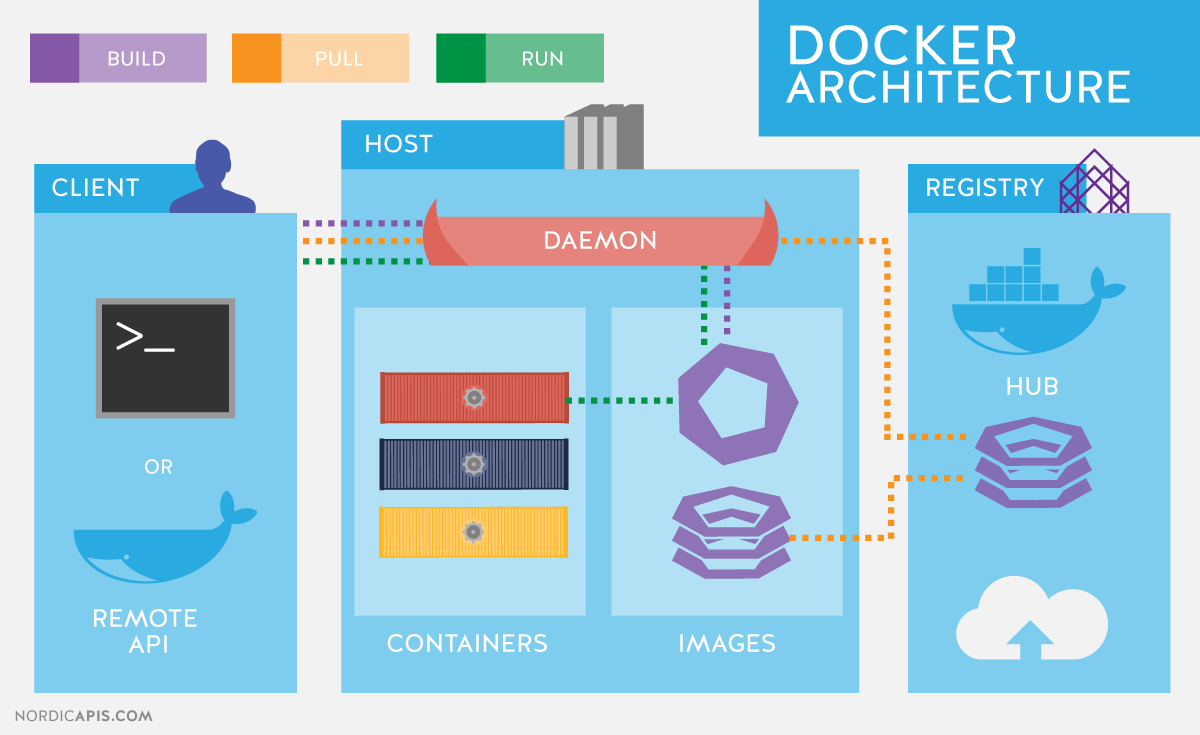

Docker architecture

- Client-server architecture with Docker daemon managing containers

- Docker client communicates with daemon through REST API

- Registries store Docker images (Docker Hub, private registries)

- containerd handles container runtime operations on behalf of the daemon

- runc executes containers adhering to OCI (Open Container Initiative) specifications
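
The client-to-daemon step above can be illustrated with a minimal sketch that queries the Engine API directly, without the Docker CLI. It assumes a Linux host where the daemon listens on the conventional socket path `/var/run/docker.sock`; the class and function names are hypothetical helpers, not part of any Docker library.

```python
import http.client
import json
import socket


class UnixSocketHTTPConnection(http.client.HTTPConnection):
    """HTTP connection that uses a Unix domain socket instead of TCP."""

    def __init__(self, socket_path, timeout=5.0):
        # "localhost" is only a placeholder host; routing uses the socket path.
        super().__init__("localhost", timeout=timeout)
        self.socket_path = socket_path

    def connect(self):
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        try:
            sock.settimeout(self.timeout)
            sock.connect(self.socket_path)
        except OSError:
            sock.close()
            raise
        self.sock = sock


def daemon_version(socket_path="/var/run/docker.sock"):
    """Send GET /version to the daemon and return the decoded JSON body."""
    conn = UnixSocketHTTPConnection(socket_path)
    try:
        conn.request("GET", "/version")
        response = conn.getresponse()
        return json.loads(response.read().decode("utf-8"))
    finally:
        conn.close()
```

On a machine with a running daemon and sufficient permissions, `daemon_version()["Version"]` should return the Engine version string. If the socket is missing or inaccessible, the call raises an `OSError`.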

Docker components

- Docker Engine: core component responsible for building and running containers

- Docker CLI: command-line interface for interacting with Docker

- Docker Desktop: GUI for managing Docker on Windows, macOS, and Linux

- Docker Compose: tool for defining and running multi-container applications

- Docker Swarm: native clustering and orchestration solution for Docker

Docker workflow

- Build images from Dockerfiles or pull pre-built images from registries

- Create and run containers from images

- Manage container lifecycle (start, stop, restart, remove)

- Push and pull images to/from registries for sharing and deployment

- Compose multi-container applications using Docker Compose
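
As a rough illustration of the build, run, and push steps, the sketch below assembles the corresponding CLI invocations as argument lists. The image name `lab/analysis` and the helper names are hypothetical; nothing is executed unless the lists are passed to something like `subprocess.run`.

```python
import shlex


def workflow_commands(image, tag, context="."):
    """Return the build, run, and push commands for one image reference."""
    ref = f"{image}:{tag}"
    return [
        ["docker", "build", "-t", ref, context],  # build from a Dockerfile
        ["docker", "run", "--rm", ref],           # run once, then remove the container
        ["docker", "push", ref],                  # share via a registry
    ]


def as_shell(commands):
    """Render argument lists as copy-pasteable shell lines."""
    return "\n".join(shlex.join(cmd) for cmd in commands)
```

For example, `print(as_shell(workflow_commands("lab/analysis", "1.0")))` prints the three commands in order, ready for review before anything is run.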

Docker images

- Docker images serve as blueprints for creating containers in data science projects

- Enable version control of entire analysis environments, enhancing reproducibility

- Facilitate sharing of complete data science setups among collaborators

Image creation

- Build images using Dockerfiles specifying instructions for image construction

- Layer-based architecture allows efficient storage and transfer of images

- Utilize base images as starting points (Ubuntu, Alpine, Python)

- Incorporate application code, dependencies, and configuration into images

- Leverage multi-stage builds to optimize image size and security

Dockerfile syntax

- `FROM` specifies the base image

- `RUN` executes commands in a new layer

- `COPY` and `ADD` transfer files from the host to the image

- `WORKDIR` sets the working directory for subsequent instructions

- `ENV` sets environment variables

- `EXPOSE` informs Docker about the container's network ports

- `CMD` provides the default command for running the container
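
To show these instructions together, here is a small sketch that keeps a Dockerfile as a Python string and writes it to disk. The file names (`requirements.txt`, `analysis.py`), the port, and the base image tag are illustrative assumptions, and the `instructions` helper is a deliberately naive parser that ignores line continuations.

```python
from pathlib import Path

# Hypothetical analysis image; file names, port, and versions are illustrative.
DOCKERFILE = """\
FROM python:3.12-slim
WORKDIR /app
COPY requirements.txt .
RUN pip install --no-cache-dir -r requirements.txt
COPY . .
ENV PYTHONUNBUFFERED=1
EXPOSE 8888
CMD ["python", "analysis.py"]
"""


def instructions(dockerfile_text):
    """Return the instruction keyword of each non-blank, non-comment line."""
    keywords = []
    for line in dockerfile_text.splitlines():
        stripped = line.strip()
        if stripped and not stripped.startswith("#"):
            keywords.append(stripped.split()[0].upper())
    return keywords


def write_dockerfile(directory):
    """Write the Dockerfile into the given directory and return its path."""
    path = Path(directory) / "Dockerfile"
    path.write_text(DOCKERFILE, encoding="utf-8")
    return path
```

Copying `requirements.txt` before the rest of the code is a common ordering choice: the dependency layer can then be reused from cache when only the analysis code changes.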

Image management

- Tag images for version control and organization

- Push images to registries for storage and distribution

- Pull images from registries to local systems

- Use image layers efficiently to minimize storage and transfer times

- Implement image scanning for security vulnerabilities
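
Tagging and pushing both depend on how an image reference is structured. The sketch below splits a reference into registry, repository, and tag using the usual convention that the first path component is a registry only if it contains a dot or colon or equals `localhost`. It is a simplified illustration that does not handle digests (`@sha256:...`), and the function name is hypothetical.

```python
def split_image_reference(ref):
    """Split 'registry/repo:tag' into (registry, repository, tag).

    Simplified: digests are not handled, and a missing tag defaults
    to 'latest', mirroring Docker's default.
    """
    first, slash, rest = ref.partition("/")
    if slash and ("." in first or ":" in first or first == "localhost"):
        registry, remainder = first, rest
    else:
        registry, remainder = None, ref
    repository, colon, tag = remainder.rpartition(":")
    if not colon:
        repository, tag = remainder, "latest"
    return registry, repository, tag
```

For instance, `split_image_reference("ghcr.io/org/img:1.0")` yields `("ghcr.io", "org/img", "1.0")`, while `split_image_reference("rocker/r-ver:4.3.1")` has no registry component and yields `(None, "rocker/r-ver", "4.3.1")`.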

Docker containers

- Docker containers encapsulate entire data science environments, ensuring consistency across different systems

- Enable isolated execution of statistical analyses, preventing conflicts between projects

- Facilitate easy replication and sharing of data science workflows

Container lifecycle

- Create containers from images using the `docker run` command

- Start, stop, and restart containers as needed

- Pause and unpause container execution

- Attach to running containers for interactive access

- Remove containers when no longer needed

- Implement container health checks for monitoring
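
The lifecycle operations above can be pictured as a small state machine. The sketch below is a deliberately simplified model, not Docker's actual implementation: it omits states such as `restarting` and `dead`, treats `removed` as a pseudo-state, and assumes `rm` is only allowed on containers that are not running (that is, without `--force`).

```python
# (current state, command) -> next state; a simplified, illustrative model.
TRANSITIONS = {
    ("created", "start"): "running",
    ("running", "pause"): "paused",
    ("paused", "unpause"): "running",
    ("running", "stop"): "exited",
    ("running", "restart"): "running",
    ("exited", "start"): "running",
    ("exited", "restart"): "running",
    ("created", "rm"): "removed",
    ("exited", "rm"): "removed",
}


def next_state(state, command):
    """Return the state after applying one lifecycle command."""
    try:
        return TRANSITIONS[(state, command)]
    except KeyError:
        raise ValueError(f"cannot {command!r} a container that is {state}") from None


def replay(commands, state="created"):
    """Apply a sequence of commands starting from the given state."""
    for command in commands:
        state = next_state(state, command)
    return state
```

Replaying `["start", "pause", "unpause", "stop", "rm"]` walks a container through its full life, whereas `next_state("running", "rm")` raises a `ValueError` in this model.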

Container networking

- Bridge network: the default network driver for communication between containers on a single host

- Host network mode for using host's network stack

- Overlay networks for multi-host communication

- User-defined networks for isolating container groups

- Port mapping to expose container services to host

- DNS resolution for container name-based communication (automatic on user-defined networks)
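
A user-defined network with port mapping might be set up roughly as follows. The sketch only builds the command lists; the network name `analysis-net`, the service names, and the image `lab/notebook:1.0` are hypothetical.

```python
def network_setup_commands(network, services):
    """Build commands that create a network and attach services to it.

    `services` maps a container name to a dict with an "image" key and an
    optional "ports" list of (host_port, container_port) pairs.
    """
    commands = [["docker", "network", "create", network]]
    for name, spec in services.items():
        cmd = ["docker", "run", "-d", "--name", name, "--network", network]
        for host_port, container_port in spec.get("ports", []):
            cmd += ["-p", f"{host_port}:{container_port}"]  # publish to the host
        cmd.append(spec["image"])
        commands.append(cmd)
    return commands
```

On a user-defined network such as this one, the notebook container can reach the database by its container name (for example `db`), so only the notebook port needs to be published to the host.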

Container storage

- Volumes provide persistent storage independent of container lifecycle

- Bind mounts link host directories to container filesystems

- tmpfs mounts for temporary in-memory storage

- Data-only containers for sharing data between containers (a legacy pattern, largely superseded by named volumes)

- Storage drivers manage how images and containers are stored

- Implement backup and restore strategies for container data
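
The three mount types differ mainly in the `type=` field of the `--mount` flag. The helpers below are a hypothetical sketch that formats those flags; the volume name and paths used with them are illustrative, and bind-mount sources are expected to be absolute host paths.

```python
def volume_mount(name, target):
    """Named volume: managed by Docker, survives container removal."""
    return ["--mount", f"type=volume,source={name},target={target}"]


def bind_mount(host_dir, target, read_only=False):
    """Bind mount: exposes an (absolute) host directory inside the container."""
    spec = f"type=bind,source={host_dir},target={target}"
    if read_only:
        spec += ",readonly"
    return ["--mount", spec]


def tmpfs_mount(target):
    """tmpfs mount: in-memory scratch space, discarded when the container stops."""
    return ["--mount", f"type=tmpfs,target={target}"]
```

A run command could then be assembled as `["docker", "run", *volume_mount("results", "/out"), *bind_mount("/data/raw", "/data", read_only=True), "lab/analysis:1.0"]`, keeping raw data read-only while results persist in a volume.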

Docker commands

- Docker CLI commands form the foundation for managing containerized data science environments

- Enable efficient control of container lifecycle, image management, and system resources

- Facilitate debugging and troubleshooting of containerized statistical applications

Basic Docker CLI

- `docker version` displays Docker version information

- `docker info` shows system-wide Docker information

- `docker login` authenticates with a Docker registry

- `docker events` streams real-time Docker events

- `docker system prune` removes unused Docker objects

Container manipulation

- `docker run` creates and starts a new container

- `docker exec` executes a command in a running container

- `docker logs` fetches container logs

- `docker inspect` displays detailed container information

- `docker stats` shows live container resource usage statistics
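
Because `docker inspect` prints a JSON array, its output is easy to post-process. The following sketch extracts a few commonly used fields; the function name is hypothetical, and the sample input in the usage note is hand-written for illustration rather than captured from a real container.

```python
import json


def summarize_inspect(inspect_json):
    """Reduce `docker inspect` output to name, image, and status per container."""
    summaries = []
    for entry in json.loads(inspect_json):
        summaries.append(
            {
                # Container names are reported with a leading slash.
                "name": entry.get("Name", "").lstrip("/"),
                "image": entry.get("Config", {}).get("Image"),
                "status": entry.get("State", {}).get("Status"),
            }
        )
    return summaries
```

Given the illustrative input `'[{"Name": "/analysis", "Config": {"Image": "python:3.12-slim"}, "State": {"Status": "running"}}]'`, the function returns a single summary with name `analysis`, image `python:3.12-slim`, and status `running`.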

Image manipulation

- `docker build` builds an image from a Dockerfile

- `docker pull` downloads an image from a registry

- `docker push` uploads an image to a registry

- `docker tag` creates a new tag for an image

- `docker rmi` removes one or more images

Docker Compose

- Docker Compose simplifies management of multi-container data science applications

- Enables definition of complex statistical analysis environments with multiple interdependent services

- Facilitates reproducibility by capturing entire application stack configurations in a single file

Multi-container applications

- Define and run multi-container Docker applications

- Simplify complex setups (databases, web servers, analytics tools)

- Manage service dependencies and startup order

- Scale services independently

- Share data between containers using named volumes

Compose file structure

- YAML format for defining multi-container applications

- `version` specifies the Compose file format version (treated as obsolete and ignored by recent Compose releases)

- `services` defines individual containers and their configurations

- `networks` specifies custom networks for container communication

- `volumes` defines named volumes for persistent data storage

- `configs` and `secrets` manage application configurations and sensitive data
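
A Compose file using most of these top-level keys could look roughly like the string below. The service names, images, and file paths are illustrative assumptions. The sketch omits `version`, which recent Compose releases treat as obsolete, and the `top_level_keys` helper is a naive line-based reader rather than a YAML parser.

```python
# Hypothetical two-service stack; names, images, and paths are illustrative.
COMPOSE_FILE = """\
services:
  db:
    image: postgres:16
    environment:
      POSTGRES_PASSWORD_FILE: /run/secrets/db_password
    secrets:
      - db_password
    volumes:
      - db-data:/var/lib/postgresql/data
    networks:
      - analysis-net
  notebook:
    build: .
    depends_on:
      - db
    ports:
      - "8888:8888"
    networks:
      - analysis-net

networks:
  analysis-net:

volumes:
  db-data:

secrets:
  db_password:
    file: ./db_password.txt
"""


def top_level_keys(compose_text):
    """List unindented 'key:' lines (a naive check, not a YAML parser)."""
    keys = []
    for line in compose_text.splitlines():
        if line and not line[0].isspace() and not line.startswith("#"):
            if line.rstrip().endswith(":"):
                keys.append(line.rstrip()[:-1])
    return keys
```

Keeping the database password in a secret file rather than an inline environment value means the Compose file itself can be committed to version control without exposing credentials.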

Compose commands

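- `docker-compose up` creates and starts containers

- `docker-compose down` stops and removes containers

- `docker-compose ps` lists the project's containers

- `docker-compose logs` views output from containers

- `docker-compose exec` runs commands in running containers

- Compose V2 ships as a Docker CLI plugin and is invoked as `docker compose` (with a space); the subcommands are the same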

Docker in data science

- Docker revolutionizes data science workflows by providing consistent, reproducible environments

- Enhances collaboration among researchers by ensuring uniform execution conditions

- Simplifies deployment of complex statistical models and machine learning pipelines

Data science workflows

- Encapsulate entire data analysis environments (Python, R, Julia)

- Version control for data, code, and dependencies

- Simplify package management and dependency resolution

- Enable easy sharing of complete analysis setups

- Facilitate seamless transitions between development and production environments

Reproducibility with Docker

- Capture exact software versions and system configurations

- Eliminate "works on my machine" problems in collaborative projects

- Ensure consistent results across different computing environments

- Simplify replication of published research findings

- Enable long-term preservation of analysis environments
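
Capturing exact software versions usually starts with pinning package versions before the image is built. As a minimal sketch, the function below lists the packages installed in the current Python environment as `name==version` lines, similar in spirit to `pip freeze`. The function name is hypothetical, and the output depends entirely on the environment it runs in.

```python
from importlib import metadata


def pinned_requirements():
    """Return sorted 'name==version' pins for all installed distributions."""
    pins = set()
    for dist in metadata.distributions():
        name = dist.metadata.get("Name")
        if name:  # skip distributions with broken or missing metadata
            pins.add(f"{name}=={dist.version}")
    return sorted(pins, key=str.lower)
```

Writing `"\n".join(pinned_requirements())` to a `requirements.txt` that the Dockerfile installs from is one way to make the image rebuild with the same package versions later. System libraries and the base image tag still need to be pinned separately.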

Collaboration using containers

- Share complete data science setups with colleagues

- Standardize development environments across teams

- Simplify onboarding of new team members

- Enable easy testing of different software versions

- Facilitate code reviews with consistent execution environments

Docker best practices

- Implementing Docker best practices enhances security, performance, and maintainability of data science projects

- Ensures efficient use of system resources and streamlines development workflows

- Facilitates seamless integration with existing tools and processes in statistical research

Security considerations

- Use official base images from trusted sources

- Regularly update images to patch vulnerabilities

- Implement least privilege principle for container processes

- Scan images for known security issues

- Use secrets management for sensitive data

- Implement network segmentation for container isolation
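
The least-privilege item can be made concrete with a few `docker run` flags. The sketch below builds such a command; the UID/GID of 1000 and the helper name are assumptions, and some workloads will need writable paths or specific capabilities added back.

```python
def hardened_run(image, uid=1000, gid=1000, command=()):
    """Build a `docker run` command with a restrictive security profile."""
    return [
        "docker", "run", "--rm",
        "--user", f"{uid}:{gid}",                    # run as a non-root user
        "--read-only",                               # read-only root filesystem
        "--cap-drop", "ALL",                         # drop all Linux capabilities
        "--security-opt", "no-new-privileges:true",  # block privilege escalation
        image,
        *command,
    ]
```

For example, `hardened_run("lab/analysis:1.0", command=("python", "analysis.py"))` yields a command that runs the analysis as an unprivileged user. Output directories would then typically be provided through explicit volume or tmpfs mounts.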

Performance optimization

- Minimize image size by using appropriate base images

- Leverage multi-stage builds to reduce final image size

- Optimize Dockerfile instructions to reduce layer count

- Use .dockerignore to exclude unnecessary files

- Implement resource limits for containers

- Utilize Docker's built-in caching mechanisms effectively
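
A multi-stage build might be laid out as in the string below: the first stage builds wheels using the full base image, and the final stage installs them onto a slim base. Image tags and file names are illustrative, and `stage_names` is a hypothetical, naive helper for inspecting the stages.

```python
# Hypothetical two-stage build; image tags and file names are illustrative.
MULTI_STAGE_DOCKERFILE = """\
FROM python:3.12 AS builder
WORKDIR /build
COPY requirements.txt .
RUN pip wheel --no-cache-dir --wheel-dir /wheels -r requirements.txt

FROM python:3.12-slim
WORKDIR /app
COPY --from=builder /wheels /wheels
COPY requirements.txt .
RUN pip install --no-cache-dir --no-index --find-links=/wheels -r requirements.txt
COPY . .
CMD ["python", "analysis.py"]
"""


def stage_names(dockerfile_text):
    """Return (base_image, alias) for each FROM line; alias is None if unnamed."""
    stages = []
    for line in dockerfile_text.splitlines():
        parts = line.split()
        if parts and parts[0].upper() == "FROM":
            has_alias = len(parts) >= 4 and parts[2].upper() == "AS"
            stages.append((parts[1], parts[3] if has_alias else None))
    return stages
```

Only the last stage ends up in the final image, so compilers and build caches used in the `builder` stage do not add to its size.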

Version control integration

- Store Dockerfiles and Compose files in version control systems

- Implement CI/CD pipelines for automated image builds

- Tag images with git commit hashes for traceability

- Use branching strategies for managing different environments

- Implement automated testing of Docker images
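
Tagging images with commit hashes can be sketched as follows. The repository name `lab/analysis` is hypothetical, `current_commit` assumes it is called inside a git working tree with `git` on the `PATH`, and the 7-character abbreviation is a convention rather than a requirement.

```python
import subprocess


def current_commit(repo_dir="."):
    """Return the full hash of HEAD for the git repository at repo_dir."""
    result = subprocess.run(
        ["git", "rev-parse", "HEAD"],
        cwd=repo_dir,
        check=True,
        capture_output=True,
        text=True,
    )
    return result.stdout.strip()


def image_ref_for_commit(repository, commit, length=7):
    """Build 'repository:<short-hash>' from a full commit hash."""
    if len(commit) < length:
        raise ValueError("commit hash is shorter than the requested tag length")
    return f"{repository}:{commit[:length]}"
```

In a CI job, `image_ref_for_commit("lab/analysis", current_commit())` gives a tag that can be traced back to the exact source revision that produced the image.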

Docker ecosystem

- Docker ecosystem provides a rich set of tools and services for managing containerized data science applications

- Enhances scalability and maintainability of large-scale statistical computing environments

- Facilitates integration with cloud services and container orchestration platforms

Docker Hub

- Central repository for sharing and accessing Docker images

- Offers both public and private repositories

- Provides automated builds from source code repositories (availability depends on the subscription plan)

- Implements vulnerability scanning for uploaded images

- Offers webhooks for integrating with CI/CD pipelines

Alternative registries

- Amazon Elastic Container Registry (ECR) for AWS integration

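- Google Artifact Registry, the successor to the deprecated Google Container Registry (GCR), for Google Cloud Platform

- Azure Container Registry (ACR) for Microsoft Azure

- Harbor: open-source registry with features such as role-based access control, vulnerability scanning, and replication

- Quay.io: enterprise-grade container registry by Red Hat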

Docker Swarm vs Kubernetes

- Docker Swarm: native clustering solution for Docker

  - Easier setup and management

  - Integrated with Docker Engine

  - Suitable for smaller deployments

- Kubernetes: more powerful container orchestration platform

  - Offers advanced scheduling and auto-scaling

  - Extensive ecosystem of tools and add-ons

  - Better suited for large-scale deployments

Containerization challenges

- Addressing containerization challenges is crucial for successful implementation of Docker in data science projects

- Requires careful consideration of resource allocation, data management, and debugging strategies

- Impacts overall efficiency and reliability of containerized statistical applications

Resource management

- Balancing container resource allocation with host system capabilities

- Implementing CPU and memory limits for containers

- Monitoring resource usage across multiple containers

- Optimizing storage usage for large datasets

- Managing network resources in multi-container applications
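
CPU and memory limits are set per container with the `--cpus` and `--memory` flags. The sketch below validates a memory string and builds the command; the helper names and default limits are assumptions, and the parser accepts only the single-letter suffixes `b`, `k`, `m`, and `g`.

```python
# Docker interprets these suffixes as powers of 1024.
_UNITS = {"b": 1, "k": 1024, "m": 1024**2, "g": 1024**3}


def parse_memory(limit):
    """Convert a limit such as '512m' or '2g' to a number of bytes."""
    text = limit.strip().lower()
    if text and text[-1] in _UNITS:
        number, unit = text[:-1], text[-1]
    else:
        number, unit = text, "b"
    return int(number) * _UNITS[unit]  # raises ValueError on malformed input


def limited_run(image, memory="2g", cpus=1.5):
    """Build a `docker run` command with memory and CPU limits."""
    parse_memory(memory)  # validate early instead of failing inside Docker
    return ["docker", "run", "--rm", "--memory", memory, "--cpus", str(cpus), image]
```

Comparing `parse_memory(...)` across all planned containers against the host's available RAM is a simple way to check that the combined limits are realistic before starting a multi-container analysis.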

Data persistence

- Implementing strategies for persisting data beyond container lifecycle

- Managing data volumes for efficient storage and retrieval

- Ensuring data consistency in distributed container environments

- Implementing backup and recovery procedures for containerized data

- Addressing data security and access control in shared environments

Debugging containerized applications

- Implementing logging strategies for containerized applications

- Utilizing Docker's debugging tools (docker logs, docker exec)

- Implementing health checks for proactive issue detection

- Debugging multi-container applications with Docker Compose

- Leveraging container orchestration platforms for advanced debugging
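
Health checks become useful for debugging once something polls them. The sketch below waits for a container to report `healthy`, reading the status through `docker inspect`. It assumes the image defines a `HEALTHCHECK` (otherwise the format template fails), and the function names, attempt count, and delay are illustrative. The status lookup is injectable so the loop can be exercised without Docker.

```python
import subprocess
import time


def inspect_health(container):
    """Return the health status reported by `docker inspect`."""
    result = subprocess.run(
        ["docker", "inspect", "--format", "{{.State.Health.Status}}", container],
        check=True,
        capture_output=True,
        text=True,
    )
    return result.stdout.strip()


def wait_until_healthy(container, get_status=inspect_health, attempts=10, delay=1.0):
    """Poll until the container is healthy; return False if it never is."""
    for attempt in range(attempts):
        status = get_status(container)
        if status == "healthy":
            return True
        if status == "unhealthy":
            return False  # the check has failed; further waiting will not help
        if attempt < attempts - 1:
            time.sleep(delay)  # status is typically "starting" here
    return False
```

When the function returns `False`, `docker logs` on the same container is usually the next place to look.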

Future of containerization

- The future of containerization promises further advancements in reproducible and collaborative data science

- Emerging technologies will enhance scalability, security, and efficiency of containerized statistical applications

- Evolving architectures will reshape how data scientists develop and deploy their analyses

Emerging technologies

- WebAssembly (Wasm) runtimes as a lightweight, sandboxed complement to containers

- Unikernels as lightweight alternatives to traditional containers

- Container access to specialized hardware (GPUs, TPUs) for machine learning

- Advancements in container security (hardware-based isolation)

- Integration of containers with edge computing paradigms

Serverless containers

- Abstracting away infrastructure management for containerized applications

- Pay-per-use model for running containers

- Auto-scaling based on demand

- Reduced operational overhead for data scientists

- Integration with cloud providers' serverless offerings (AWS Fargate, Azure Container Instances)

Microservices architecture

- Decomposing monolithic applications into smaller, independent services

- Enhancing scalability and maintainability of complex data science pipelines

- Enabling language-agnostic development of statistical applications

- Facilitating continuous deployment of individual components

- Improving fault isolation and system resilience in large-scale data analysis projects