Maximum Likelihood Estimators (MLEs) are powerful tools in statistical inference. They possess key properties that make them reliable and efficient for parameter estimation, including consistency, asymptotic normality, and optimality under certain conditions.

Understanding these properties is crucial for data scientists and statisticians. They provide insights into the behavior of MLEs in various scenarios, helping us make informed decisions about when and how to use them effectively in real-world applications.

Asymptotic Properties

Consistency and Asymptotic Normality

- Consistency describes how MLEs converge to true parameter values as sample size increases

- Strong consistency guarantees almost sure convergence to the true parameter value

- Weak consistency ensures convergence in probability to the true parameter value

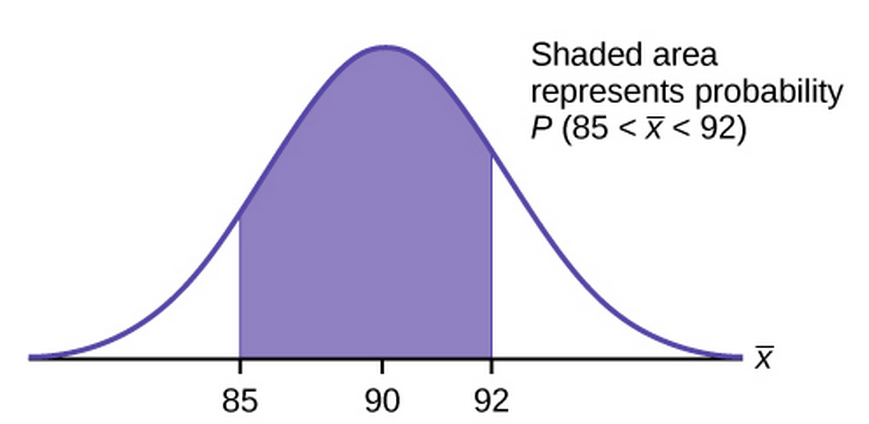

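- Asymptotic normality establishes that, under regularity conditions, the centered and scaled MLE is approximately normally distributed for large sample sizes

- Central Limit Theorem, applied to the score function (the derivative of the log-likelihood), underpins the asymptotic normality property of MLEs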

- Asymptotic normality enables construction of confidence intervals and hypothesis tests for large samples
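
Both properties can be checked by simulation. The sketch below is a minimal illustration, not part of the original notes: it assumes exponential data with a hypothetical true rate of 2.0, uses the MLE `1 / sample mean`, and relies on NumPy. It shows the estimation error shrinking with sample size (consistency) and the standardized error behaving roughly like a standard normal variable (asymptotic normality).

```python
import numpy as np

rng = np.random.default_rng(0)
true_rate = 2.0   # assumed "true" parameter for the simulation
reps = 500        # Monte Carlo replications per sample size


def rate_mle(samples):
    """Row-wise MLE of an exponential rate: 1 / sample mean."""
    return 1.0 / samples.mean(axis=1)


# Consistency: the typical estimation error shrinks as n grows.
mean_abs_error = {}
for n in (10, 100, 1000, 10000):
    samples = rng.exponential(scale=1.0 / true_rate, size=(reps, n))
    mean_abs_error[n] = np.mean(np.abs(rate_mle(samples) - true_rate))
    print(f"n={n:>6}  mean |error| = {mean_abs_error[n]:.4f}")

# Asymptotic normality: for this model the Fisher information per
# observation is 1 / rate**2, so sqrt(n) * (mle - rate) / rate should be
# approximately standard normal for large n.
n, reps_z = 2000, 2000
samples = rng.exponential(scale=1.0 / true_rate, size=(reps_z, n))
z = np.sqrt(n) * (rate_mle(samples) - true_rate) / true_rate
coverage = np.mean(np.abs(z) < 1.96)
print(f"share of |z| < 1.96: {coverage:.3f} (about 0.95 if z is close to normal)")
```

The `coverage` figure is the same quantity that a large-sample 95% confidence interval relies on, which is why asymptotic normality is what justifies such intervals.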

Asymptotic Variance and Regularity Conditions

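- Asymptotic variance is the variance of the limiting distribution of the scaled estimation error, and it describes how quickly the MLE's spread shrinks as sample size grows

- Under the regularity conditions, the inverse of the Fisher information matrix gives the asymptotic covariance matrix of the MLE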

- Regularity conditions ensure the validity of asymptotic properties for MLEs

- Continuity of the likelihood function serves as a key regularity condition

- Differentiability of the log-likelihood function up to the third order constitutes another important regularity condition

- A finite, nonzero expected second derivative of the log-likelihood function (that is, finite, positive Fisher information) rounds out the main regularity conditions
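
To make the role of the regularity conditions concrete, the following sketch contrasts a regular model with a non-regular one. The choice of models and all parameter values are assumptions made for illustration. For Poisson data the MLE is the sample mean and the per-observation Fisher information is `1 / lam`, so `n * Var(MLE)` should be close to `lam`. For Uniform(0, theta) data the support depends on the parameter, the conditions fail, and the MLE (the sample maximum) does not follow the inverse-Fisher-information pattern.

```python
import numpy as np

rng = np.random.default_rng(1)
n, reps = 200, 5000

# Regular case: Poisson(lam). The MLE is the sample mean and the
# inverse Fisher information per observation is lam.
lam = 3.0  # assumed true parameter
poisson_mle = rng.poisson(lam, size=(reps, n)).mean(axis=1)
scaled_var_poisson = n * poisson_mle.var()
print(f"Poisson: n * Var(MLE) = {scaled_var_poisson:.3f}, 1 / I(lam) = {lam:.3f}")

# Non-regular case: Uniform(0, theta). The MLE is the sample maximum.
# Its variance shrinks like 1 / n**2, so n * Var(MLE) heads toward zero
# instead of settling at a positive inverse-information value.
theta = 1.0  # assumed true parameter
uniform_mle = rng.uniform(0.0, theta, size=(reps, n)).max(axis=1)
scaled_var_uniform = n * uniform_mle.var()
print(f"Uniform: n * Var(MLE) = {scaled_var_uniform:.5f}")
```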

Optimality Properties

Efficiency and Sufficiency

- Efficiency measures how close an estimator's variance comes to the theoretical lower bound

- Fully efficient estimators achieve the minimum possible variance among all unbiased estimators

- Relative efficiency compares the performance of two estimators by examining their variance ratio

- A sufficient statistic captures all the information in a sample that is relevant to a parameter

- Sufficient statistics contain all the information needed to estimate a parameter efficiently

- Factorization theorem provides a method to identify sufficient statistics for a given distribution
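
Relative efficiency is easy to estimate by simulation. The sketch below assumes standard normal data (an illustrative choice) and compares two estimators of the center: the sample mean, which is the MLE of the normal mean, and the sample median. The variance ratio estimates the efficiency of the median relative to the mean, which is known to approach 2/π (about 0.637) in large samples.

```python
import numpy as np

rng = np.random.default_rng(2)
n, reps = 101, 20000  # assumed sample size and number of replications

samples = rng.normal(loc=0.0, scale=1.0, size=(reps, n))

# Sampling variance of each estimator across the simulated data sets.
var_mean = samples.mean(axis=1).var()
var_median = np.median(samples, axis=1).var()

# Efficiency of the median relative to the mean: values below 1 mean the
# median needs more data to reach the same precision.
relative_efficiency = var_mean / var_median
print(f"Var(mean)   = {var_mean:.5f}")
print(f"Var(median) = {var_median:.5f}")
print(f"relative efficiency = {relative_efficiency:.3f}")
print(f"large-sample value 2/pi = {2 / np.pi:.3f}")
```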

Fisher Information and Cramer-Rao Lower Bound

- Fisher information quantifies the amount of information a sample provides about an unknown parameter

- Fisher information matrix extends the concept to multiple parameters

- Cramer-Rao lower bound establishes the minimum variance achievable by any unbiased estimator

- CRLB serves as a benchmark for evaluating the efficiency of estimators

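- Relationship between Fisher information and CRLB: for an unbiased estimator $\hat{\theta}$ based on $n$ i.i.d. observations, $\operatorname{Var}(\hat{\theta}) \geq \frac{1}{n I(\theta)}$, where $I(\theta)$ is the Fisher information of a single observation

- An unbiased estimator that achieves the CRLB is the minimum-variance unbiased estimator of the parameter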
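
As a worked illustration of how the bound is computed, consider $n$ i.i.d. Bernoulli observations with success probability $p$. The model and the symbols $p$, $X$, and $\bar{X}$ are chosen here for illustration and do not come from the notes above.

```latex
\begin{aligned}
\log f(x; p) &= x \log p + (1 - x) \log(1 - p), \qquad x \in \{0, 1\} \\
\frac{\partial^2}{\partial p^2} \log f(x; p) &= -\frac{x}{p^2} - \frac{1 - x}{(1 - p)^2} \\
I(p) &= -E\!\left[\frac{\partial^2}{\partial p^2} \log f(X; p)\right]
      = \frac{1}{p} + \frac{1}{1 - p} = \frac{1}{p(1 - p)} \\
\operatorname{Var}(\hat{p}) &\geq \frac{1}{n I(p)} = \frac{p(1 - p)}{n}
      \quad \text{for any unbiased } \hat{p} \\
\operatorname{Var}(\bar{X}) &= \frac{p(1 - p)}{n}
\end{aligned}
```

The sample proportion $\bar{X}$, which is also the MLE of $p$, is unbiased and its variance equals the bound, so in this model the MLE is fully efficient.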

Transformation Properties

Invariance and Bias

- Invariance property: if $\hat{\theta}$ is the MLE of $\theta$, then $g(\hat{\theta})$ is the MLE of $g(\theta)$ for any function $g$

- Invariance preserves the MLE status of the transformed estimator, but not necessarily other properties such as unbiasedness

- Bias measures the difference between an estimator's expected value and the true parameter value

- Unbiased estimators have an expected value equal to the true parameter value

- Bias-variance tradeoff often necessitates choosing between unbiasedness and lower variance

- Asymptotic unbiasedness describes estimators whose bias approaches zero as sample size increases
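
Invariance, bias, and asymptotic unbiasedness can be seen together in one small simulation. The sketch assumes exponential data with a hypothetical true rate of 2.0. The sample mean is the MLE of the mean `1 / rate` and is unbiased. By invariance, its reciprocal is the MLE of the rate, yet that estimator is biased upward in small samples, with a bias that fades as the sample size grows.

```python
import numpy as np

rng = np.random.default_rng(4)
true_rate = 2.0  # assumed true parameter
reps = 20000     # Monte Carlo replications per sample size

bias_of_mean_mle = {}
bias_of_rate_mle = {}
for n in (5, 50, 500):
    samples = rng.exponential(scale=1.0 / true_rate, size=(reps, n))
    mean_mle = samples.mean(axis=1)  # MLE of the mean 1 / rate
    rate_mle = 1.0 / mean_mle        # MLE of the rate, by invariance

    # Bias = average estimate minus the true value of the target.
    bias_of_mean_mle[n] = mean_mle.mean() - 1.0 / true_rate
    bias_of_rate_mle[n] = rate_mle.mean() - true_rate
    print(
        f"n={n:>4}  bias(mean MLE) = {bias_of_mean_mle[n]:+.4f}  "
        f"bias(rate MLE) = {bias_of_rate_mle[n]:+.4f}  "
        f"theory rate/(n-1) = {true_rate / (n - 1):.4f}"
    )
```

The theoretical bias of the rate MLE in this model is `rate / (n - 1)`, which is why the last column shrinks toward zero: the estimator is biased but asymptotically unbiased.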

Variance and Mean Squared Error

- Variance quantifies the spread of an estimator's sampling distribution around its mean

- Lower variance indicates greater precision and reliability of an estimator

- Mean squared error combines both bias and variance to assess overall estimator performance

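- MSE formula: $\operatorname{MSE}(\hat{\theta}) = E\left[(\hat{\theta} - \theta)^2\right] = \operatorname{Var}(\hat{\theta}) + \operatorname{Bias}(\hat{\theta})^2$

- Among unbiased estimators, MSE equals variance, so an efficient estimator has the smallest MSE in that class; a slightly biased estimator can still achieve lower MSE by reducing variance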

- Comparing MSE values helps in selecting the most appropriate estimator for a given problem
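
The decomposition of MSE into variance plus squared bias, and its use for comparing estimators, can be sketched as follows. The example assumes normal data with true variance 1.0 and sample size 10, and compares three estimators of the variance that divide the sum of squared deviations by `n - 1` (unbiased), `n` (the MLE), and `n + 1`. These settings are illustrative assumptions only.

```python
import numpy as np

rng = np.random.default_rng(5)
n, reps, true_var = 10, 200000, 1.0  # assumed simulation settings

samples = rng.normal(0.0, np.sqrt(true_var), size=(reps, n))
centered = samples - samples.mean(axis=1, keepdims=True)
sum_sq = (centered ** 2).sum(axis=1)  # sum of squared deviations per sample

results = {}
for divisor in (n - 1, n, n + 1):
    estimates = sum_sq / divisor
    bias = estimates.mean() - true_var
    variance = estimates.var()
    mse = np.mean((estimates - true_var) ** 2)
    results[divisor] = (bias, variance, mse)
    print(
        f"divisor={divisor:>2}  bias={bias:+.4f}  variance={variance:.4f}  "
        f"MSE={mse:.4f}  variance + bias^2 = {variance + bias ** 2:.4f}"
    )
```

In this setting the unbiased estimator has the largest MSE of the three, which illustrates why comparing MSE values rather than bias alone can change which estimator is preferred.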