Likelihood functions and maximum likelihood estimation (MLE) are core tools in statistics for fitting model parameters to data. Maximizing the likelihood selects the parameter values under which the observed data are most probable, and comparing maximized likelihoods helps judge which models describe the observations best.

MLE applies to settings ranging from simple probability distributions to machine learning algorithms, where it is used to estimate parameters, classify data, and make predictions. It also underlies many more advanced statistical methods, including the tests, bounds, and alternative estimators covered here.

Likelihood Function and MLE

Foundations of Likelihood and MLE

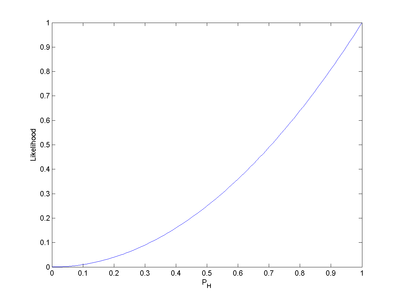

- Likelihood function measures how well a statistical model fits observed data

- Represents probability (or probability density, for continuous data) of observed data, viewed as function of parameter values

- Denoted as L(θ|x) where θ represents parameters and x represents observed data

- Maximum likelihood estimation (MLE) finds parameter values that maximize likelihood function

- MLE selects parameters making observed data most probable

- Log-likelihood transforms likelihood function using natural logarithm

- Log-likelihood simplifies calculations and preserves maximum point

- Denoted as l(θ|x) = ln(L(θ|x))

- Score function calculates gradient of log-likelihood with respect to parameters

- Score function helps identify critical points in likelihood function
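
The definitions above can be made concrete with a small sketch. It assumes a Bernoulli model and a made-up sample of 10 trials; the function names `log_likelihood` and `score` are illustrative, not from any library. The grid search is only there to show that the maximizer of the log-likelihood coincides with the point where the score is zero.

```python
import math

def log_likelihood(p, data):
    """Bernoulli log-likelihood: sum of x*ln(p) + (1-x)*ln(1-p)."""
    return sum(x * math.log(p) + (1 - x) * math.log(1 - p) for x in data)

def score(p, data):
    """Score: derivative of the log-likelihood with respect to p."""
    k, n = sum(data), len(data)
    return k / p - (n - k) / (1 - p)

# Hypothetical sample: 7 successes in 10 trials.
data = [1, 1, 0, 1, 1, 0, 1, 1, 0, 1]

# Evaluate the log-likelihood on a grid of candidate values and keep the best.
grid = [i / 1000 for i in range(1, 1000)]
p_grid = max(grid, key=lambda p: log_likelihood(p, data))

# Closed-form MLE for this model: the sample proportion.
p_hat = sum(data) / len(data)

print(p_grid, p_hat, score(p_hat, data))
```

For this model the score equation k/p - (n-k)/(1-p) = 0 can be solved by hand, which is why the closed form exists; most models need the numerical methods listed later.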

Practical Applications of MLE

- MLE widely used in statistical inference and machine learning

- Applies to various probability distributions (normal, binomial, Poisson)

- Estimates parameters for regression models (linear, logistic)

- Solves classification problems in machine learning algorithms

- Optimizes parameters in neural networks during training process

- Utilized in time series analysis for forecasting models (ARIMA)

- Employed in survival analysis to estimate hazard functions

Computational Aspects of MLE

- Numerical optimization techniques often required to find MLE

- Gradient ascent on log-likelihood (equivalently, gradient descent on negative log-likelihood) uses score function to iteratively approach maximum

- Newton-Raphson method employs both first and second derivatives for faster convergence

- Expectation-Maximization (EM) algorithm handles missing data or latent variables

- High-dimensional parameter spaces may require advanced optimization techniques

- Regularization methods (L1, L2) can be incorporated to prevent overfitting

- Bootstrap resampling estimates confidence intervals for MLE parameters
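
As a rough illustration of the first two iterative methods, the sketch below fits the rate of an exponential distribution, whose log-likelihood is n·ln(λ) − λ·Σx. This model is chosen only because its closed-form MLE (n/Σx) makes the result easy to check; the data, starting value, and step size are assumptions picked for the example.

```python
def score(lam, n, total):
    """First derivative of l(lam) = n*ln(lam) - lam*total."""
    return n / lam - total

def hessian(lam, n):
    """Second derivative of the log-likelihood (negative for lam > 0)."""
    return -n / lam ** 2

def newton_raphson(data, lam=0.5, tol=1e-12, max_iter=50):
    """Newton-Raphson update: lam <- lam - score / hessian."""
    n, total = len(data), sum(data)
    for _ in range(max_iter):
        step = score(lam, n, total) / hessian(lam, n)
        lam -= step
        if abs(step) < tol:
            break
    return lam

def gradient_ascent(data, lam=0.5, rate=0.01, tol=1e-12, max_iter=100000):
    """Gradient ascent: move a small step in the direction of the score."""
    n, total = len(data), sum(data)
    for _ in range(max_iter):
        step = rate * score(lam, n, total)
        lam += step
        if abs(step) < tol:
            break
    return lam

data = [0.2, 0.9, 0.4, 1.3, 0.7, 0.5]  # hypothetical waiting times
closed_form = len(data) / sum(data)
lam_newton = newton_raphson(data)
lam_gradient = gradient_ascent(data)
print(closed_form, lam_newton, lam_gradient)
```

Newton-Raphson typically needs far fewer iterations here because it uses curvature, but it can diverge from a poor starting value, whereas gradient ascent depends on a well-chosen step size.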

MLE Properties

Asymptotic Behavior of MLE

- Consistency ensures MLE converges to true parameter value as sample size increases

- Weak consistency implies convergence in probability

- Strong consistency implies almost sure convergence

- Efficiency measures how close MLE variance is to theoretical lower bound

- Asymptotically efficient estimators achieve Cramér-Rao lower bound as sample size approaches infinity

- Asymptotic normality states MLE approximately follows normal distribution for large samples, centered at true parameter with variance given by inverse Fisher information

- Enables construction of confidence intervals and hypothesis tests for large samples
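
A small simulation can illustrate consistency and the large-sample confidence interval. This sketch assumes an exponential model with a true rate of 2.0 and a fixed random seed; the standard error formula λ̂/√n follows from the Fisher information 1/λ² per observation for this model.

```python
import math
import random

def exponential_mle(sample):
    """MLE of the exponential rate: n divided by the sum of observations."""
    return len(sample) / sum(sample)

rng = random.Random(0)  # fixed seed so the run is reproducible
true_rate = 2.0
errors = {}
intervals = {}

for n in (10, 1000, 100000):
    sample = [rng.expovariate(true_rate) for _ in range(n)]
    estimate = exponential_mle(sample)
    std_error = estimate / math.sqrt(n)  # plug-in asymptotic standard error
    errors[n] = abs(estimate - true_rate)
    # Approximate 95% Wald interval based on asymptotic normality.
    intervals[n] = (estimate - 1.96 * std_error, estimate + 1.96 * std_error)
    print(n, estimate, intervals[n])
```

The interval width shrinks roughly like 1/√n, so each hundredfold increase in sample size narrows it by about a factor of ten.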

Sufficiency and Information

- Sufficient statistic contains all relevant information about parameter in sample

- MLE based on sufficient statistic preserves all parameter information

- Fisher-Neyman factorization theorem identifies sufficient statistics

- Factorizes probability density function into two parts: one depending on parameter and on data only through sufficient statistic, other depending on data but not on parameter

- Unbiased estimator that is function of complete sufficient statistic is unique minimum variance unbiased estimator (Lehmann-Scheffé theorem)

- Ancillary statistics provide no information about parameter of interest

- Conditional inference conditions on ancillary statistics so that precision assessments are more relevant to observed sample
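
As a worked illustration of the factorization theorem, consider an i.i.d. Poisson sample (a model chosen here only for the example; the symbols g, h, and T are the conventional names for the two factors and the statistic). The joint probability mass function splits as

$$
f(x_1,\dots,x_n \mid \lambda)
= \prod_{i=1}^{n} \frac{e^{-\lambda}\lambda^{x_i}}{x_i!}
= \underbrace{e^{-n\lambda}\,\lambda^{T(x)}}_{g(T(x),\,\lambda)}
\cdot
\underbrace{\frac{1}{\prod_{i=1}^{n} x_i!}}_{h(x)},
\qquad T(x) = \sum_{i=1}^{n} x_i .
$$

The first factor involves the data only through T(x), and the second does not involve λ, so T(x) is sufficient. Maximizing the log of the first factor gives

$$
\frac{d}{d\lambda}\bigl(-n\lambda + T(x)\ln\lambda\bigr) = -n + \frac{T(x)}{\lambda} = 0
\quad\Longrightarrow\quad
\hat{\lambda} = \frac{T(x)}{n},
$$

which shows the MLE depending on the sample only through the sufficient statistic.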

Finite Sample Properties

- Bias measures expected difference between estimator and true parameter value

- MLE may be biased for finite samples but asymptotically unbiased

- Consistency does not imply unbiasedness for all sample sizes

- Efficiency in finite samples compares variance of estimator to other unbiased estimators

- Relative efficiency quantifies performance of one estimator against another as ratio of their variances (or mean squared errors)

- Small sample properties of MLE may differ significantly from asymptotic behavior

- Jackknife and bootstrap methods assess finite sample properties of MLE
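
The finite-sample bias of an MLE and its jackknife correction can be sketched with the variance of a normal sample. The MLE divides by n and is biased downward by a factor (n−1)/n; for this particular estimator the jackknife bias correction reproduces the usual unbiased estimator exactly. The data and function names below are illustrative.

```python
def variance_mle(sample):
    """MLE of a normal variance: divides by n, so it is biased downward."""
    n = len(sample)
    mean = sum(sample) / n
    return sum((x - mean) ** 2 for x in sample) / n

def jackknife_bias_corrected(estimator, sample):
    """Jackknife estimate: n*theta_hat - (n-1) * mean of leave-one-out estimates."""
    n = len(sample)
    full = estimator(sample)
    leave_one_out = [estimator(sample[:i] + sample[i + 1:]) for i in range(n)]
    return n * full - (n - 1) * sum(leave_one_out) / n

sample = [2.1, 3.4, 1.9, 5.0, 4.2, 2.8]  # hypothetical measurements
n = len(sample)
mle = variance_mle(sample)
corrected = jackknife_bias_corrected(variance_mle, sample)
unbiased = mle * n / (n - 1)  # the usual divide-by-(n-1) estimator
print(mle, corrected, unbiased)
```

The exact agreement is special to this estimator; in general the jackknife removes only the leading term of the bias.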

MLE Testing and Bounds

Fisher Information and Cramér-Rao Bound

- Fisher information quantifies amount of information sample provides about parameter

- Measures expected curvature of log-likelihood function at true parameter value

- Calculated as negative expected value of second derivative of log-likelihood

- Cramér-Rao lower bound establishes minimum variance for unbiased estimators

- Provides theoretical limit on estimation precision

- Efficient estimators attain Cramér-Rao bound exactly; MLE attains it asymptotically under regularity conditions

- Fisher information matrix generalizes concept to multiple parameters
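
A minimal numeric sketch of these definitions, assuming a Bernoulli model with an arbitrary p = 0.3 and n = 50: the Fisher information is computed directly as the negative expected second derivative of the log-probability, and the resulting Cramér-Rao bound is compared with the variance of the sample proportion.

```python
import math

def second_derivative_log_pmf(x, p):
    """Second derivative in p of ln f(x|p) for one Bernoulli observation."""
    return -x / p ** 2 - (1 - x) / (1 - p) ** 2

def fisher_information(p):
    """Negative expected second derivative, averaging over x in {0, 1}."""
    expected = (p * second_derivative_log_pmf(1, p)
                + (1 - p) * second_derivative_log_pmf(0, p))
    return -expected

p, n = 0.3, 50  # illustrative values
info_per_obs = fisher_information(p)
cramer_rao_bound = 1 / (n * info_per_obs)
var_sample_proportion = p * (1 - p) / n
print(info_per_obs, cramer_rao_bound, var_sample_proportion)
```

Here the sample proportion is unbiased and its variance equals the bound, so it is efficient at every sample size, not just asymptotically.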

Likelihood Ratio Tests and Confidence Intervals

- Likelihood ratio test compares nested models using likelihood functions

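- Test statistic calculated as −2 times log of ratio of maximized likelihoods under null and alternative hypotheses

- Statistic asymptotically follows chi-square distribution under certain regularity conditions, with degrees of freedom equal to number of parameters restricted by null hypothesis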

- Wilks' theorem establishes asymptotic distribution of likelihood ratio test statistic

- Profile likelihood method constructs confidence intervals for individual parameters

- Likelihood-based confidence intervals often more accurate than Wald intervals for small samples

- Deviance statistic measures goodness-of-fit in generalized linear models
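
The likelihood ratio test can be sketched for a simple null hypothesis about a Poisson rate. The counts and the null value λ₀ = 3 are made up for illustration. With one restricted parameter the reference distribution is chi-square with 1 degree of freedom, whose upper tail can be written with the standard library as erfc(√(x/2)); that shortcut applies only to the 1-degree-of-freedom case.

```python
import math

def poisson_log_likelihood(lam, counts):
    """Poisson log-likelihood; lgamma(x + 1) equals ln(x!)."""
    return sum(x * math.log(lam) - lam - math.lgamma(x + 1) for x in counts)

counts = [3, 5, 4, 6, 2, 5, 7, 4]  # hypothetical event counts
lam0 = 3.0                          # rate under the null hypothesis
lam_hat = sum(counts) / len(counts) # unrestricted MLE (sample mean)

# -2 * log of the likelihood ratio (null over alternative).
lr_stat = -2 * (poisson_log_likelihood(lam0, counts)
                - poisson_log_likelihood(lam_hat, counts))

# Upper tail of chi-square with 1 degree of freedom.
p_value = math.erfc(math.sqrt(lr_stat / 2))
print(lam_hat, lr_stat, p_value)
```

The factorial terms cancel in the difference, so the statistic simplifies to 2·[Σx·ln(λ̂/λ₀) − n·(λ̂ − λ₀)].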

Wald and Score Tests

- Wald test uses estimated standard errors of MLE to construct test statistic

- Compares squared difference between MLE and hypothesized value to estimated variance

- Score test based on slope of log-likelihood at null hypothesis value

- Requires only fitting model under null hypothesis, computationally efficient

- Lagrange multiplier test equivalent to score test in constrained optimization context

- Asymptotic equivalence of Wald, likelihood ratio, and score tests under null hypothesis

- Each test may perform differently in finite samples or under model misspecification
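
To show how the Wald and score statistics differ in practice, the sketch below tests a Bernoulli proportion against p₀ = 0.5 using made-up data (62 successes in 100 trials). The only difference between the two statistics here is where the variance is evaluated: at the MLE for Wald, at the null value for the score test.

```python
import math

k, n = 62, 100   # hypothetical successes and trials
p0 = 0.5         # value under the null hypothesis
p_hat = k / n    # MLE

# Wald: squared distance from the null, scaled by variance estimated at the MLE.
wald_stat = (p_hat - p0) ** 2 / (p_hat * (1 - p_hat) / n)

# Score: squared slope of the log-likelihood at p0, divided by the
# Fisher information at p0. Nothing is estimated under the alternative.
score_at_null = (k - n * p0) / (p0 * (1 - p0))
info_at_null = n / (p0 * (1 - p0))
score_stat = score_at_null ** 2 / info_at_null

def chi2_1df_upper_tail(x):
    """P(chi-square with 1 df > x), valid for 1 degree of freedom only."""
    return math.erfc(math.sqrt(x / 2))

print(wald_stat, chi2_1df_upper_tail(wald_stat))
print(score_stat, chi2_1df_upper_tail(score_stat))
```

The two statistics disagree noticeably at this sample size even though they share the same limiting distribution under the null.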

Alternative Estimation Methods

Method of Moments and Generalizations

- Method of moments equates sample moments to theoretical moments of distribution

- Provides consistent estimators but may be less efficient than MLE

- Generalized method of moments (GMM) extends concept to broader class of estimating equations

- GMM particularly useful in econometrics and time series analysis

- Instrumental variables estimation addresses endogeneity issues using method of moments framework

- Empirical likelihood combines flexibility of nonparametric methods with efficiency of likelihood-based inference

- Quasi-likelihood methods generalize likelihood approach to cases where full probability model is not specified
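
The basic method of moments can be sketched for a gamma distribution with shape k and scale θ, whose mean is kθ and variance is kθ². Equating these to the sample mean and variance and solving gives closed-form estimates, whereas the gamma MLE for the shape has no closed form. The sample and the function name are illustrative.

```python
def gamma_method_of_moments(sample):
    """Solve mean = k*theta and variance = k*theta**2 for (k, theta)."""
    n = len(sample)
    mean = sum(sample) / n
    var = sum((x - mean) ** 2 for x in sample) / n  # second central sample moment
    shape = mean ** 2 / var
    scale = var / mean
    return shape, scale

sample = [1.2, 0.8, 2.5, 1.9, 3.1, 0.6, 1.4]  # hypothetical positive data
shape, scale = gamma_method_of_moments(sample)
print(shape, scale)
```

Such estimates are often used as starting values for a numerical MLE search.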

Bayesian Estimation and Penalized Likelihood

- Bayesian estimation incorporates prior knowledge through probability distributions on parameters

- Posterior distribution combines prior information with likelihood of observed data

- Markov Chain Monte Carlo (MCMC) methods simulate from posterior distribution

- Penalized likelihood adds regularization term to log-likelihood function

- L1 regularization (Lasso) promotes sparsity in parameter estimates

- L2 regularization (Ridge) shrinks parameter estimates towards zero

- Elastic net combines L1 and L2 penalties for improved variable selection and prediction
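
The link between Bayesian estimation and penalized likelihood can be sketched with a Beta prior on a Bernoulli success probability. The log-posterior is the log-likelihood plus (a−1)·ln(p) + (b−1)·ln(1−p), which acts as a penalty term, so the posterior mode (MAP) is a penalized MLE. The prior parameters and data below are assumptions chosen for the example.

```python
def posterior_parameters(k, n, a, b):
    """Beta(a, b) prior with k successes in n trials gives Beta(a + k, b + n - k)."""
    return a + k, b + n - k

def posterior_mean(k, n, a, b):
    a_post, b_post = posterior_parameters(k, n, a, b)
    return a_post / (a_post + b_post)

def map_estimate(k, n, a, b):
    """Posterior mode; this formula requires both posterior parameters > 1."""
    a_post, b_post = posterior_parameters(k, n, a, b)
    return (a_post - 1) / (a_post + b_post - 2)

k, n = 7, 10            # hypothetical data
mle = k / n
map_informative = map_estimate(k, n, a=3, b=3)  # prior pulls toward 0.5
map_uniform = map_estimate(k, n, a=1, b=1)      # flat prior: no penalty
post_mean = posterior_mean(k, n, a=3, b=3)
print(mle, map_informative, post_mean, map_uniform)
```

With the flat Beta(1, 1) prior the penalty vanishes and the MAP estimate coincides with the MLE; the Beta(3, 3) prior shrinks the estimate toward 0.5.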

Robust and Nonparametric Methods

- M-estimators generalize maximum likelihood to provide robust estimates

- Huber's M-estimator combines efficiency of MLE with robustness to outliers

- Minimum distance estimation minimizes discrepancy between empirical and theoretical distributions

- Kernel density estimation provides nonparametric alternative to parametric likelihood methods

- Bootstrap resampling estimates sampling distribution of estimators without parametric assumptions

- Rank-based methods offer distribution-free alternatives to likelihood-based inference

- Generalized estimating equations extend quasi-likelihood to correlated data structures
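
A minimal sketch of Huber's M-estimator for a location parameter, using iteratively reweighted averaging. It assumes the data are already on a scale where the threshold `delta = 1.0` is sensible (in practice the residuals are usually divided by a robust scale estimate first); the function name and data are illustrative.

```python
import statistics

def huber_location(sample, delta=1.0, tol=1e-10, max_iter=100):
    """Huber M-estimate of location via iteratively reweighted averaging.

    Residuals within delta get weight 1 (squared-error behaviour);
    larger residuals get weight delta/|residual| (absolute-error behaviour).
    """
    mu = statistics.median(sample)  # robust starting value
    for _ in range(max_iter):
        weights = [1.0 if abs(x - mu) <= delta else delta / abs(x - mu)
                   for x in sample]
        new_mu = sum(w * x for w, x in zip(weights, sample)) / sum(weights)
        if abs(new_mu - mu) < tol:
            return new_mu
        mu = new_mu
    return mu

sample = [1.0, 1.2, 0.8, 1.1, 0.9, 50.0]  # hypothetical data with one outlier
mean_estimate = sum(sample) / len(sample)
huber_estimate = huber_location(sample)
print(mean_estimate, huber_estimate)
```

The sample mean, which is the normal-model MLE, is dragged far toward the outlier, while the Huber estimate caps the outlier's influence at `delta` and stays near the bulk of the data.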