Visual search is a crucial aspect of perception, involving scanning the environment for specific targets among distractors. It encompasses various types, including feature vs. conjunction search and guided vs. unguided search, each with unique cognitive processes and efficiency levels.

Theories like feature integration and guided search explain how we process visual information during searches. Factors such as target-distractor similarity, set size, and display density influence search performance. Understanding these concepts helps us grasp how we navigate our visual world effectively.

Types of visual search

- Visual search involves scanning the environment for a specific target among distractors

- Different types of visual search vary in their complexity and the cognitive processes involved

Feature vs conjunction search

- Feature search: the target is defined by a single feature (color, orientation, size)

- Example: Finding a red circle among blue circles

- Conjunction search: the target is defined by a combination of two or more features

- Example: Finding a red square among red circles and blue squares

- Feature search is typically faster and more efficient than conjunction search

- Feature search is often attributed to parallel processing, conjunction search to serial processing

Guided vs unguided search

- Guided search utilizes prior knowledge or contextual cues to direct attention

- Example: Knowing the target is red narrows down search space

- Unguided search lacks prior knowledge, requiring exhaustive scanning of the environment

- Example: Searching for an unknown target in a cluttered scene

- Guided search is more efficient because it reduces the number of items that must be inspected

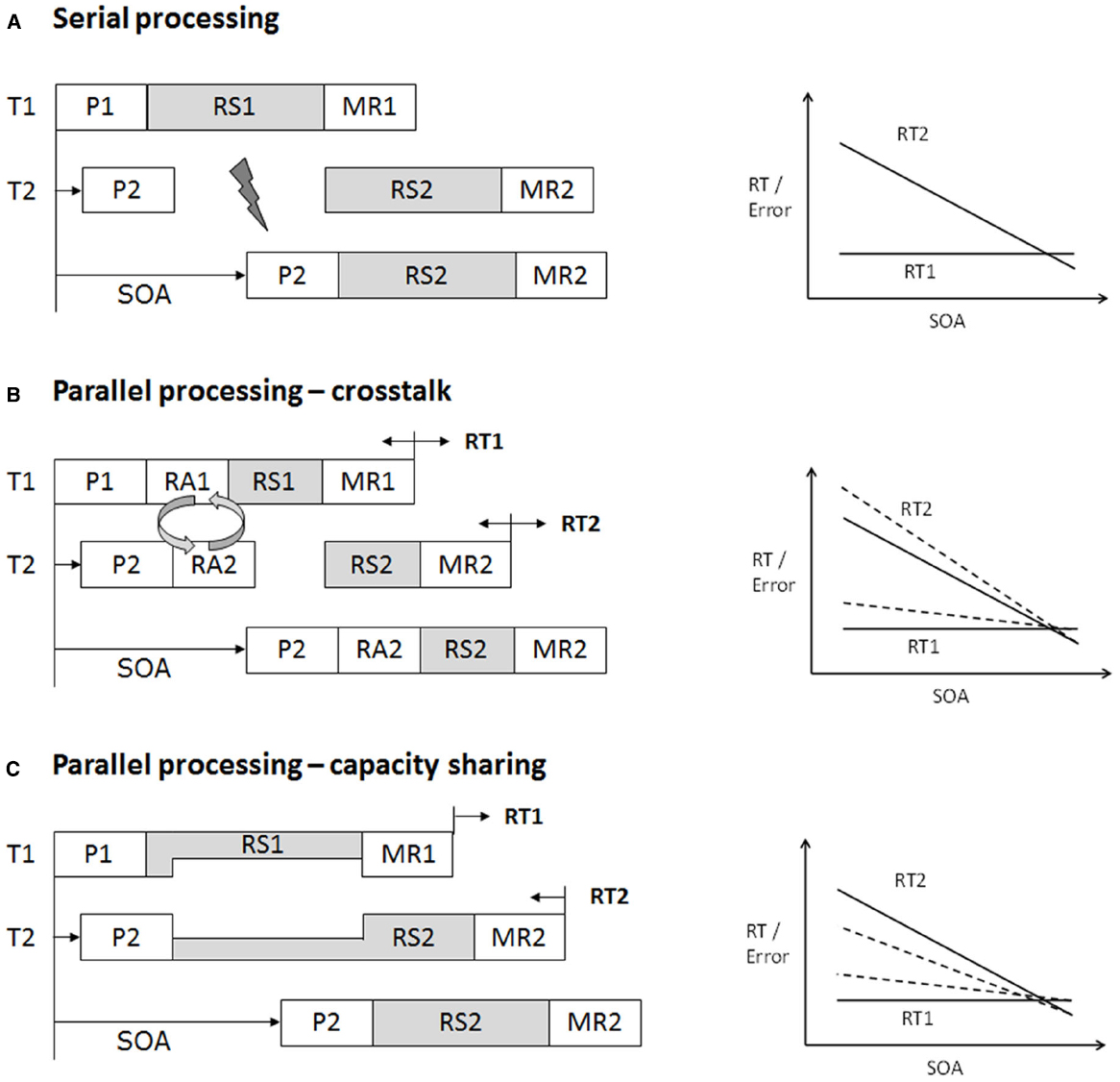

Serial vs parallel processing

- Serial processing: items are searched one at a time, in sequence

- Reaction time increases linearly with set size

- Typically associated with conjunction search and unguided search

- Parallel processing: items are searched simultaneously

- Reaction time relatively unaffected by set size

- Typically associated with feature search and guided search

- Most visual search tasks involve a combination of serial and parallel processing
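The contrast between the two modes can be sketched as a toy reaction-time model. This is an illustration only: the function names, the 400 ms baseline, and the 50 ms per-item cost are assumed values chosen for readability, not figures from any study.

```python
def serial_rt(set_size, base_ms=400.0, per_item_ms=50.0):
    """Serial search: each item adds a fixed inspection cost (assumed values)."""
    return base_ms + per_item_ms * set_size


def parallel_rt(set_size, base_ms=400.0):
    """Parallel search: all items are processed at once, so set size adds nothing."""
    return base_ms


# Compare predicted reaction times across a few display sizes.
for n in (4, 8, 16):
    print(f"set size {n:2d}: serial {serial_rt(n):.0f} ms, parallel {parallel_rt(n):.0f} ms")
```

In this sketch the serial prediction grows by the same amount for every added item, while the parallel prediction stays flat. Real search tasks usually fall somewhere between these two extremes.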

Theories of visual search

Feature integration theory

- Proposed by Anne Treisman and Garry Gelade in 1980

- Suggests that features (color, shape, size) are initially processed in parallel

- Attention is required to bind features into coherent objects

- Explains differences between feature and conjunction search

- Feature search: parallel processing of individual features

- Conjunction search: serial processing to bind features

Guided search theory

- Proposed by Jeremy Wolfe and colleagues in 1989 and revised through the 1990s

- Builds upon feature integration theory

- Suggests that attention is guided by preattentive processing of features

- Top-down (goal-driven) and bottom-up (stimulus-driven) factors influence guidance

- Top-down: Knowledge of target features

- Bottom-up: Salience of stimuli based on features

- Explains efficient search for targets defined by multiple features
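The idea of combining top-down and bottom-up guidance can be sketched as a simple priority ranking. This is a minimal sketch, not Wolfe's actual model: the scoring rule (count of matching target features plus a salience value), the weights, and the names `top_down_score` and `rank_by_priority` are all assumptions made for illustration.

```python
def top_down_score(item, target):
    """Count how many of the known target features this item shares."""
    return sum(1 for feature, value in target.items() if item.get(feature) == value)


def rank_by_priority(items, target, w_top_down=1.0, w_bottom_up=1.0):
    """Order items by a weighted sum of top-down match and bottom-up salience."""
    def priority(item):
        return w_top_down * top_down_score(item, target) + w_bottom_up * item["salience"]
    return sorted(items, key=priority, reverse=True)


# Conjunction display: a red square among red circles and blue squares.
# Salience values are hypothetical and equal, so only top-down guidance differs.
display = [
    {"id": 0, "color": "red", "shape": "circle", "salience": 0.3},
    {"id": 1, "color": "blue", "shape": "square", "salience": 0.3},
    {"id": 2, "color": "red", "shape": "square", "salience": 0.3},
    {"id": 3, "color": "red", "shape": "circle", "salience": 0.3},
    {"id": 4, "color": "blue", "shape": "square", "salience": 0.3},
]
target = {"color": "red", "shape": "square"}

visit_order = [item["id"] for item in rank_by_priority(display, target)]
print(visit_order)
```

Because the target matches on both color and shape while each distractor matches on only one, the target receives the highest priority and is visited first. This is one way to picture how guidance can make a conjunction search efficient.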

Attentional engagement theory

- Proposed by Duncan and Humphreys in 1989 and elaborated in the early 1990s

- Emphasizes the role of similarity between target and distractors

- Greater target-distractor similarity slows search, as more attentional resources are required to distinguish them

- Greater distractor-distractor similarity speeds search, as distractors can be grouped and rejected together

- Explains effects of target-distractor similarity and distractor heterogeneity on search efficiency

Factors affecting visual search

Target-distractor similarity

- Higher similarity between target and distractors slows search

- Example: Finding a T among Ls is harder than finding a T among Os

- Lower similarity speeds search, as target "pops out" from distractors

- Similarity determined by shared features (color, shape, size, orientation)

Distractor heterogeneity

- Heterogeneous (varied) distractors slow search compared to homogeneous (uniform) distractors

- Example: Finding a red circle among blue, green, and yellow circles is harder than finding it among only blue circles

- Heterogeneous distractors require more attentional resources to process and reject

- Homogeneous distractors can be grouped and rejected together

Set size effects

- Increasing the number of items in the display (set size) generally slows search

- The effect is more pronounced for conjunction search and unguided search (serial processing)

- Reaction time increases linearly with set size

- The effect is smaller for feature search and guided search (parallel processing)

- Reaction time relatively unaffected by set size
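Set size effects are commonly summarized by the slope of the line relating reaction time to set size. The sketch below fits that line by ordinary least squares. The reaction times are made-up numbers chosen to illustrate the two patterns, and `search_slope` is a hypothetical helper name.

```python
def search_slope(set_sizes, rts_ms):
    """Least-squares fit of RT = intercept + slope * set_size.

    Returns (slope in ms per item, intercept in ms).
    """
    n = len(set_sizes)
    mean_x = sum(set_sizes) / n
    mean_y = sum(rts_ms) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(set_sizes, rts_ms))
    sxx = sum((x - mean_x) ** 2 for x in set_sizes)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical mean reaction times (ms) at four set sizes.
set_sizes = [4, 8, 16, 32]
conjunction_rts = [600, 700, 900, 1300]  # grows steadily with set size
feature_rts = [450, 452, 449, 451]       # essentially flat

conj_slope, conj_intercept = search_slope(set_sizes, conjunction_rts)
feat_slope, feat_intercept = search_slope(set_sizes, feature_rts)
print(f"conjunction: {conj_slope:.1f} ms/item, intercept {conj_intercept:.0f} ms")
print(f"feature:     {feat_slope:.2f} ms/item, intercept {feat_intercept:.0f} ms")
```

A steep slope indicates that each added item costs extra time, as expected for serial search. A slope near zero indicates that reaction time is largely independent of set size.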

Display density

- Higher display density (items closer together) can slow search

- Crowding effects: Nearby items interfere with target processing

- Example: Finding a target word in densely packed text vs. well-spaced text

- Lower display density (items farther apart) can speed search

- Reduced crowding, easier to isolate and process individual items

Visual search strategies

Systematic vs random scanning

- Systematic scanning involves methodical, orderly search patterns

- Example: Reading a page of text line by line

- More efficient, ensures all areas of the display are searched

- Random scanning involves haphazard, unstructured search patterns

- Example: Glancing around a room without a specific plan

- Less efficient, may result in missing the target or searching the same area multiple times
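The efficiency difference between the two strategies can be illustrated with a small simulation. This is a sketch under strong simplifying assumptions: the display is a list of equally likely locations, systematic scanning visits them in order without revisits, and random scanning picks any location on each fixation with no memory of earlier ones. All function names are hypothetical.

```python
import random


def systematic_fixations(target_index):
    """Scan locations 0, 1, 2, ... in order; the target is found on visit index + 1."""
    return target_index + 1


def random_fixations(n_locations, target_index, rng):
    """Fixate a random location each time (revisits allowed) until the target is hit."""
    count = 0
    while True:
        count += 1
        if rng.randrange(n_locations) == target_index:
            return count


def mean_fixations(n_locations, trials=10000, seed=0):
    """Average number of fixations needed under each strategy."""
    rng = random.Random(seed)
    systematic_total = 0
    random_total = 0
    for _ in range(trials):
        target = rng.randrange(n_locations)
        systematic_total += systematic_fixations(target)
        random_total += random_fixations(n_locations, target, rng)
    return systematic_total / trials, random_total / trials


systematic_mean, random_mean = mean_fixations(n_locations=20)
print(f"systematic: {systematic_mean:.1f} fixations, random: {random_mean:.1f} fixations")
```

Under these assumptions the systematic scanner needs roughly half as many fixations on average, because the random scanner wastes fixations on locations it has already inspected.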

Perceptual grouping

- Grouping similar items together based on Gestalt principles (proximity, similarity, continuity, closure)

- Example: Grouping rows or columns of items in a grid

- Allows for more efficient rejection of distractor groups

- Can also lead to inefficient search if the target is grouped with distractors

Saccadic eye movements

- Rapid, ballistic eye movements that shift gaze between fixation points

- Occur 3-4 times per second during visual search

- Guided by both bottom-up (salience) and top-down (goals) factors

- Larger saccades cover more of the display but may skip over targets

- Smaller saccades are more thorough but slower

Covert vs overt attention

- Covert attention: Shifting attention without moving the eyes

- "Looking out of the corner of your eye"

- Allows for monitoring of the periphery during fixations

- Overt attention: Shifting attention by moving the eyes (saccades)

- Brings items of interest into foveal vision for detailed processing

- Both covert and overt attention play a role in guiding visual search

Neural mechanisms of visual search

Frontal eye fields

- Located in the frontal cortex, in the region of Brodmann area 8

- Involved in controlling eye movements (saccades) during visual search

- Sends signals to superior colliculus to initiate saccades

- Also involved in covert attention shifts

Superior colliculus

- Midbrain structure involved in saccade generation

- Receives input from frontal eye fields and visual cortex

- Represents a "priority map" of the visual field

- Combines bottom-up (salience) and top-down (goals) information

- Guides saccades to the most salient or task-relevant locations

Posterior parietal cortex

- Involved in spatial attention and representation of the visual field

- Contains multiple retinotopic maps of the environment

- Integrates information about object features and locations

- Guides attention to relevant locations during visual search

Occipitotemporal cortex

- Includes visual areas such as V4 and the lateral occipital complex (LOC)

- Involved in processing object features (color, shape, texture)

- Modulated by attention during visual search

- Enhanced processing of attended features and objects

- Interaction with frontal and parietal areas guides attention to relevant features

Applications of visual search

Radiology and medical imaging

- Radiologists search for abnormalities (tumors, fractures) in medical images (X-rays, CT scans, MRIs)

- Requires detecting subtle targets among complex, cluttered backgrounds

- Guided by knowledge of anatomy and disease appearance

- Errors can have serious consequences for patient care

Airport security screening

- Security officers search for prohibited items (weapons, explosives) in luggage X-rays

- Performed under time pressure, with a high volume of items to screen

- Aided by computer algorithms that highlight potential threats

- False alarms can slow down the screening process

Human-computer interaction

- Designing user interfaces that facilitate efficient visual search

- Arranging icons, menus, and buttons for easy scanning

- Using color, size, and spacing to highlight important items

- Poorly designed interfaces can lead to frustration and errors

- Example: Finding the desired app on a cluttered smartphone screen

Advertising and marketing

- Designing ads and product packaging that stand out from competitors

- Using salient colors, shapes, and slogans to attract attention

- Placing key information (brand name, price) in prominent locations

- Goal is to guide consumer attention to the advertised product

- Example: Finding a specific brand of cereal on a crowded supermarket shelf

Individual differences in visual search

Expertise and training effects

- Experts in a domain (radiologists, airport security screeners) often show superior visual search performance compared to novices

- More efficient search strategies

- Better knowledge of target features and likely locations

- Training can improve visual search performance

- Learning to prioritize relevant features and ignore distractors

- Developing systematic scanning patterns

Age-related changes

- Visual search performance tends to decline with age

- Slower reaction times and higher error rates

- Particularly for complex tasks (conjunction search, unguided search)

- May be due to general slowing of cognitive processing or reduced visual acuity

- Can be partially compensated for by experience and strategy use

Attentional disorders (e.g., ADHD)

- Individuals with attentional disorders may show impaired visual search performance

- Difficulty maintaining focus on the task

- Increased distractibility by irrelevant stimuli

- May benefit from cues or prompts to guide attention

- Medication (stimulants) can improve attentional control and search efficiency

Cultural differences

- Some studies suggest cultural differences in visual search patterns and strategies

- East Asians tend to have a more holistic, context-dependent processing style

- May excel at detecting targets among heterogeneous distractors

- Westerners tend to have a more analytic, object-focused processing style

- May excel at detecting salient targets among homogeneous distractors

- Differences may reflect cultural variations in perceptual and attentional processes

- Important to consider in designing interfaces and displays for global audiences