Convergence of the Bisection Method

Fundamental Concepts of Convergence

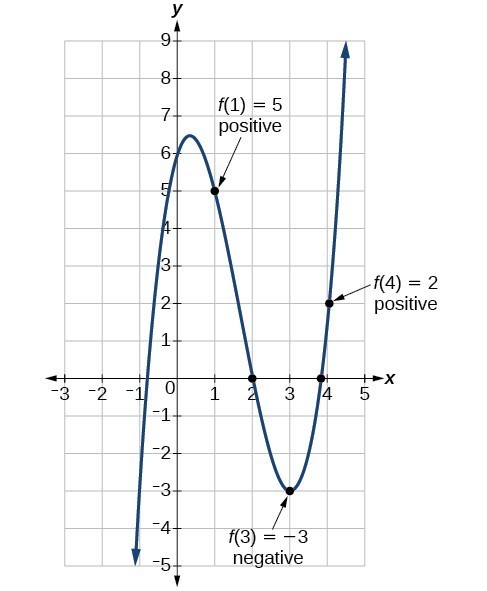

In numerical analysis, a method converges if its approximations approach the true solution as the number of iterations increases. The bisection method is guaranteed to converge to a root of any continuous function on an interval , provided and have opposite signs.

This guarantee comes from the Intermediate Value Theorem: if a continuous function takes opposite signs at two endpoints, it must cross zero somewhere between them. Each bisection step cuts the interval in half, producing a nested sequence of intervals that shrinks down to the root.

The bisection method has linear convergence, meaning the error is roughly halved with each iteration. More precisely, the relationship is:

where is the th midpoint approximation and is the true root.

Mathematical Properties and Implications

- The method always converges for continuous functions on a valid interval. No exceptions.

- The convergence rate stays constant at per step, regardless of how the function behaves. Compare this to Newton's method, whose convergence rate depends heavily on the function.

- Because convergence is guaranteed, bisection is often used to generate a good starting approximation for faster methods like Newton's.

- The downside: linear convergence is slow. You need roughly 3.3 additional iterations for each extra decimal digit of accuracy (since ).

- For computationally expensive function evaluations, this slow rate can become a real bottleneck.

Iterations for Desired Accuracy

Deriving the Iteration Formula

The key question is: how many iterations do you need to guarantee a certain accuracy? Here's the derivation, step by step.

-

You start with an interval of length .

-

After iterations, the interval has been halved times, so its length is .

-

The root lies somewhere in this interval, so the midpoint approximation is at most half the interval length away from the root. That gives an error bound of .

-

To guarantee this error is at most , you need:

-

Rearranging:

-

Taking of both sides:

-

Since must be a whole number, apply the ceiling function:

You'll also see this written more simply as , which gives a slightly conservative count but is easier to remember.

Example: Suppose and you want accuracy . Then , so you need at least 21 iterations.

Practical Applications and Considerations

- This formula lets you know the computational cost before you start iterating. That's valuable for real-time systems or when function evaluations are expensive.

- Notice the relationship is logarithmic: doubling the desired accuracy (halving ) costs only one extra iteration, not double the work.

- The formula also makes it easy to compare bisection's cost against other methods for a specific problem.

- In practice, you can use this count to set a reasonable maximum iteration limit in your code.

Rate of Convergence Analysis

Characteristics of Linear Convergence

The bisection method has order of convergence equal to 1 (linear convergence). The error satisfies:

where is the error at iteration and .

What does "linear" actually mean here? The error shrinks by a constant factor each step. Contrast this with quadratic convergence (like Newton's method), where the number of correct digits roughly doubles each step. With bisection, you gain about one binary digit of accuracy per iteration, which translates to roughly one decimal digit every 3.3 iterations.

This constant, predictable rate is both a strength and a weakness:

- Strength: Performance is completely predictable. You always know how many steps you need.

- Weakness: For high-precision work (say, 15 decimal digits), you might need 50+ iterations, while Newton's method could get there in 5-6.

Comparative Analysis and Implications

- Bisection's reliability makes it a natural "safety net." Many production algorithms start with a few bisection steps to get close, then switch to a faster method.

- For highly nonlinear or poorly behaved functions where Newton's method might oscillate or diverge, bisection just keeps working.

- The constant convergence rate means performance doesn't degrade for difficult functions. You get the same error reduction whether the function is nearly linear or wildly oscillating.

- In robust algorithm design, the trade-off between guaranteed convergence and speed often favors using bisection as a fallback.

Error Bounds and Stopping Criteria

Error Estimation Techniques

There are two main ways to estimate the error during bisection:

A priori bound (known before you start): After iterations, the error satisfies:

This bound depends only on the initial interval and the iteration count. It tells you the worst-case error.

A posteriori estimate (computed during iteration): The relative difference between successive approximations gives a practical error estimate:

This is useful because it doesn't require knowing the true root . However, it's an estimate, not a guaranteed bound.

Both techniques let you monitor accuracy as you go and decide when to stop.

Implementing Effective Stopping Criteria

In practice, you'll typically combine multiple stopping criteria. The iteration stops when any of these conditions is met:

-

Interval tolerance: (the half-interval is smaller than your desired accuracy)

-

Function value test: for some small (the midpoint is close to being an actual root)

-

Maximum iterations: (prevents infinite loops if something goes wrong)

A few things to watch out for:

- Floating-point limits: On a computer, you can't achieve accuracy beyond machine epsilon (about for double precision). Setting smaller than this is pointless.

- The function value test alone can be misleading. If the function is very steep near the root, might be large even when is close to . Conversely, if the function is very flat, can be tiny even when is far from the root.

- Always include a maximum iteration count as a failsafe, even if you've calculated the expected number of iterations in advance.

Bisection Method vs Other Algorithms

Convergence Rate Comparison

| Method | Convergence Order | Error Behavior |

|---|---|---|

| Bisection | 1 (linear) | Error halved each step |

| Newton's | 2 (quadratic) | Correct digits roughly double each step |

| Secant | ≈ 1.618 (superlinear) | Faster than linear, slower than quadratic |

| Fixed-point iteration | Varies | Depends on the chosen iteration function |

Newton's method typically reaches a given accuracy in far fewer iterations than bisection. The secant method falls between the two. But these faster methods come with trade-offs in reliability.

Reliability and Applicability Analysis

- Bisection guarantees convergence for any continuous function with a sign change. No other common method offers this level of reliability.

- Newton's method can fail to converge if the initial guess is poor, if the derivative is near zero, or if the function has inflection points near the root.

- Secant method avoids computing derivatives (an advantage for complex functions) but can still diverge for bad starting values.

- Fixed-point methods may converge faster than bisection when they work, but they can also diverge entirely depending on the iteration function chosen.

- Hybrid methods like Brent's method combine bisection's guaranteed convergence with the speed of inverse quadratic interpolation. These are often the best practical choice and are what most numerical libraries use internally.

The right method depends on what you know about your function, how much accuracy you need, and whether you can tolerate the possibility of non-convergence.