Partial Derivatives

Partial derivatives of multivariable functions

A partial derivative measures how a function changes when you nudge one variable while freezing all the others. Think of it like asking: "If I only change $x$, how does the output respond?"

Notation: You'll see partial derivatives written several ways, all meaning the same thing: $\frac{\partial f}{\partial x}$, $f_x$, $\partial_x f$, and $D_x f$.

How to compute a partial derivative:

- Pick the variable you're differentiating with respect to.

- Treat every other variable as a constant.

- Differentiate using the single-variable rules you already know.

Quick examples:

- : Taking , treat as a constant. You get . For , treat as a constant, giving .

- : By the chain rule, and .

- : Both partials give , since the derivative of with respect to either variable is 1.
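
The three-step recipe above can be checked numerically. The sketch below is only an illustration: the function `f(x, y) = x^2 * y + sin(y)` and the helper `partial` are hypothetical and are not the examples listed above. It nudges one variable at a time with a central difference and compares the result with the partials computed by hand.

```python
import math

def partial(f, point, index, h=1e-6):
    """Central-difference estimate of the partial derivative of f at point
    with respect to the variable at position index."""
    up = list(point)
    down = list(point)
    up[index] += h      # nudge only the chosen variable
    down[index] -= h    # every other variable stays frozen
    return (f(*up) - f(*down)) / (2 * h)

# Hypothetical example function (an assumption for illustration):
# f(x, y) = x^2 * y + sin(y)
def f(x, y):
    return x**2 * y + math.sin(y)

x0, y0 = 2.0, 1.0
fx_numeric = partial(f, (x0, y0), 0)   # differentiate with respect to x
fy_numeric = partial(f, (x0, y0), 1)   # differentiate with respect to y

# Hand-computed partials: f_x = 2xy (y treated as a constant),
# f_y = x^2 + cos(y) (x treated as a constant)
fx_exact = 2 * x0 * y0
fy_exact = x0**2 + math.cos(y0)

print(fx_numeric, fx_exact)
print(fy_numeric, fy_exact)
```

The numeric and hand-computed values should agree to several decimal places, which is a quick way to catch a sign or chain-rule mistake.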

The chain rule extends naturally to partial derivatives for composite functions. If $z$ depends on $x$ and $y$, which themselves depend on $s$ and $t$, you chain the partials together. Implicit differentiation also carries over: if a relationship like $F(x, y, z) = 0$ defines $z$ implicitly, you can differentiate both sides with respect to $x$ (treating $z$ as a function of $x$, with $y$ held fixed) to find $\frac{\partial z}{\partial x}$.
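
Written out, and assuming the illustrative names $z = f(x, y)$ with $x = x(s, t)$ and $y = y(s, t)$, the multivariable chain rule reads:

$$\frac{\partial z}{\partial s} = \frac{\partial z}{\partial x}\frac{\partial x}{\partial s} + \frac{\partial z}{\partial y}\frac{\partial y}{\partial s}, \qquad \frac{\partial z}{\partial t} = \frac{\partial z}{\partial x}\frac{\partial x}{\partial t} + \frac{\partial z}{\partial y}\frac{\partial y}{\partial t}$$

For the implicit case, assuming a relation $F(x, y, z) = 0$ with $F_z \neq 0$, differentiating both sides with respect to $x$ gives $F_x + F_z \frac{\partial z}{\partial x} = 0$, so:

$$\frac{\partial z}{\partial x} = -\frac{F_x}{F_z}$$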

Interpretation of partial derivatives

Geometrically, $f_x(a, b)$ is the slope of the tangent line to the surface $z = f(x, y)$ at the point $(a, b, f(a, b))$, sliced along the direction of the $x$-axis (with $y$ held fixed). Similarly, $f_y(a, b)$ gives the slope along the $y$-direction.

Partial derivatives connect directly to directional derivatives, which measure rates of change in any direction, not just along the coordinate axes.

Applications show up everywhere:

- Physics: Velocity components in 3D motion, where position depends on time and spatial coordinates.

- Economics: Marginal cost or marginal revenue, measuring how cost changes when you adjust one input (like labor) while holding others fixed.

- Sensitivity analysis: Determining which variable has the biggest impact on the output.

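The linear approximation formula ties this together practically. For small changes $\Delta x$ and $\Delta y$:

$$\Delta f \approx \frac{\partial f}{\partial x}\,\Delta x + \frac{\partial f}{\partial y}\,\Delta y$$

This tells you that the total change in $f$ is approximately the sum of each partial derivative times the corresponding small change. It's the multivariable version of the single-variable approximation $\Delta f \approx f'(x)\,\Delta x$.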
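
To see how good the approximation is, the sketch below compares the actual change in a function with the linear estimate. The function `f(x, y) = x^2 * y + sin(y)`, the base point, and the step sizes are all assumptions chosen for illustration.

```python
import math

# Hypothetical example function: f(x, y) = x^2 * y + sin(y)
def f(x, y):
    return x**2 * y + math.sin(y)

# Hand-computed partial derivatives of f
def f_x(x, y):
    return 2 * x * y

def f_y(x, y):
    return x**2 + math.cos(y)

x0, y0 = 2.0, 1.0      # base point
dx, dy = 0.01, -0.02   # small changes in each variable

# Exact change in f versus the linear (first-order) estimate
actual_change = f(x0 + dx, y0 + dy) - f(x0, y0)
linear_estimate = f_x(x0, y0) * dx + f_y(x0, y0) * dy

print(actual_change, linear_estimate)
```

The two numbers differ only by terms of second order in the step sizes, so shrinking `dx` and `dy` should shrink the gap roughly quadratically.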

The Gradient and Higher-Order Derivatives

Higher-order partial derivatives

Just like in single-variable calculus, you can differentiate more than once. Second-order partial derivatives come in two flavors:

- Unmixed: Differentiate twice with respect to the same variable, e.g., $f_{xx} = \frac{\partial^2 f}{\partial x^2}$

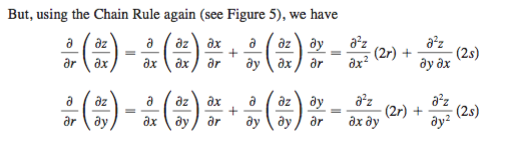

- Mixed: Differentiate with respect to different variables, e.g., $f_{xy} = \frac{\partial^2 f}{\partial y\,\partial x}$ (differentiate first with respect to $x$, then $y$)

Clairaut's Theorem: If $f_{xy}$ and $f_{yx}$ are both continuous, then $f_{xy} = f_{yx}$. In practice, this holds for nearly every function you'll encounter in this course, so the order of mixed partials usually doesn't matter.

What second-order partials tell you:

- $f_{xx} > 0$ at a point means the function is concave up in the $x$-direction there; $f_{xx} < 0$ means concave down.

- Mixed partials capture how the slope in one direction changes as you move in the other direction.

- These derivatives are essential for the second derivative test in optimization and for building Taylor series expansions of multivariable functions.
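
A quick numerical check of these ideas is sketched below. It assumes a hypothetical smooth function `f(x, y) = x^3 * y^2 + sin(x * y)` and builds second-order partials by nesting central differences; the helper names `d_dx` and `d_dy` are made up for this example.

```python
import math

# Hypothetical smooth example function (an assumption for illustration)
def f(x, y):
    return x**3 * y**2 + math.sin(x * y)

def d_dx(g, h=1e-4):
    """Return a function approximating the partial derivative of g in x."""
    return lambda x, y: (g(x + h, y) - g(x - h, y)) / (2 * h)

def d_dy(g, h=1e-4):
    """Return a function approximating the partial derivative of g in y."""
    return lambda x, y: (g(x, y + h) - g(x, y - h)) / (2 * h)

f_xy = d_dy(d_dx(f))   # differentiate in x first, then in y
f_yx = d_dx(d_dy(f))   # differentiate in y first, then in x
f_xx = d_dx(d_dx(f))   # unmixed second partial in x

x0, y0 = 1.0, 0.5
mixed_xy = f_xy(x0, y0)
mixed_yx = f_yx(x0, y0)
unmixed_xx = f_xx(x0, y0)

print(mixed_xy, mixed_yx)   # the two mixed partials should agree
print(unmixed_xx)           # the sign indicates concavity in the x-direction
```

Because this function has continuous second partials, the two mixed values agree up to rounding, as Clairaut's Theorem predicts. Nested finite differences amplify rounding error, so the step `h` here is deliberately larger than one would use for a first derivative.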

Gradient vector computation and geometry

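The gradient packages all the partial derivatives of a function into a single vector:

$$\nabla f = \left\langle \frac{\partial f}{\partial x}, \frac{\partial f}{\partial y}, \frac{\partial f}{\partial z} \right\rangle$$

For a function of two variables, drop the $z$-component.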

Key properties of the gradient:

- It points in the direction of steepest ascent of $f$. If you're standing on a hillside and want to go uphill as fast as possible, walk in the direction of $\nabla f$.

- Its magnitude $\|\nabla f\|$ gives the rate of steepest ascent. A large magnitude means the function is changing rapidly.

- It is perpendicular to level curves (in 2D) and level surfaces (in 3D). This is why the gradient provides a normal vector to a surface at any point.

Connection to directional derivatives: The directional derivative of $f$ in the direction of a unit vector $\mathbf{u}$ is:

$$D_{\mathbf{u}} f = \nabla f \cdot \mathbf{u}$$

This dot product is maximized when $\mathbf{u}$ points in the same direction as $\nabla f$ (confirming the steepest ascent property) and equals zero when $\mathbf{u}$ is tangent to a level curve (no change along a contour).
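
These properties can be illustrated with a small sketch. It assumes a hypothetical function `f(x, y) = x^2 + 3*y^2` with a hand-computed gradient, evaluates the directional derivative along the gradient and along a perpendicular direction, and scans unit vectors around the circle to confirm that none beats the gradient direction.

```python
import math

# Hypothetical example: f(x, y) = x^2 + 3*y^2, with its hand-computed gradient
def f(x, y):
    return x**2 + 3 * y**2

def grad_f(x, y):
    return (2 * x, 6 * y)

def directional_derivative(grad, u):
    """Dot product of the gradient with a unit vector u."""
    return grad[0] * u[0] + grad[1] * u[1]

g = grad_f(1.0, 1.0)
g_norm = math.hypot(g[0], g[1])   # magnitude of the gradient

u_steepest = (g[0] / g_norm, g[1] / g_norm)   # unit vector along the gradient
u_tangent = (-u_steepest[1], u_steepest[0])   # rotated 90 degrees: tangent to the level curve

rate_along_gradient = directional_derivative(g, u_steepest)
rate_along_tangent = directional_derivative(g, u_tangent)

# Scan 360 unit vectors and record the largest directional derivative found
best_scanned = max(
    directional_derivative(g, (math.cos(t), math.sin(t)))
    for t in (2 * math.pi * k / 360 for k in range(360))
)

print(rate_along_gradient, g_norm)   # these two should match
print(rate_along_tangent)            # should be essentially zero
print(best_scanned)                  # should not exceed g_norm
```

The rate along the gradient equals the gradient's magnitude, and the rate along the tangent direction vanishes, matching the two bullet-point properties above.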

Where the gradient shows up:

- Optimization: Setting $\nabla f = \mathbf{0}$ finds critical points where the function could have a maximum, minimum, or saddle point.

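- Conservative vector fields: A vector field $\mathbf{F}$ is conservative if $\mathbf{F} = \nabla f$ for some scalar function $f$. One test for this is checking that the curl of $\mathbf{F}$ is zero; zero curl is always necessary, and it is sufficient when the domain is simply connected.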
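
The curl test can be sketched numerically in two dimensions, where the curl reduces to the scalar $\frac{\partial Q}{\partial x} - \frac{\partial P}{\partial y}$ for a field $\mathbf{F} = \langle P, Q \rangle$. The two fields below are assumptions chosen for illustration: one is the gradient of a hypothetical potential `f(x, y) = x^2 * y + sin(y)`, the other is a purely rotational field.

```python
import math

def scalar_curl(P, Q, x, y, h=1e-6):
    """Central-difference estimate of dQ/dx - dP/dy for the 2D field (P, Q)."""
    dQ_dx = (Q(x + h, y) - Q(x - h, y)) / (2 * h)
    dP_dy = (P(x, y + h) - P(x, y - h)) / (2 * h)
    return dQ_dx - dP_dy

# Gradient of the hypothetical potential f(x, y) = x^2 * y + sin(y)
def grad_P(x, y):
    return 2 * x * y             # partial of f with respect to x

def grad_Q(x, y):
    return x**2 + math.cos(y)    # partial of f with respect to y

# A rotational field (-y, x), which is not the gradient of any scalar function
def rot_P(x, y):
    return -y

def rot_Q(x, y):
    return x

curl_gradient_field = scalar_curl(grad_P, grad_Q, 1.5, -0.5)
curl_rotational_field = scalar_curl(rot_P, rot_Q, 1.5, -0.5)

print(curl_gradient_field)     # essentially zero for a gradient field
print(curl_rotational_field)   # nonzero, so this field cannot be conservative
```

A nonzero curl rules out a potential function outright. A zero curl at one sample point is only suggestive, since the test has to hold everywhere on the domain.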