Steepest descent is a fundamental optimization method that moves towards the minimum of a function by following the negative gradient. It's simple yet powerful, making it a cornerstone of many optimization algorithms used in machine learning and other fields.

While steepest descent can be effective, it has limitations. It may converge slowly for ill-conditioned problems and struggle with saddle points. Understanding its strengths and weaknesses is crucial for applying it effectively and knowing when to use more advanced gradient-based methods.

Steepest Descent Optimization

Concept and Motivation

- Steepest descent method iteratively finds local minima of differentiable functions

- Moves in direction of negative gradient representing steepest descent at each point

- Updates current solution by taking steps proportional to negative gradient

- Useful for unconstrained optimization problems with continuous, differentiable objective functions

- Simplicity and low computational cost per iteration make it attractive for large-scale problems

- Applies to both convex and non-convex optimization problems (may converge to local minima in non-convex cases)

- Assumes moving in direction of steepest descent leads to function minimum

Applications and Considerations

- Particularly effective for quadratic or nearly quadratic objective functions

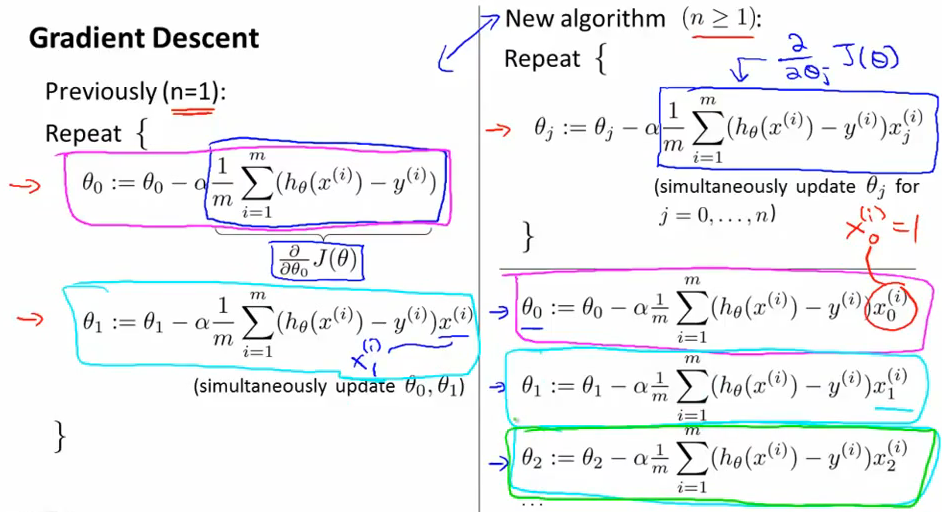

- Widely used in machine learning for training neural networks (backpropagation)

- Applied in image processing for denoising and image reconstruction

- Utilized in control systems for parameter optimization

- Employed in financial modeling for portfolio optimization

- Considerations when using steepest descent

- Function landscape (convexity, smoothness)

- Problem dimensionality

- Computational resources available

- Required accuracy of solution

Implementing Steepest Descent

Algorithm Components

- Compute gradient of objective function at each iteration

- Select initial starting point (random initialization or domain-specific heuristics)

- Specify stopping criterion (maximum iterations or change in function value tolerance)

- Determine step size (learning rate) affecting convergence

- Fixed step sizes (simple but may lead to slow convergence)

- Adaptive step size methods (line search, trust region strategies)

- Basic update rule

- current point

- step size

- gradient at

Implementation Techniques

- Approximate gradients using numerical techniques (finite difference methods)

- Include safeguards against numerical instabilities

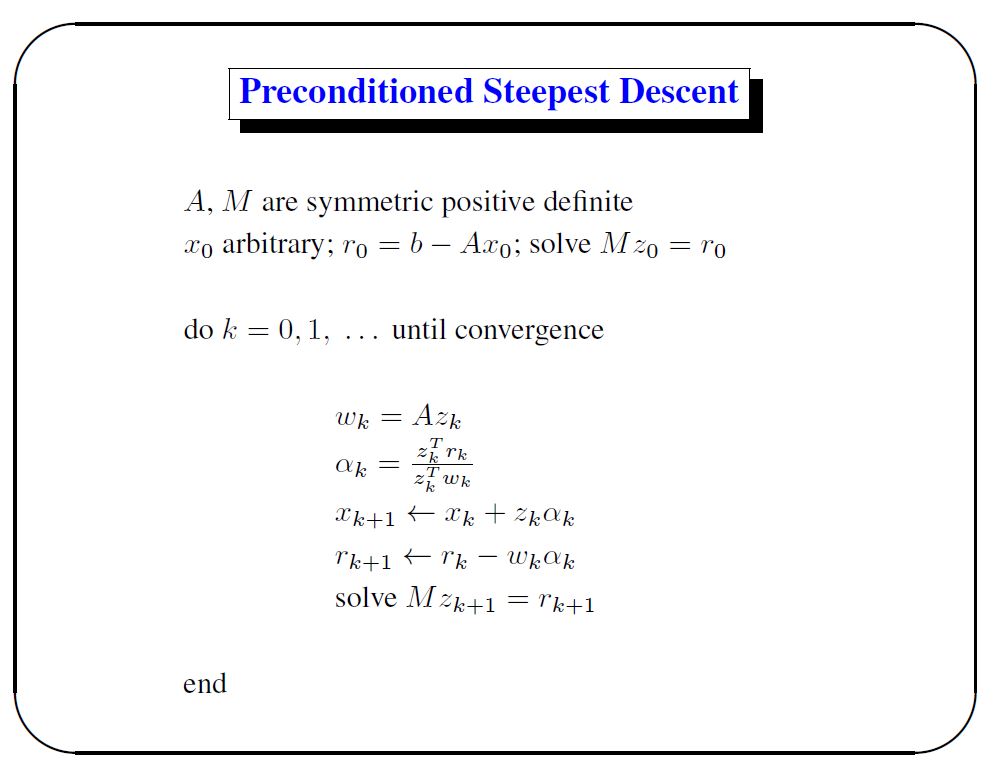

- Handle ill-conditioned problems (preconditioning, regularization)

- Use vectorized implementations for high-dimensional problems

- Implement backtracking line search for adaptive step size

- Start with large step size

- Reduce until sufficient decrease condition met

- Incorporate momentum terms to accelerate convergence

- Implement early stopping to prevent overfitting in machine learning applications

Convergence Properties of Steepest Descent

Convergence Characteristics

- Linear convergence rate (slower than higher-order methods)

- Guaranteed convergence for strictly convex functions with Lipschitz continuous gradients

- Zigzag phenomenon slows convergence in ill-conditioned problems

- Takes steps nearly orthogonal to optimal direction

- Results in inefficient path to optimum

- Performance sensitive to objective function scaling

- Convergence rate depends on Hessian matrix condition number at optimum

- Quadratic functions worst-case convergence rate related to Hessian eigenvalue ratio

- Struggles with saddle points and plateau regions

Factors Affecting Convergence

- Initial starting point selection impacts convergence speed

- Step size choice critical for balancing convergence speed and stability

- Too large may cause overshooting and divergence

- Too small leads to slow convergence

- Problem dimensionality affects convergence rate

- Higher dimensions generally require more iterations

- Function curvature influences convergence behavior

- Highly curved regions may cause slow progress

- Noise in gradient estimates can impact convergence (stochastic settings)

Steepest Descent vs Other Techniques

Comparison with Newton's Method

- Steepest descent less efficient than Newton's method for smooth, well-conditioned problems

- Newton's method requires second-order derivative information (Hessian matrix)

- Steepest descent more suitable for large-scale problems where Hessian computation prohibitive

- Newton's method converges quadratically near optimum, steepest descent linearly

- Steepest descent more robust to initial point selection

Comparison with Other Gradient-Based Methods

- Conjugate gradient methods often outperform steepest descent

- Use information from previous iterations to improve search direction

- Particularly effective for quadratic functions

- Momentum-based variants improve convergence rates

- Heavy ball method adds momentum term to update rule

- Nesterov's accelerated gradient uses "look-ahead" step

- Stochastic gradient descent preferred for large-scale machine learning

- Handles noisy gradients and large datasets efficiently

- Mini-batch processing allows for frequent updates

Applicability to Different Problem Types

- Steepest descent effective for smooth, unconstrained optimization

- Subgradient methods more appropriate for non-smooth optimization

- Proximal gradient methods handle composite optimization problems

- Trust region methods provide better global convergence properties

- Quasi-Newton methods (BFGS, L-BFGS) offer superlinear convergence without explicit Hessian computation