Probability mass functions (PMFs) are the backbone of discrete random variables. They assign probabilities to specific outcomes, helping us understand the likelihood of different events. PMFs are crucial for calculating probabilities, expected values, and variances in discrete scenarios.

In this part of the chapter, we'll learn how to define, construct, and use PMFs. We'll also explore their relationship with cumulative distribution functions (CDFs) and see how they apply to real-world problems involving discrete random variables.

Probability Mass Functions

Definition and Key Properties

- Probability mass function (PMF) assigns probabilities to discrete random variable outcomes

- Defined exclusively for discrete random variables with countable outcome sets

- Sum of all probabilities in a PMF equals 1, representing total probability

- PMFs always non-negative, assigning probabilities ≥ 0 to each outcome

- Support of a PMF encompasses all possible values with non-zero probability

- Adheres to probability axioms

  - Non-negativity: All probabilities ≥ 0

  - Additivity: Probability of union of disjoint events equals sum of individual probabilities

  - Normalization: Total probability equals 1

Mathematical Representation and Examples

- Typically expressed as $p(x) = P(X = x)$, where $X$ represents the random variable and $x$ a specific value

- Bernoulli distribution PMF example: $$P(X = x) = \begin{cases} p & \text{if } x = 1 \\ 1-p & \text{if } x = 0 \end{cases}$$

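- Binomial distribution PMF example: $P(X = x) = \binom{n}{x} p^x (1-p)^{n-x}$, where $n$ is the number of trials, $x$ the number of successes, and $p$ the probability of success on each trial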

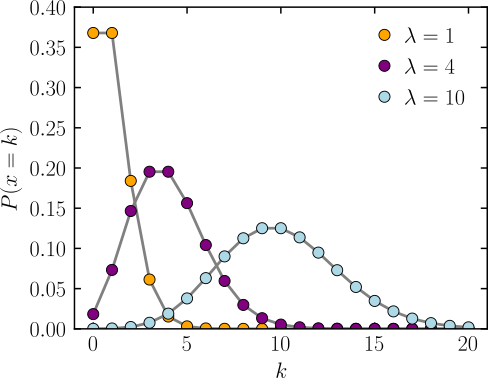

- Poisson distribution PMF example: $P(X = x) = \frac{\lambda^x e^{-\lambda}}{x!}$ for $x = 0, 1, 2, \dots$, where $\lambda$ is the average rate of events
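
The three standard PMFs above can be evaluated directly with Python's standard library. The following is a minimal sketch, not a reference implementation; the function names `bernoulli_pmf`, `binomial_pmf`, and `poisson_pmf` are illustrative choices rather than part of any library.

```python
from math import comb, exp, factorial


def bernoulli_pmf(x: int, p: float) -> float:
    """P(X = x) for a single trial with success probability p."""
    if x == 1:
        return p
    if x == 0:
        return 1.0 - p
    return 0.0  # values outside the support {0, 1} have probability 0


def binomial_pmf(x: int, n: int, p: float) -> float:
    """P(X = x): probability of exactly x successes in n independent trials."""
    if not 0 <= x <= n:
        return 0.0
    return comb(n, x) * p**x * (1.0 - p) ** (n - x)


def poisson_pmf(x: int, lam: float) -> float:
    """P(X = x) for a Poisson variable with average rate lam."""
    if x < 0:
        return 0.0
    return lam**x * exp(-lam) / factorial(x)


# Summing a PMF over its whole support should give 1 (up to rounding).
binomial_total = sum(binomial_pmf(x, 10, 0.3) for x in range(11))
```

Returning 0 outside the support keeps each function defined for every integer input, which makes it safe to sum over ranges that extend past the support.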

Calculating Probabilities with PMFs

Single and Multiple Outcome Probabilities

- Calculate single outcome probability by evaluating PMF at specific value

- Compute probability for outcome ranges by summing PMF values over desired outcomes

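- Example: Fair six-sided die

  - $P(X = 4) = \frac{1}{6}$ (single outcome)

  - $P(X \leq 3) = P(X = 1) + P(X = 2) + P(X = 3) = \frac{3}{6} = \frac{1}{2}$ (multiple outcomes)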

- Use multiplication for independent random variables: $P(X = x, Y = y) = P(X = x)\,P(Y = y)$

- Apply conditional probability formulas for dependent events: $P(X = x \mid Y = y) = \frac{P(X = x, Y = y)}{P(Y = y)}$, provided $P(Y = y) > 0$
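
As a rough illustration of these calculations, the sketch below stores the PMF of a fair die as a dictionary and sums it over an event. The names `die_pmf` and `prob` are assumptions made for this example, and exact fractions are used to avoid floating-point rounding.

```python
from fractions import Fraction

# PMF of a fair six-sided die, stored as {outcome: probability}.
die_pmf = {face: Fraction(1, 6) for face in range(1, 7)}


def prob(pmf, event):
    """Sum the PMF over the outcomes in `event`.

    Outcomes outside the support contribute probability 0.
    """
    return sum((pmf.get(x, Fraction(0)) for x in event), Fraction(0))


p_single = prob(die_pmf, {4})        # single outcome: P(X = 4)
p_even = prob(die_pmf, {2, 4, 6})    # multiple outcomes: P(X is even)

# Two independent dice: the joint probability is the product of the marginals.
p_both_six = die_pmf[6] * die_pmf[6]
```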

Expected Value and Variance Calculations

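- Compute expected value (mean) using PMF: $E[X] = \sum_x x \, P(X = x)$

- Calculate variance using PMF and expected value: $\text{Var}(X) = \sum_x (x - E[X])^2 \, P(X = x)$

- Derive standard deviation as square root of variance: $\sigma = \sqrt{\text{Var}(X)}$

- Example: Expected value for fair six-sided die: $E[X] = \frac{1}{6}(1 + 2 + 3 + 4 + 5 + 6) = \frac{21}{6} = 3.5$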
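
The same quantities can be computed mechanically from a PMF stored as a dictionary. This is a small sketch under that assumption; `expected_value` and `variance` are hypothetical helper names.

```python
from fractions import Fraction
from math import sqrt

# PMF of a fair six-sided die, stored as {outcome: probability}.
die_pmf = {face: Fraction(1, 6) for face in range(1, 7)}


def expected_value(pmf):
    """E[X]: sum of x * P(X = x) over the support."""
    return sum(x * p for x, p in pmf.items())


def variance(pmf):
    """Var(X): sum of (x - E[X])^2 * P(X = x) over the support."""
    mu = expected_value(pmf)
    return sum((x - mu) ** 2 * p for x, p in pmf.items())


mean = expected_value(die_pmf)  # exact value 7/2
var = variance(die_pmf)         # exact value 35/12
std = sqrt(var)                 # standard deviation as a float
```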

Constructing PMFs

Methods for PMF Construction

- Identify all possible outcomes of discrete random variable

- Assign non-negative probabilities to each outcome, ensuring sum equals 1

- Express PMF as function, often using piecewise notation for different variable ranges

- Construct empirical PMF from data by calculating relative frequencies of observed outcomes

- Example: Construct PMF for sum of two fair six-sided dice: $$P(X = x) = \begin{cases} \frac{1}{36} & \text{if } x = 2 \text{ or } x = 12 \\ \frac{2}{36} & \text{if } x = 3 \text{ or } x = 11 \\ \frac{3}{36} & \text{if } x = 4 \text{ or } x = 10 \\ \frac{4}{36} & \text{if } x = 5 \text{ or } x = 9 \\ \frac{5}{36} & \text{if } x = 6 \text{ or } x = 8 \\ \frac{6}{36} & \text{if } x = 7 \end{cases}$$
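
Both construction routes, enumerating equally likely outcomes and taking relative frequencies from data, can be sketched in a few lines. The helper name `empirical_pmf` and the `observed` sample are made up purely for illustration.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

# Enumerate all 36 equally likely (die1, die2) pairs and count each sum.
counts = Counter(a + b for a, b in product(range(1, 7), repeat=2))
total = sum(counts.values())
two_dice_pmf = {s: Fraction(c, total) for s, c in sorted(counts.items())}


def empirical_pmf(samples):
    """Relative frequency of each observed value in `samples`."""
    freq = Counter(samples)
    n = len(samples)
    return {value: Fraction(count, n) for value, count in sorted(freq.items())}


# Hypothetical observations, invented for this example only.
observed = [7, 6, 7, 8, 2, 7, 9, 6]
observed_pmf = empirical_pmf(observed)
```

With only a handful of samples the empirical PMF can differ noticeably from the theoretical one; the two are expected to agree more closely as the sample grows.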

Verification and Considerations

- Verify constructed PMF satisfies all valid probability mass function properties

  - Ensure non-negativity of all probabilities

  - Confirm sum of all probabilities equals 1

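- Consider underlying discrete distributions informing PMF shape (discrete uniform, binomial, Poisson); continuous distributions such as the normal or exponential have density functions rather than PMFs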

- Examine symmetry or patterns in data to guide PMF construction

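- Example: Verify PMF for sum of two dice: every probability is non-negative, and $\frac{1 + 2 + 3 + 4 + 5 + 6 + 5 + 4 + 3 + 2 + 1}{36} = \frac{36}{36} = 1$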
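
The two checks can be automated. The sketch below assumes the PMF is a dictionary of outcome-to-probability pairs; `is_valid_pmf` is a hypothetical helper, and the tolerance `tol` is an arbitrary choice that only matters for floating-point inputs.

```python
from fractions import Fraction
from math import isclose


def is_valid_pmf(pmf, tol=1e-9):
    """Return True if all probabilities are >= 0 and they sum to 1."""
    probs = list(pmf.values())
    non_negative = all(p >= 0 for p in probs)
    normalized = isclose(float(sum(probs)), 1.0, abs_tol=tol)
    return non_negative and normalized


# Sum of two fair dice: P(X = s) = (6 - |s - 7|) / 36 for s = 2, ..., 12.
two_dice_pmf = {s: Fraction(6 - abs(s - 7), 36) for s in range(2, 13)}

valid = is_valid_pmf(two_dice_pmf)
invalid_sum = is_valid_pmf({0: 0.5, 1: 0.6})        # sums to 1.1
invalid_negative = is_valid_pmf({0: 1.5, 1: -0.5})  # sums to 1 but has a negative entry
```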

PMFs vs Cumulative Distribution Functions

Relationship and Conversion

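- Derive cumulative distribution function (CDF) from PMF by summing probabilities of values less than or equal to given value: $F(x) = P(X \leq x) = \sum_{t \leq x} P(X = t)$

- CDF is a non-decreasing function that tends to 0 as $x \to -\infty$ and to 1 as $x \to \infty$

- Obtain PMF from CDF by taking difference between consecutive CDF values: $P(X = x_i) = F(x_i) - F(x_{i-1})$, where $x_{i-1}$ is the support value immediately below $x_i$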

- Example: CDF for fair coin toss (H = 1, T = 0): $$F(x) = \begin{cases} 0 & \text{if } x < 0 \\ \frac{1}{2} & \text{if } 0 \leq x < 1 \\ 1 & \text{if } x \geq 1 \end{cases}$$
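
The conversion in both directions amounts to a running sum and a running difference. Here is a minimal sketch, assuming the PMF and CDF are stored as dictionaries keyed by support value; `pmf_to_cdf` and `cdf_to_pmf` are illustrative names.

```python
from fractions import Fraction
from itertools import accumulate


def pmf_to_cdf(pmf):
    """Cumulative sums of the PMF over the sorted support."""
    xs = sorted(pmf)
    running = accumulate(pmf[x] for x in xs)
    return dict(zip(xs, running))


def cdf_to_pmf(cdf):
    """Differences between consecutive CDF values recover the PMF."""
    pmf, previous = {}, 0
    for x in sorted(cdf):
        pmf[x] = cdf[x] - previous
        previous = cdf[x]
    return pmf


# Fair coin toss with H = 1, T = 0.
coin_pmf = {0: Fraction(1, 2), 1: Fraction(1, 2)}
coin_cdf = pmf_to_cdf(coin_pmf)
recovered_pmf = cdf_to_pmf(coin_cdf)
```

The dictionary holds the CDF only at the support values; between them the CDF stays flat at the value of the nearest support point below.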

Characteristics and Applications

- PMF provides probabilities for exact values, CDF for values less than or equal to given value

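- CDF is right-continuous: $F(x)$ includes the probability of the value $x$ itself

- CDF for a discrete random variable is a step function; jumps occur at the PMF support values, and each jump equals the PMF value at that point

- Use CDF to calculate probabilities of ranges efficiently: $P(a < X \leq b) = F(b) - F(a)$

- Example: Calculate probability of rolling 3 or less on fair die using CDF: $P(X \leq 3) = F(3) = \frac{3}{6} = \frac{1}{2}$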
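
To make the step-function behaviour concrete, the sketch below evaluates the CDF of a fair die at any real number and uses it for a range probability. The names `support`, `cumulative`, and `cdf` are assumptions for this example; the binary-search lookup is one possible approach among several.

```python
from bisect import bisect_right
from fractions import Fraction
from itertools import accumulate

# PMF of a fair six-sided die and its cumulative sums over the sorted support.
die_pmf = {face: Fraction(1, 6) for face in range(1, 7)}
support = sorted(die_pmf)
cumulative = list(accumulate(die_pmf[x] for x in support))


def cdf(x):
    """F(x) = P(X <= x) for any real x; constant between support values."""
    i = bisect_right(support, x)  # number of support values <= x
    return cumulative[i - 1] if i > 0 else Fraction(0)


p_at_most_3 = cdf(3)          # P(X <= 3)
p_3_to_5 = cdf(5) - cdf(2)    # P(2 < X <= 5), i.e. P(3 <= X <= 5)
```

Because `bisect_right` counts support values less than or equal to `x`, the lookup includes the probability at `x` itself, matching the right-continuity of the CDF.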