Replication and documentation are crucial in econometrics. They ensure research validity, promote transparency, and allow others to build on existing knowledge. By verifying findings and subjecting studies to scrutiny, these practices enhance credibility and reliability in the field.

Key principles of replicable research include transparency, reproducibility, accessibility, and thorough documentation. Researchers must provide clear information about data sources, methodologies, and analytical procedures. This enables independent verification and fosters a culture of collaboration and accountability.

Importance of replication in econometrics

- Replication serves as a cornerstone of scientific research, allowing independent researchers to verify the validity and accuracy of published findings

- Enables the scientific community to build upon existing knowledge by confirming, extending, or challenging previous results

- Enhances the credibility and reliability of econometric studies by subjecting them to rigorous scrutiny and reducing the risk of errors or misconduct

- Promotes transparency and openness in research, fostering a culture of collaboration and accountability within the field of econometrics

Key principles of replicable research

- Transparency: Providing clear and detailed information about data sources, methodologies, and analytical procedures

- Reproducibility: Ensuring that the original results can be reproduced by independent researchers using the same data and code

- Accessibility: Making data, code, and supporting materials readily available to the research community

- Documentation: Providing comprehensive and well-structured documentation to facilitate understanding and replication of the research

Documentation for replicable analysis

README files

- Serve as an introduction and overview of the replication package

- Provide essential information about the purpose, data, software requirements, and instructions for running the analysis

- Act as a roadmap for navigating the replication materials and understanding the structure of the project
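
The elements listed above can be collected into a simple template. The following is a minimal sketch, assuming a Markdown README; the function name `render_readme`, the section headings, and every value in `project` are hypothetical placeholders rather than a required standard.

```python
def render_readme(info):
    """Render a plain Markdown README from a dictionary of project details."""
    lines = [f"# {info['title']}", "", "## Purpose", info["purpose"], ""]
    lines += ["## Data"] + [f"- {item}" for item in info["data"]] + [""]
    lines += ["## Software requirements"] + [f"- {item}" for item in info["software"]] + [""]
    lines += ["## Instructions"]
    # Number the steps so a replicator can follow them in order.
    lines += [f"{i}. {step}" for i, step in enumerate(info["steps"], start=1)]
    return "\n".join(lines) + "\n"


# All values below are placeholders for illustration.
project = {
    "title": "Replication package for a hypothetical wage study",
    "purpose": "Reproduces every table and figure in the paper.",
    "data": ["data/raw/survey.csv: original survey extract (placeholder name)"],
    "software": ["Python 3.10 or later"],
    "steps": ["Install the listed software.", "Run code/main.py from the project root."],
}
readme = render_readme(project)
print(readme)
```

Journals and data editors often publish their own README templates; where one applies, it should take precedence over an ad hoc layout like this one.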

Codebooks and data dictionaries

- Provide detailed descriptions of variables, their definitions, and coding schemes

- Help researchers understand the content and structure of the dataset

- Facilitate data cleaning, transformation, and analysis by clarifying variable meanings and relationships
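
Part of a codebook can be generated from the data itself and then completed by hand. The sketch below assumes a small CSV with numeric columns and blank cells for missing values; the variable names, descriptions, and toy data are invented for illustration.

```python
import csv
import io

# Hypothetical variable descriptions; in practice these come from the data provider.
DESCRIPTIONS = {
    "wage": "Hourly wage in US dollars",
    "educ": "Years of completed schooling",
    "union": "Union membership (1 = member, 0 = non-member)",
}


def build_codebook(rows, descriptions):
    """Return one entry per variable with its description and basic summary facts."""
    codebook = []
    for name in rows[0].keys():
        values = [row[name] for row in rows]
        # Blank cells are treated as missing; everything else is assumed numeric.
        present = [float(v) for v in values if v != ""]
        codebook.append({
            "variable": name,
            "description": descriptions.get(name, "UNDOCUMENTED"),
            "n_obs": len(present),
            "n_missing": len(values) - len(present),
            "min": min(present) if present else None,
            "max": max(present) if present else None,
        })
    return codebook


# Toy data standing in for a real dataset.
raw = "wage,educ,union\n12.5,12,0\n20.0,16,1\n,14,0\n"
rows = list(csv.DictReader(io.StringIO(raw)))
codebook = build_codebook(rows, DESCRIPTIONS)
for entry in codebook:
    print(entry)
```

Flagging undocumented variables explicitly, as the `"UNDOCUMENTED"` default does here, makes gaps in the codebook visible before the package is shared.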

Version control systems

- Track changes made to the codebase over time (Git is the most widely used example)

- Allow for collaboration and parallel development among multiple researchers

- Provide a record of the evolution of the project and facilitate the identification and resolution of issues
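
One practical use of this record is to stamp each output with the commit that produced it. The sketch below assumes the analysis runs inside a Git working copy with the `git` command available; the helper names `current_commit` and `provenance_header` are hypothetical, and the function falls back to `"unknown"` when Git cannot be queried.

```python
import subprocess


def current_commit(repo_dir="."):
    """Return the current Git commit hash, or "unknown" if it cannot be determined."""
    try:
        result = subprocess.run(
            ["git", "rev-parse", "HEAD"],
            cwd=repo_dir,
            capture_output=True,
            text=True,
            check=True,
        )
    except (OSError, subprocess.CalledProcessError):
        # Git is not installed, or repo_dir is not inside a repository.
        return "unknown"
    return result.stdout.strip()


def provenance_header(repo_dir="."):
    """Build a comment line that can be written at the top of a results file."""
    return f"# Generated from commit: {current_commit(repo_dir)}"


print(provenance_header())
```

A table or log that carries this line can be traced back to the exact version of the code that generated it.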

Organizing replication materials

Folder structure and naming conventions

- Establish a clear and logical folder hierarchy to organize data, code, and documentation

- Use descriptive and consistent naming conventions for files and folders to enhance readability and navigation

- Separate different components of the analysis (data, scripts, outputs) to maintain a clean and organized structure
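
A folder hierarchy along these lines can be created with a few lines of code. The layout below is one possible convention, not a standard; the folder names and the `create_skeleton` helper are assumptions made for illustration, and the demo writes only to a temporary directory.

```python
import tempfile
from pathlib import Path

# Hypothetical layout; adapt the names to the project's own conventions.
LAYOUT = [
    "data/raw",
    "data/processed",
    "code",
    "output/tables",
    "output/figures",
    "docs",
]


def create_skeleton(root, layout=LAYOUT):
    """Create the folder hierarchy under root and return the folders found there."""
    root = Path(root)
    for sub in layout:
        (root / sub).mkdir(parents=True, exist_ok=True)
    readme = root / "README.md"
    if not readme.exists():
        readme.write_text("# Replication package\n", encoding="utf-8")
    return sorted(p.relative_to(root).as_posix() for p in root.rglob("*") if p.is_dir())


# Demonstrate in a temporary directory so nothing is left behind.
with tempfile.TemporaryDirectory() as tmp:
    created = create_skeleton(tmp)
    print(created)
```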

Raw vs processed data

- Distinguish between raw data (original, unaltered datasets) and processed data (cleaned, transformed, or derived datasets)

- Store raw data separately to ensure data integrity and allow for reproducibility of the entire analysis pipeline

- Document any data cleaning, transformation, or aggregation steps applied to the raw data to create the processed datasets
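
One way to check that raw data has stayed unaltered is to record a checksum when the file is first obtained and verify it before each run. This is a minimal sketch using SHA-256 from the standard library; the file name and contents are placeholders, and the helper names are hypothetical.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path):
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_raw(path, expected):
    """Return True if the file still matches the recorded checksum."""
    return sha256_of(path) == expected


with tempfile.TemporaryDirectory() as tmp:
    raw_file = Path(tmp) / "survey_raw.csv"  # hypothetical file name
    raw_file.write_text("id,income\n1,52000\n2,-99\n", encoding="utf-8")
    recorded = sha256_of(raw_file)  # store this value alongside the documentation
    unchanged = verify_raw(raw_file, recorded)

    # Simulate an accidental edit to the raw file.
    raw_file.write_text("id,income\n1,52000\n2,\n", encoding="utf-8")
    changed_detected = not verify_raw(raw_file, recorded)

print(unchanged, changed_detected)
```

Listing the recorded checksums in the README lets replicators confirm that they are starting from the same raw files.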

Script organization and modularity

- Break down the analysis into modular and reusable scripts or functions

- Organize scripts based on their purpose or functionality (data cleaning, analysis, visualization)

- Use clear and descriptive names for scripts and functions to enhance readability and maintainability

- Document the purpose, inputs, and outputs of each script or function to facilitate understanding and reuse
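
The modular structure described above might look like the following sketch, in which each stage is a small documented function and a single entry point runs them in order. The function names, the toy data, and the choice of a sample mean as the "analysis" are illustrative assumptions.

```python
def clean(rows):
    """Input: list of dicts with an 'income' key. Output: rows with non-missing income."""
    return [r for r in rows if r["income"] is not None]


def analyze(rows):
    """Input: cleaned rows. Output: dict with the sample size and mean income."""
    incomes = [r["income"] for r in rows]
    return {"n": len(incomes), "mean_income": sum(incomes) / len(incomes)}


def report(results):
    """Input: results dict from analyze(). Output: a one-line text summary."""
    return f"N = {results['n']}, mean income = {results['mean_income']:.2f}"


def main(rows):
    """Run the full pipeline: clean, then analyze, then report."""
    return report(analyze(clean(rows)))


# Toy data with one missing value.
sample = [{"income": 40000.0}, {"income": None}, {"income": 60000.0}]
summary = main(sample)
print(summary)
```

In a larger project each function would typically live in its own script, with one master script calling them in sequence so that the whole analysis can be rerun with a single command.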

Reproducible computing environments

Containerization with Docker

- Packages the computing environment, including the operating system userland, system dependencies, and libraries (containers share the host's kernel)

- Makes results far more consistent and reproducible across different machines and platforms

- Greatly reduces the manual setup and configuration needed to recreate the computing environment

- Enables easy sharing and deployment of the analysis pipeline

Virtual environments

- Create isolated Python or R environments with specific versions of packages and dependencies

- Prevent conflicts between different projects or analyses that require different package versions

- Facilitate reproducibility by ensuring a consistent and controlled computing environment

- Enable easy management and switching between different environments for different projects
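
Whichever environment tool is used, it helps to record what was actually installed when the results were produced. The sketch below takes such a snapshot using only the Python standard library; the function name `environment_snapshot` is hypothetical, and the output depends entirely on the machine it runs on.

```python
import platform
from importlib import metadata


def environment_snapshot():
    """Return the Python version, platform string, and installed package versions."""
    packages = sorted(
        {
            f"{dist.metadata.get('Name')}=={dist.version}"
            for dist in metadata.distributions()
            if dist.metadata.get("Name")  # skip entries with unreadable metadata
        }
    )
    return {
        "python": platform.python_version(),
        "platform": platform.platform(),
        "packages": packages,
    }


snapshot = environment_snapshot()
print(snapshot["python"], snapshot["platform"])
print(f"{len(snapshot['packages'])} packages recorded")
```

Saving this snapshot next to the results gives replicators a concrete list of versions to install in their own isolated environment.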

Dynamic document generation

Literate programming with R Markdown

- Combines code, documentation, and results in a single document

- Allows for the integration of R code chunks, narrative text, and visualizations

- Enables the generation of dynamic reports, presentations, or websites

- Facilitates reproducibility by embedding the analysis code within the document itself

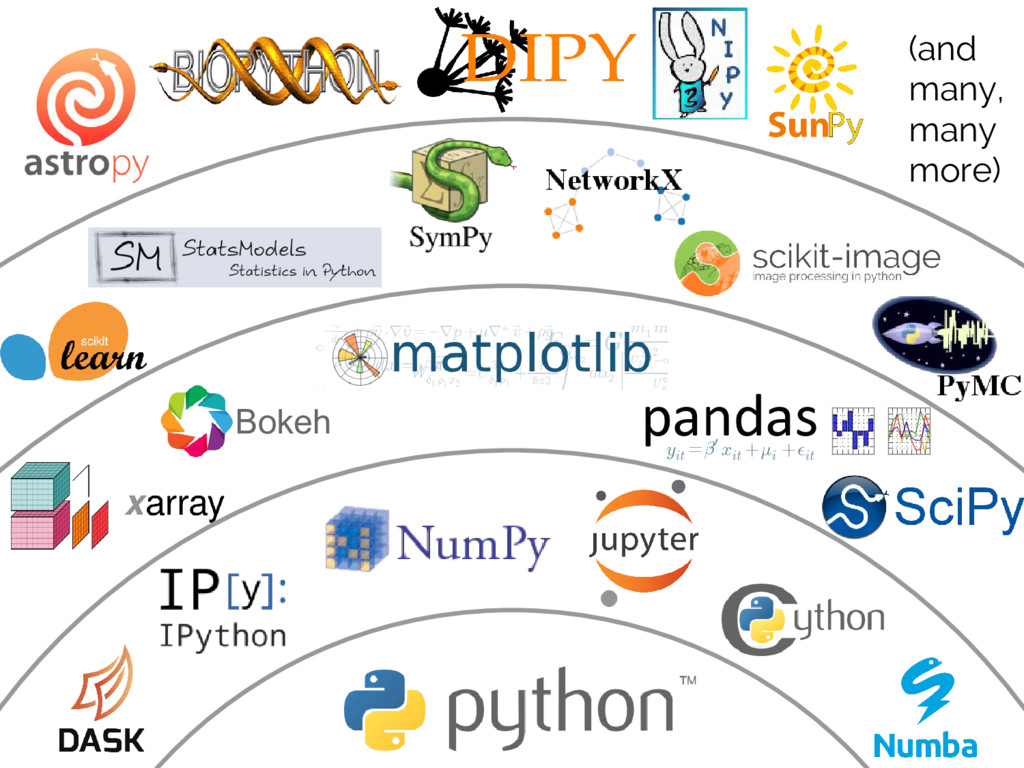

Jupyter Notebooks for Python

- Provide an interactive and web-based environment for combining code, documentation, and outputs

- Support multiple programming languages, including Python, R, and Julia

- Enable the creation of computational narratives that interweave code, explanations, and results

- Facilitate collaboration, sharing, and reproducibility of the analysis

Archiving and sharing replication packages

Data repositories

- Platforms designed for long-term storage and sharing of research data, such as Dataverse and Zenodo

- Provide persistent identifiers (DOIs) for datasets, ensuring stable and citable references

- Offer dataset versioning and access controls to manage data updates and permissions

- Facilitate data discovery and reuse by providing metadata and search capabilities

Code sharing platforms

- Platforms designed for sharing and collaborating on code, such as GitHub and GitLab

- Enable version control, issue tracking, and collaborative development of analysis scripts

- Provide features for documentation, code review, and project management

- Facilitate the sharing and reuse of code by the research community

Replication vs robustness checks

- Replication aims to reproduce the original results using the same data, code, and methods

- Robustness checks involve testing the sensitivity of the results to different assumptions, specifications, or datasets

- Replication focuses on verifying the correctness and reproducibility of the original analysis

- Robustness checks explore the generalizability and stability of the findings under different conditions

- Both replication and robustness checks contribute to the credibility and reliability of econometric research
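
The distinction can be made concrete with a toy example. In the sketch below, the replication step re-estimates a bivariate OLS slope on the same data and compares it with a previously reported value, while the robustness step re-estimates it after dropping one influential observation. The data, the "reported" slope, and the tolerance are all invented for illustration.

```python
def ols_slope(x, y):
    """Slope from a bivariate OLS regression of y on x (with an intercept)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    return sxy / sxx


# Toy data: years of education and hourly wage (invented values).
educ = [8, 10, 12, 12, 14, 16, 16, 18]
wage = [9.0, 11.0, 13.5, 12.5, 15.0, 17.5, 18.5, 30.0]

# Replication: same data, same method, compare with the reported estimate.
REPORTED_SLOPE = 1.726  # hypothetical published value, rounded to 3 decimals
TOLERANCE = 1e-3        # assumed acceptable rounding difference
full_slope = ols_slope(educ, wage)
replicated = abs(full_slope - REPORTED_SLOPE) < TOLERANCE

# Robustness: same method, different sample (drop the highest-wage observation).
trimmed_slope = ols_slope(educ[:-1], wage[:-1])

print(f"replicated: {replicated}")
print(f"full-sample slope: {full_slope:.3f}, trimmed-sample slope: {trimmed_slope:.3f}")
```

Here the estimate replicates, yet it falls noticeably once the last observation is removed. A result can therefore pass replication and still be sensitive to the sample or specification.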

Addressing confidential data in replication

- Some datasets may contain sensitive or confidential information that cannot be publicly shared

- Researchers should provide detailed documentation on how to obtain access to confidential data

- Consider creating synthetic or anonymized datasets that mimic the structure and properties of the original data

- Provide clear instructions on how to replicate the analysis using the restricted data while ensuring compliance with data protection regulations

- Explore secure computing environments or data enclaves that allow controlled access to confidential data for replication purposes
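
As a rough illustration of the synthetic-data idea, the sketch below draws values from a normal distribution matched to the mean and standard deviation of a confidential variable, so that replicators can at least test that the code runs. It is a deliberately simple assumption-laden example: it preserves only those two moments, ignores relationships between variables, and offers no formal privacy guarantee. The values shown are placeholders, not real data.

```python
import random
import statistics


def synthesize(column, n, rng):
    """Draw n synthetic values from a normal distribution fitted to column."""
    mu = statistics.mean(column)
    sigma = statistics.stdev(column)
    return [rng.gauss(mu, sigma) for _ in range(n)]


# Placeholder values standing in for a confidential income variable.
confidential_income = [41000.0, 52000.0, 38500.0, 61000.0, 47250.0]

rng = random.Random(20240101)  # fixed seed so the synthetic file is reproducible
synthetic_income = synthesize(confidential_income, n=1000, rng=rng)

print(f"original mean:  {statistics.mean(confidential_income):.0f}")
print(f"synthetic mean: {statistics.mean(synthetic_income):.0f}")
```

Results obtained from synthetic data of this kind should be labeled as such, since they are meant to exercise the code rather than reproduce the published estimates.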

Pre-analysis plans for transparent research

- Involve pre-registering research hypotheses, design, and analysis plans before data collection or analysis begins

- Help mitigate publication bias, p-hacking, and HARKing (Hypothesizing After the Results are Known)

- Enhances transparency by clearly distinguishing between confirmatory and exploratory analyses

- Provides a public record of the original research intentions and reduces the scope for post-hoc modifications

- Increases the credibility and interpretability of research findings by minimizing researcher degrees of freedom

Open science initiatives in economics

- Promote transparency, reproducibility, and accessibility of research

- Encourage pre-registration of studies, data sharing, and open access publication

- Develop guidelines and standards for replicable research practices, such as the Transparency and Openness Promotion (TOP) Guidelines

- Foster a culture of openness and collaboration within the economics research community

- Provide infrastructure and support for open science practices, such as the Open Science Framework (OSF)

- Advocate for changes in incentive structures and reward systems to recognize and value open science contributions