Sound Perception

Our ears convert sound waves into neural signals that the brain interprets as pitch, loudness, and timbre. Understanding how this works connects the wave physics from earlier units to the biology of hearing.

Key Terms in Sound Perception

Pitch is your perception of a sound's frequency. Higher-frequency sounds (like a flute or whistle) sound higher in pitch, while lower-frequency sounds (like a bass drum or tuba) sound lower. Pitch is subjective, but it maps closely to the measurable frequency of the wave.

Loudness is your perception of a sound's intensity. It depends on both the intensity and the frequency of the sound, which means two sounds with the same intensity can sound different in loudness if they're at different frequencies. More on that below.

Timbre is the characteristic quality that lets you tell apart two instruments playing the same note at the same volume. A guitar and a piano playing the same pitch at the same loudness still sound different because each produces a unique mix of harmonic frequencies (its frequency spectrum).
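To see how two sounds can share a pitch but differ in timbre, here is a minimal Python sketch. It builds two tones with the same fundamental frequency but different harmonic mixes, then measures each harmonic's amplitude with a single-bin discrete Fourier transform. The harmonic amplitudes (`RECIPE_A`, `RECIPE_B`), the 8,000 Hz sample rate, and the function names are hypothetical choices for illustration, not measured instrument data.

```python
import math

# Hypothetical harmonic "recipes": relative amplitudes of harmonics 1, 2, 3.
# Invented for illustration; these are not measurements of real instruments.
RECIPE_A = [1.0, 0.5, 0.25]
RECIPE_B = [1.0, 0.1, 0.6]

SAMPLE_RATE = 8000  # samples per second (assumed)
N = SAMPLE_RATE     # one second of samples, so each DFT bin is exactly 1 Hz wide


def synthesize(f0, amplitudes):
    """Sum of sine waves at f0, 2*f0, 3*f0, ... weighted by `amplitudes`."""
    return [
        sum(amp * math.sin(2 * math.pi * (h + 1) * f0 * n / SAMPLE_RATE)
            for h, amp in enumerate(amplitudes))
        for n in range(N)
    ]


def amplitude_at(signal, freq_hz):
    """Amplitude of the component at an integer frequency, from one DFT bin."""
    re = sum(x * math.cos(2 * math.pi * freq_hz * n / N) for n, x in enumerate(signal))
    im = sum(x * math.sin(2 * math.pi * freq_hz * n / N) for n, x in enumerate(signal))
    return 2 * math.hypot(re, im) / N


f0 = 220  # both tones share this fundamental, so both have the same pitch
tone_a = synthesize(f0, RECIPE_A)
tone_b = synthesize(f0, RECIPE_B)

# Print each harmonic's frequency and its measured amplitude in tone A and tone B.
for h in range(1, 4):
    print(h * f0, round(amplitude_at(tone_a, h * f0), 3), round(amplitude_at(tone_b, h * f0), 3))
```

Both tones repeat 220 times per second, so they have the same pitch; only the relative strengths of the 440 Hz and 660 Hz components differ, and that difference in spectrum is what the ear hears as a difference in timbre.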

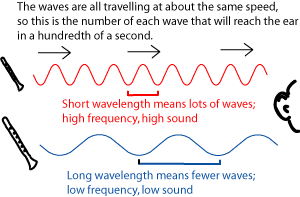

Frequency is the number of wave cycles per second, measured in hertz (Hz). The human hearing range spans approximately 20 Hz to 20,000 Hz. Because wavelength equals the speed of sound divided by frequency, higher frequencies correspond to shorter wavelengths and lower frequencies to longer wavelengths when the sound travels through the same medium.
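A short Python sketch of the frequency-wavelength relationship, wavelength = speed / frequency. The speed of sound used here (343 m/s, roughly dry air at room temperature) is an assumption, since the text does not specify a medium. At that speed, a 20 Hz tone has a wavelength of about 17 m and a 20,000 Hz tone about 1.7 cm.

```python
SPEED_OF_SOUND = 343.0  # m/s; assumed value for dry air at about 20 degrees C


def wavelength_m(frequency_hz, speed=SPEED_OF_SOUND):
    """Wavelength in metres, from speed = frequency * wavelength."""
    return speed / frequency_hz


# Edges of the human hearing range plus a mid-range reference tone.
for f in (20, 1_000, 20_000):
    print(f"{f:>6} Hz -> {wavelength_m(f):.4f} m")
```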

Effects of Intensity and Frequency

Sound intensity level is measured in decibels (dB), a logarithmic scale: each 10 dB increase represents a tenfold increase in intensity. A rock concert at ~120 dB is not just "a bit louder" than a normal conversation at ~60 dB. The 60 dB gap is six tenfold steps, so the concert is about 10^6, or a million, times more intense.
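The decibel arithmetic can be sketched in a few lines of Python. The reference intensity of 10^-12 W/m^2 is the conventional 0 dB reference, which the text does not state; the concert and conversation levels are the approximate figures from the paragraph.

```python
import math

I_REF = 1e-12  # W/m^2, the conventional reference intensity for 0 dB


def level_db(intensity_w_m2):
    """Sound intensity level in dB: 10 * log10(I / I_ref)."""
    return 10 * math.log10(intensity_w_m2 / I_REF)


def intensity_ratio(db_gap):
    """How many times more intense one sound is, given the difference in dB."""
    return 10 ** (db_gap / 10)


concert_db = 120       # approximate levels from the text
conversation_db = 60

ratio = intensity_ratio(concert_db - conversation_db)
print(f"{ratio:.0f} times more intense")  # about one million
print(intensity_ratio(10))                # a 10 dB step is a factor of 10
```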

Your ears are not equally sensitive at all frequencies. Human hearing is most sensitive between about 2,000 and 5,000 Hz. A sound at 3,000 Hz will be perceived as louder than a sound of equal intensity at 100 Hz. Equal-loudness contours (also called Fletcher-Munson curves) map out this relationship, showing what intensity is needed at each frequency to produce the same perceived loudness.
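The text does not give contour data, so the sketch below swaps in a related, standardized approximation: the A-weighting curve (its analytic form as specified in IEC 61672), which roughly follows the inverse of the 40-phon equal-loudness contour. It is not an equal-loudness contour itself, but it shows the same pattern: at equal intensity, a 3,000 Hz tone is weighted as far louder than a 100 Hz tone. The function name is hypothetical.

```python
import math


def a_weighting_db(f):
    """A-weighting gain in dB at frequency f (Hz), using the standard analytic
    form. Negative values mean the ear is less sensitive there than at 1 kHz;
    the +2.00 dB term normalizes the curve to about 0 dB at 1,000 Hz."""
    f2 = f * f
    numerator = (12194.0 ** 2) * f2 * f2
    denominator = ((f2 + 20.6 ** 2)
                   * math.sqrt((f2 + 107.7 ** 2) * (f2 + 737.9 ** 2))
                   * (f2 + 12194.0 ** 2))
    return 20 * math.log10(numerator / denominator) + 2.00


for f in (100, 1_000, 3_000):
    print(f"{f:>5} Hz: {a_weighting_db(f):+.1f} dB")
```

Expect a value near 0 dB at 1,000 Hz, roughly +1 dB at 3,000 Hz, and roughly -19 dB at 100 Hz, so a 100 Hz tone needs much more intensity to sound as loud.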

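Loudness level is measured in phons. A sound's loudness level in phons equals the decibel level of a 1,000 Hz tone (the reference frequency) that sounds equally loud, so at 1,000 Hz, 1 phon equals 1 dB. To double the perceived loudness of a sound, you typically need to raise its level by about 10 dB, even though an increase of only about 3 dB already doubles its physical intensity.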
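The "about 10 dB to double loudness" rule is usually expressed with a second unit, the sone, where 40 phons is defined as 1 sone and each additional 10 phons doubles the sone value (Stevens' scale). A minimal Python sketch, working at 1,000 Hz so that phons and dB coincide; the function name is hypothetical.

```python
def phon_to_sone(phon):
    """Loudness in sones from loudness level in phons, using the rule of thumb
    that 40 phons = 1 sone and each +10 phons doubles perceived loudness.
    Usually applied only above about 40 phons."""
    return 2 ** ((phon - 40) / 10)


# At 1,000 Hz, phons equal dB, so these levels can be read as dB at 1 kHz.
print(phon_to_sone(60) / phon_to_sone(50))           # 2.0   (+10 dB: about twice as loud)
print(round(10 ** (3 / 10), 3))                       # 1.995 (+3 dB: about twice the intensity)
print(round(phon_to_sone(53) / phon_to_sone(50), 3))  # 1.231 (+3 dB: only about 23% louder)
```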

Ear Structure and Function

How the Ear Converts Sound to Neural Signals

The ear has three main sections, each with a distinct job:

Outer ear: The pinna (the visible part) and ear canal collect sound waves and funnel them toward the eardrum.

Middle ear: The tympanic membrane (eardrum) vibrates when sound waves hit it. Those vibrations pass through three tiny bones called ossicles: the malleus, incus, and stapes. The ossicles transmit the vibrations to the inner ear and boost their pressure, mostly because the force collected by the large eardrum is concentrated onto the much smaller oval window of the cochlea, with the ossicles' lever action adding a little more. This boost is necessary because the vibrations must pass from air into the fluid-filled cochlea; without it, most of the sound energy would be reflected at the air-fluid boundary.

Inner ear: The cochlea is a fluid-filled, snail-shaped structure that contains the basilar membrane and thousands of hair cells.
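Why would most of the energy be reflected without the middle ear? A simplified physics sketch: for a plane wave hitting a flat boundary head-on, the fraction of intensity transmitted is T = 4·Z1·Z2 / (Z1 + Z2)^2, where Z is each medium's acoustic impedance. The impedance values below are approximate textbook figures, and treating cochlear fluid as plain water is an assumption.

```python
import math

Z_AIR = 415.0     # rayl (kg/(m^2*s)); approximate, air at room temperature (assumed)
Z_WATER = 1.48e6  # rayl; approximate, water (cochlear fluid assumed to behave like water)


def transmitted_fraction(z1, z2):
    """Fraction of incident intensity transmitted across a flat boundary
    between two media, for a plane wave at normal incidence."""
    return 4 * z1 * z2 / (z1 + z2) ** 2


t = transmitted_fraction(Z_AIR, Z_WATER)
print(f"transmitted: {t:.2%}  reflected: {1 - t:.2%}")
print(f"loss: {10 * math.log10(t):.1f} dB")
```

With these values, only about 0.1% of the intensity crosses directly from air into water (a loss of roughly 30 dB), which is the mismatch the middle ear's pressure boost helps overcome.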

Here's how the cochlea converts vibrations into signals your brain can read:

- Mechanical vibrations from the stapes push on the oval window of the cochlea, creating pressure waves in the cochlear fluid.

- These pressure waves cause the basilar membrane to vibrate. Different frequencies cause peak vibration at different locations along the membrane: high frequencies vibrate the base (near the oval window), and low frequencies vibrate the apex (the far end).

- The hair cells sitting on the basilar membrane have tiny hair bundles (stereocilia) that bend as the membrane vibrates.

- That bending opens ion channels in the hair cells, which then release neurotransmitters onto auditory nerve fibers, generating electrical signals.

- The auditory nerve carries those signals from the hair cells to the brain for processing.

This location-based frequency mapping is called tonotopic organization, and it's a major physical basis for how you perceive pitch.
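The place-to-frequency map along the basilar membrane is often modeled with Greenwood's function, F = A(10^(a·x) - k), where x is the fractional distance from the apex. The model and the parameter values below (commonly cited for the human cochlea) are not from the text above; treat this Python sketch as an illustration. With these values, the map runs from roughly 20 Hz at the apex to about 20,000 Hz at the base.

```python
import math

# Greenwood place-frequency function, with parameters commonly cited for humans.
# Position x is a fraction of cochlear length: 0 = apex, 1 = base.
GREENWOOD_A = 165.4  # Hz
GREENWOOD_ALPHA = 2.1
GREENWOOD_K = 0.88


def best_frequency_hz(x):
    """Frequency that produces peak vibration at fractional position x."""
    return GREENWOOD_A * (10 ** (GREENWOOD_ALPHA * x) - GREENWOOD_K)


def position_for(frequency_hz):
    """Inverse mapping: fractional distance from the apex for a given frequency."""
    return math.log10(frequency_hz / GREENWOOD_A + GREENWOOD_K) / GREENWOOD_ALPHA


print(f"apex: {best_frequency_hz(0.0):.0f} Hz, base: {best_frequency_hz(1.0):.0f} Hz")
for f in (100, 1_000, 10_000):
    print(f"{f:>6} Hz peaks about {position_for(f):.0%} of the way from apex to base")
```

Low frequencies land near the apex and high frequencies near the base, matching the description of the basilar membrane above.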

Sound Localization and Processing

Binaural hearing means using both ears together, and it's essential for figuring out where a sound is coming from. Your brain determines a sound's direction by comparing two things between your ears:

- Timing differences: A sound coming from your left reaches your left ear slightly before your right ear. Your brain detects delays as small as about 10 microseconds.

- Intensity differences: The sound is also slightly louder in the closer ear, partly because your head creates an "acoustic shadow" that reduces intensity on the far side. This effect is strongest for higher-frequency sounds.
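The timing cue can be estimated with Woodworth's spherical-head approximation, ITD = (r / c)(θ + sin θ), where θ is the source's angle from straight ahead (0 to 90 degrees). The head radius and speed of sound below are assumed typical values, not figures from the text, and the function name is hypothetical.

```python
import math

HEAD_RADIUS = 0.0875    # m, an assumed typical adult head radius
SPEED_OF_SOUND = 343.0  # m/s, assumed air at about 20 degrees C


def itd_seconds(azimuth_rad):
    """Interaural time difference for a distant source (Woodworth model).
    azimuth_rad = 0 is straight ahead; pi/2 is directly to one side."""
    return HEAD_RADIUS / SPEED_OF_SOUND * (azimuth_rad + math.sin(azimuth_rad))


for deg in (0, 1, 10, 45, 90):
    print(f"{deg:>2} deg -> {itd_seconds(math.radians(deg)) * 1e6:6.1f} microseconds")
```

With these values, the model gives roughly 650 microseconds for a source directly to one side and roughly 9 microseconds for a source 1 degree off center, which suggests why a threshold near 10 microseconds lets the brain separate directions only about a degree apart near straight ahead.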

The auditory cortex, located in the temporal lobe, is the brain region responsible for interpreting all of this information. It analyzes pitch, timbre, spatial location, and complex patterns like speech and music.