Lossy compression techniques are essential tools in digital imaging, balancing file size reduction with acceptable image quality. These methods exploit human visual perception limitations to achieve high compression ratios while maintaining visual appeal. Understanding various lossy compression types is crucial for optimizing image storage and transmission.

From JPEG to wavelet compression, lossy techniques offer diverse approaches to data reduction. Each method has unique strengths, such as JPEG's adjustable quality settings or fractal compression's resolution independence. Mastering these techniques empowers digital imaging professionals to make informed decisions about compression strategies.

Types of lossy compression

- Lossy compression techniques reduce file sizes by discarding some image data, balancing quality and storage efficiency

- These methods exploit limitations in human visual perception to achieve high compression ratios while maintaining acceptable image quality

- Understanding various lossy compression types is crucial for optimizing image storage and transmission in digital imaging applications

JPEG compression

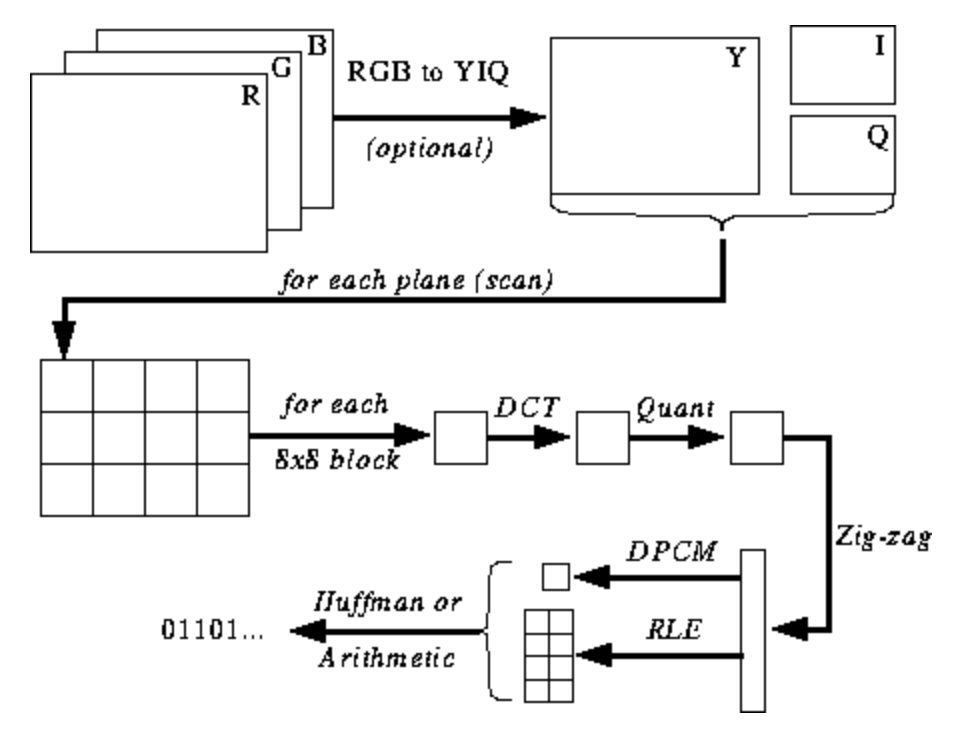

- Utilizes discrete cosine transform (DCT) to convert image data into frequency domain

- Divides image into 8x8 pixel blocks for processing

- Applies quantization to reduce precision of DCT coefficients

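- Entropy-codes the quantized coefficients losslessly: zigzag ordering and run-length coding of zeros, then Huffman coding (baseline JPEG; arithmetic coding is an optional alternative)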

- Allows adjustable quality settings to balance compression ratio and image fidelity
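
The entropy-coding front end in the list above can be sketched in a few lines of Python. This is a simplified illustration, not a conforming JPEG encoder: the function names are illustrative, the DC coefficient (which JPEG codes as a difference from the previous block's DC) is skipped, and the Huffman stage is omitted. It shows the zigzag scan, which orders coefficients roughly from low to high frequency, and JPEG-style (zero_run, value) symbols, including the special symbols for a run of 16 zeros (ZRL) and end-of-block (EOB).

```python
def zigzag_order(n=8):
    """(row, col) positions of an n x n block in JPEG zigzag scan order."""
    order = []
    for s in range(2 * n - 1):  # s = row + col indexes one anti-diagonal
        diagonal = [(r, s - r) for r in range(n) if 0 <= s - r < n]
        # Even diagonals run bottom-left to top-right, odd ones the other way
        order.extend(reversed(diagonal) if s % 2 == 0 else diagonal)
    return order


def ac_run_length(block):
    """(zero_run, value) symbols for the 63 AC coefficients of a quantized block.

    (15, 0) stands for a run of 16 zeros (JPEG's ZRL) and (0, 0) for end-of-block (EOB).
    The DC term and the Huffman coding of these symbols are left out.
    """
    coeffs = [block[r][c] for r, c in zigzag_order(len(block))][1:]  # drop DC
    last = max((i for i, v in enumerate(coeffs) if v != 0), default=-1)
    symbols, run = [], 0
    for v in coeffs[:last + 1]:
        if v == 0:
            run += 1
            continue
        while run > 15:
            symbols.append((15, 0))
            run -= 16
        symbols.append((run, v))
        run = 0
    if last < len(coeffs) - 1:
        symbols.append((0, 0))  # trailing zeros collapse into one EOB
    return symbols


# A typical quantized block: a few low-frequency values, zeros everywhere else
blk = [[0] * 8 for _ in range(8)]
blk[0][0], blk[0][1], blk[1][0], blk[2][0] = 50, -3, 2, 1
print(ac_run_length(blk))  # prints: [(0, -3), (0, 2), (0, 1), (0, 0)]
```

Because quantization zeroes most high-frequency coefficients, the zigzag scan pushes them to the end of the sequence, where a single EOB symbol replaces them.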

Fractal compression

- Based on the principle of self-similarity within images

- Represents the image as a partitioned iterated function system (IFS): a set of contractive transformations that map larger domain blocks onto smaller range blocks

- Achieves high compression ratios for certain types of natural images

- Requires significant computational power for encoding process

- Offers fast decompression and resolution independence
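
Fractal coding is hard to show compactly in 2D, so the following is a deliberately simplified 1D analogue, an assumption for illustration rather than a production scheme. For each range block of length `r`, the encoder searches domain blocks of length `2r`, shrinks each by averaging pairs, and fits a contrast `s` and brightness `o` by least squares; only `(domain start, s, o)` is stored. Decoding iterates these maps from an arbitrary starting signal, and because `|s|` is clamped below 1 the iteration converges to the same result regardless of where it starts. Real fractal coders work on 2D blocks, also try rotations and reflections of each domain block, and quantize `s` and `o`. The signal length is assumed to be a multiple of `r` and at least `2r`; all function names are hypothetical.

```python
def downsample(seg):
    """Average neighbouring pairs so a domain block matches the range-block length."""
    return [(seg[i] + seg[i + 1]) / 2 for i in range(0, len(seg), 2)]


def fit(domain, target):
    """Least-squares contrast s and brightness o so that s*domain + o approximates target."""
    n = len(target)
    md, mt = sum(domain) / n, sum(target) / n
    var = sum((d - md) ** 2 for d in domain)
    cov = sum((d - md) * (t - mt) for d, t in zip(domain, target))
    s = cov / var if var else 0.0
    s = max(-0.9, min(0.9, s))  # keep |s| < 1 so decoding is a contraction
    o = mt - s * md
    err = sum((s * d + o - t) ** 2 for d, t in zip(domain, target))
    return s, o, err


def fractal_encode(signal, r=4):
    """Store (domain start, s, o) for each length-r range block."""
    code = []
    for start in range(0, len(signal), r):
        target = signal[start:start + r]
        best = None
        for d in range(0, len(signal) - 2 * r + 1, r):  # domain blocks are 2r long
            s, o, err = fit(downsample(signal[d:d + 2 * r]), target)
            if best is None or err < best[3]:
                best = (d, s, o, err)
        code.append(best[:3])
    return code


def fractal_decode(code, length, r=4, iterations=50, start=None):
    """Apply the stored maps repeatedly, starting from any signal of the right length."""
    x = list(start) if start is not None else [0.0] * length
    for _ in range(iterations):
        x = [s * v + o for d, s, o in code for v in downsample(x[d:d + 2 * r])]
    return x


sig = [float((i * 5) % 17) for i in range(32)]
code = fractal_encode(sig)
from_zeros = fractal_decode(code, 32, iterations=100)
from_ones = fractal_decode(code, 32, iterations=100, start=[1.0] * 32)
```

The two decodes agree to within a tiny tolerance, which is the contraction property the decoder relies on. The expensive part is the nested search in `fractal_encode`, which is why encoding is slow while decoding is cheap.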

Wavelet compression

- Decomposes the image into frequency subbands (a coarse approximation plus horizontal, vertical, and diagonal detail coefficients) using the discrete wavelet transform (DWT)

- Provides multi-resolution analysis of image data

- Allows for progressive transmission and decoding of images

- Avoids JPEG's blocking artifacts and typically preserves edge detail better at high compression ratios

- Used in JPEG 2000 standard for improved compression performance
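
The Haar wavelet is the simplest DWT and is enough to show the multi-resolution idea. The sketch below uses its unnormalized averaging form in 1D for readability; a 2D image transform would apply it along rows and then columns. It is an illustration, not what JPEG 2000 does: JPEG 2000 uses the CDF 9/7 (lossy) and 5/3 (reversible) wavelets. Zeroing small detail coefficients stands in for the lossy step; function names are illustrative.

```python
def haar_forward(x, levels):
    """Multi-level 1D Haar transform (averaging form); len(x) must be divisible by 2**levels."""
    approx, details = list(x), []
    for _ in range(levels):
        pairs = list(zip(approx[0::2], approx[1::2]))
        details.append([(a - b) / 2 for a, b in pairs])  # high-pass: half-differences
        approx = [(a + b) / 2 for a, b in pairs]         # low-pass: averages
    return approx, details[::-1]  # detail bands ordered coarse to fine


def haar_inverse(approx, details):
    """Undo haar_forward: each (average, difference) pair becomes (a + d, a - d)."""
    x = list(approx)
    for band in details:
        x = [v for a, d in zip(x, band) for v in (a + d, a - d)]
    return x


def threshold(details, t):
    """Lossy step: zero every detail coefficient smaller than t in magnitude."""
    return [[0.0 if abs(c) < t else c for c in band] for band in details]


signal = [10, 12, 14, 16, 50, 52, 54, 56]
approx, details = haar_forward(signal, 3)
coarse = haar_inverse(approx, threshold(details, 1.5))
print(coarse)  # prints: [11.0, 11.0, 15.0, 15.0, 51.0, 51.0, 55.0, 55.0]
```

The large step between 16 and 50 survives thresholding because it lives in a large coarse-scale coefficient, while the small finest-scale differences are discarded. Reconstructing from only the approximation and the first few detail bands yields a lower-resolution preview, which is the basis of progressive decoding.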

Lossy compression principles

- Lossy compression techniques aim to reduce file sizes while maintaining perceptual quality

- These methods exploit redundancies and limitations in human visual perception

- Understanding these principles is essential for developing efficient image compression algorithms

Data reduction techniques

- Downsampling reduces image resolution by decreasing pixel count

- Chroma subsampling exploits lower sensitivity to color information

- Transform coding converts image data to frequency domain for efficient representation

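- Run-length encoding (itself lossless) compresses runs of identical values, such as the long runs of zeros left after quantization

- Delta encoding stores differences between adjacent pixel values, which are usually small in smooth regions and therefore cheaper to code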
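
Delta and run-length encoding are both lossless and can be sketched in a few lines of Python; the function names are illustrative. The example shows how delta encoding turns a smooth ramp of pixel values into small, repeated numbers that run-length encoding can then exploit.

```python
def delta_encode(values):
    """Keep the first value, then store each value's difference from its predecessor."""
    return values[:1] + [b - a for a, b in zip(values, values[1:])]


def delta_decode(deltas):
    """Rebuild the original values by running-summing the differences."""
    out = []
    for d in deltas:
        out.append(d if not out else out[-1] + d)
    return out


def rle_encode(values):
    """Collapse runs of identical values into (value, count) pairs."""
    runs = []
    for v in values:
        if runs and runs[-1][0] == v:
            runs[-1][1] += 1
        else:
            runs.append([v, 1])
    return [tuple(r) for r in runs]


def rle_decode(runs):
    return [v for v, n in runs for _ in range(n)]


row = [100, 101, 102, 103, 103, 103, 103, 110]
print(rle_encode(row))                # prints: [(100, 1), (101, 1), (102, 1), (103, 4), (110, 1)]
print(rle_encode(delta_encode(row)))  # prints: [(100, 1), (1, 3), (0, 3), (7, 1)]
```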

Perceptual coding strategies

- Exploit limitations of human visual system to discard imperceptible information

- Utilize psychovisual models to determine which data can be safely discarded

- Apply stronger compression to high-frequency components, which are less noticeable to the human eye

- Preserve low-frequency information crucial for overall image structure

- Adapt compression based on local image characteristics (texture, edges)

Quantization methods

- Reduce precision of pixel or coefficient values to decrease data size

- Scalar quantization applies uniform or non-uniform quantization to individual values

- Vector quantization groups similar pixel patterns into representative codewords

- Adaptive quantization adjusts quantization levels based on local image properties

- Perceptual quantization matrices optimize quantization for human visual sensitivity
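
Uniform scalar quantization is the simplest case in the list above and is where the information loss actually happens. A minimal sketch, with illustrative names: dividing by a step size and rounding maps each value to a small integer index, and the decoder multiplies back. The reconstruction error never exceeds half the step (Python's `round` rounds exact halves to even, which does not affect that bound); a larger step means fewer distinct indices and more error.

```python
def quantize(values, step):
    """Uniform mid-tread scalar quantizer: each value becomes an integer index."""
    return [round(v / step) for v in values]


def dequantize(indices, step):
    """Decoder side: map each index back to the centre of its quantization interval."""
    return [i * step for i in indices]


values = [0.4, 3.7, -2.6, 12.0, 25.1]
indices = quantize(values, 4)        # small integers are cheaper to entropy-code
restored = dequantize(indices, 4)
print(indices)   # prints: [0, 1, -1, 3, 6]
print(restored)  # prints: [0, 4, -4, 12, 24]
```

Adaptive and perceptual quantization keep this same mechanism but vary `step` per region or per frequency.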

Image quality vs file size

- Balancing image quality and file size is a crucial aspect of lossy compression

- Understanding the relationship between compression ratio and visual artifacts helps optimize compression settings

- Evaluating this trade-off is essential for various applications in digital imaging and data transmission

Compression ratio considerations

- Higher compression ratios result in smaller file sizes but potentially lower image quality

- Compression ratio is the original file size divided by the compressed file size (at 10:1, the compressed file is one tenth of the original)

- Typical JPEG compression ratios range from 10:1 to 20:1 for acceptable quality

- Very high JPEG compression ratios (roughly 50:1 and above) usually cause severe, clearly visible degradation

- Optimal compression ratio depends on image content and intended use
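
The ratio arithmetic is easy to get wrong when bit depth and channel count are implicit, so here is a small sketch that also reports bits per pixel, the rate unit used in rate-distortion comparisons. The 400,000-byte file size is a hypothetical example, not a measured result.

```python
def compression_stats(width, height, channels, bits_per_channel, compressed_bytes):
    """Return (compression ratio, bits per pixel) for an uncompressed-vs-compressed pair."""
    original_bytes = width * height * channels * bits_per_channel / 8
    ratio = original_bytes / compressed_bytes
    bits_per_pixel = compressed_bytes * 8 / (width * height)
    return ratio, bits_per_pixel


# Hypothetical example: a 1920x1080, 8-bit RGB photo saved as a 400,000-byte JPEG
ratio, bpp = compression_stats(1920, 1080, 3, 8, 400_000)
print(f"{ratio:.1f}:1 at {bpp:.2f} bits per pixel")  # prints: 15.6:1 at 1.54 bits per pixel
```

For 24-bit images the two measures are linked by ratio = 24 / bpp, so the 10:1 to 20:1 range quoted above corresponds to roughly 2.4 down to 1.2 bits per pixel.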

Visual artifacts in compression

- Blocking artifacts appear as visible boundaries between 8x8 pixel blocks in JPEG

- Ringing artifacts manifest as halos around sharp edges due to quantization

- Blurring occurs due to loss of high-frequency details during compression

- Color banding becomes visible in gradients when color depth is reduced

- Mosquito noise appears as fluctuations in pixel values near high-contrast edges

Optimal compression settings

- Adjust quality factor in JPEG to balance file size and visual quality

- Consider image content (smooth areas vs. detailed textures) when selecting settings

- Use higher quality settings for images with text or fine details

- Lower quality settings are often acceptable for busy natural scenes, where fine texture masks artifacts; smooth gradients such as skies reveal blocking and banding sooner

- Experiment with different settings to find optimal balance for specific use cases
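
What a JPEG "quality factor" actually changes is the quantization table. The sketch below follows the convention used by the IJG libjpeg library, which scales the example luminance table from Annex K of the JPEG standard; other encoders map quality to tables differently, so treat the exact numbers as libjpeg-specific. The function name is illustrative.

```python
# Luminance quantization table from Annex K of ITU-T T.81 (JPEG), row-major order.
STD_LUMA = [
    [16, 11, 10, 16, 24, 40, 51, 61],
    [12, 12, 14, 19, 26, 58, 60, 55],
    [14, 13, 16, 24, 40, 57, 69, 56],
    [14, 17, 22, 29, 51, 87, 80, 62],
    [18, 22, 37, 56, 68, 109, 103, 77],
    [24, 35, 55, 64, 81, 104, 113, 92],
    [49, 64, 78, 87, 103, 121, 120, 101],
    [72, 92, 95, 98, 112, 100, 103, 99],
]


def scale_table(quality, base=STD_LUMA):
    """Scale a base table from a 1-100 quality setting, following libjpeg's convention."""
    quality = max(1, min(100, quality))
    scale = 5000 // quality if quality < 50 else 200 - 2 * quality
    # Round, then clamp to 1..255 so every entry fits a baseline (8-bit) table
    return [[max(1, min(255, (q * scale + 50) // 100)) for q in row] for row in base]


for q in (10, 50, 75, 95):
    print(q, scale_table(q)[0][:4])  # first four entries of the top row
```

Quality 50 reproduces the base table, higher settings shrink every step (100 makes them all 1), and lower settings enlarge them until the highest frequencies saturate at 255. The table's own growth toward the bottom right is why high-frequency detail is lost first.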

Lossy compression algorithms

- Lossy compression algorithms form the core of many image and video compression techniques

- These algorithms exploit various mathematical transformations and coding strategies

- Understanding these algorithms is crucial for developing and optimizing compression systems

Discrete cosine transform

- Converts spatial domain image data into frequency domain representation

- Concentrates image energy into a few significant coefficients

- Allows efficient compression by discarding less important high-frequency components

- Used in JPEG compression for 8x8 pixel blocks

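- DCT formula (forward 8x8 DCT-II as used in JPEG, for block samples f(x,y) and coefficients F(u,v)):

  $$F(u,v) = \frac{1}{4}\,C(u)\,C(v)\sum_{x=0}^{7}\sum_{y=0}^{7} f(x,y)\cos\frac{(2x+1)u\pi}{16}\cos\frac{(2y+1)v\pi}{16}, \qquad C(k) = \begin{cases} 1/\sqrt{2} & k = 0 \\ 1 & k > 0 \end{cases}$$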
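
A direct transcription of the 8x8 forward DCT-II used in JPEG, F(u,v) = 1/4 C(u) C(v) sum over x, y of f(x,y) cos((2x+1)u pi/16) cos((2y+1)v pi/16) with C(0) = 1/sqrt(2) and C(k) = 1 otherwise, together with its inverse, makes the energy-compaction claim concrete. This O(N^4) version is for clarity only; real codecs use fast factorizations, and JPEG also subtracts 128 from 8-bit samples before the transform, which is skipped here. Function names are illustrative.

```python
import math

N = 8


def c(k):
    """Normalisation factor: 1/sqrt(2) for the zero-frequency term, 1 otherwise."""
    return 1 / math.sqrt(2) if k == 0 else 1.0


def dct2(block):
    """Forward 8x8 DCT-II; block[x][y] holds samples, result[u][v] holds coefficients."""
    return [[0.25 * c(u) * c(v) * sum(
                block[x][y]
                * math.cos((2 * x + 1) * u * math.pi / 16)
                * math.cos((2 * y + 1) * v * math.pi / 16)
                for x in range(N) for y in range(N))
             for v in range(N)] for u in range(N)]


def idct2(coeffs):
    """Inverse 8x8 DCT: rebuilds block[x][y] from coeffs[u][v]."""
    return [[0.25 * sum(
                c(u) * c(v) * coeffs[u][v]
                * math.cos((2 * x + 1) * u * math.pi / 16)
                * math.cos((2 * y + 1) * v * math.pi / 16)
                for u in range(N) for v in range(N))
             for y in range(N)] for x in range(N)]


# A smooth block (gentle ramp), typical of low-detail image regions
block = [[100 + 4 * x + 2 * y for y in range(N)] for x in range(N)]
F = dct2(block)
total = sum(v * v for row in F for v in row)
low = sum(F[u][v] ** 2 for u in range(2) for v in range(2))
print(f"share of energy in the 4 lowest-frequency coefficients: {low / total:.5f}")
```

Because this normalisation is orthonormal, total energy is preserved, yet almost all of it lands in the lowest-frequency coefficients; the remaining coefficients are tiny and quantize to zero, which is exactly what makes discarding high frequencies cheap.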

Vector quantization

- Divides image into small blocks (vectors) and maps them to a codebook

- Codebook contains representative vectors for common image patterns

- Achieves compression by storing codebook indices instead of actual pixel values

- Offers high compression ratios for images with repetitive patterns

- Requires careful codebook design to maintain image quality
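
Codebook design is usually done with k-means style (generalised Lloyd) iterations, which are the core of the LBG algorithm. The sketch below is a simplified assumption: it initialises the codebook by random sampling instead of LBG's codeword splitting, and it treats each 2x2 pixel block as a 4-element vector. Encoding stores only the index of the nearest codeword; decoding is a table lookup. All names are illustrative.

```python
import random


def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def nearest(vec, codebook):
    """Index of the codeword closest to vec (squared Euclidean distance)."""
    return min(range(len(codebook)), key=lambda i: sq_dist(vec, codebook[i]))


def train_codebook(vectors, size, iterations=10, seed=0):
    """Generalised Lloyd iterations: assign vectors, then move codewords to cluster means."""
    rng = random.Random(seed)
    codebook = [list(v) for v in rng.sample(vectors, size)]
    for _ in range(iterations):
        clusters = [[] for _ in range(size)]
        for v in vectors:
            clusters[nearest(v, codebook)].append(v)
        for i, members in enumerate(clusters):
            if members:  # keep the old codeword if its cluster is empty
                codebook[i] = [sum(col) / len(members) for col in zip(*members)]
    return codebook


def vq_encode(vectors, codebook):
    return [nearest(v, codebook) for v in vectors]


def vq_decode(indices, codebook):
    return [codebook[i] for i in indices]


# 2x2 blocks flattened to 4-tuples: dark patches and bright patches
dark = [(10, 12, 11, 9), (11, 10, 12, 10)]
bright = [(200, 205, 198, 202), (201, 199, 203, 200)]
vectors = (dark + bright) * 5
cb = train_codebook(vectors, 2)
idx = vq_encode(vectors, cb)
rec = vq_decode(idx, cb)
```

With a 2-entry codebook each 4-pixel block is stored as a single bit, a large reduction from 32 bits of 8-bit pixels, at the cost of small per-pixel errors; real codebooks hold hundreds of entries.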

Chroma subsampling

- Reduces color information while maintaining full luminance resolution

- Exploits human eye's lower sensitivity to color variations compared to brightness

- Common subsampling ratios include 4:2:2 and 4:2:0

- 4:2:2 subsampling reduces horizontal color resolution by half

- 4:2:0 subsampling reduces both horizontal and vertical color resolution by half
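
A minimal sketch of 4:2:2 and 4:2:0 subsampling on a chroma plane, plus the resulting sample count per pixel. It assumes even plane dimensions and uses plain averaging and nearest-neighbour upsampling; actual codecs differ in filters and in where chroma samples are sited. Function names are illustrative.

```python
def subsample_422(plane):
    """Halve horizontal chroma resolution by averaging horizontal pairs."""
    return [[(row[x] + row[x + 1]) / 2 for x in range(0, len(row), 2)] for row in plane]


def subsample_420(plane):
    """Halve both horizontal and vertical chroma resolution by averaging 2x2 neighbourhoods."""
    return [[(plane[y][x] + plane[y][x + 1] + plane[y + 1][x] + plane[y + 1][x + 1]) / 4
             for x in range(0, len(plane[0]), 2)]
            for y in range(0, len(plane), 2)]


def upsample_420(plane):
    """Nearest-neighbour reconstruction back to full resolution."""
    out = []
    for row in plane:
        wide = [v for v in row for _ in range(2)]
        out.extend([wide, list(wide)])
    return out


def samples_per_pixel(scheme):
    """Full-resolution Y plus two chroma planes at reduced resolution."""
    chroma_fraction = {"4:4:4": 1.0, "4:2:2": 0.5, "4:2:0": 0.25}[scheme]
    return 1 + 2 * chroma_fraction


cb = [[10, 20, 30, 40], [30, 40, 50, 60]]
print(subsample_420(cb))  # prints: [[25.0, 45.0]]
for s in ("4:4:4", "4:2:2", "4:2:0"):
    print(s, samples_per_pixel(s))  # 3.0, 2.0, 1.5
```

So 4:2:0 removes half of the raw samples before any other compression step, while the luminance plane that carries most perceived detail is untouched.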

Applications of lossy compression

- Lossy compression techniques find widespread use in various digital imaging applications

- These methods enable efficient storage and transmission of visual data

- Understanding the applications helps in selecting appropriate compression strategies for different scenarios

Web graphics optimization

- Reduces image file sizes for faster webpage loading

- Balances visual quality with bandwidth considerations

- Employs techniques like JPEG compression for photographs

- Uses lossless PNG for graphics that need transparency or crisp edges (WebP and AVIF also support transparency alongside lossy compression)

- Implements responsive image techniques for different device resolutions

Digital photography storage

- Allows storage of more images on limited-capacity memory cards

- Enables efficient backup and archiving of large photo collections

- Utilizes JPEG compression for consumer-grade cameras

- Employs RAW formats for professional photography with post-processing flexibility

- Some cloud photo services recompress uploaded images with lossy codecs to reduce storage costs

Video streaming compression

- Enables real-time transmission of video content over limited bandwidth

- Utilizes inter-frame compression to exploit temporal redundancy

- Implements adaptive bitrate streaming for varying network conditions

- Employs codecs like H.264/AVC and H.265/HEVC for efficient compression

- Balances video quality with data usage for mobile streaming applications

Psychovisual optimization

- Psychovisual optimization techniques leverage the characteristics of human visual perception

- These methods aim to maximize perceived image quality while minimizing data size

- Understanding psychovisual principles is crucial for developing effective lossy compression algorithms

Human visual system limitations

- Exploits lower sensitivity to high-frequency spatial information

- Utilizes varying sensitivity to different color channels

- Leverages masking effects where strong signals hide weaker ones

- Considers contrast sensitivity function for different spatial frequencies

- Accounts for temporal masking in video compression
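
The contrast sensitivity function can be illustrated with one classic analytic model, the Mannos and Sakrison (1974) fit, used here as an example rather than as the model any particular codec uses. It gives relative sensitivity as a function of spatial frequency in cycles per degree; the sketch locates its peak numerically.

```python
import math


def csf_mannos_sakrison(f):
    """Relative contrast sensitivity at spatial frequency f in cycles per degree."""
    return 2.6 * (0.0192 + 0.114 * f) * math.exp(-((0.114 * f) ** 1.1))


# Locate the peak on a 0.1 cycle/degree grid
peak = max((k / 10 for k in range(1, 601)), key=csf_mannos_sakrison)
print(f"peak sensitivity near {peak:.1f} cycles/degree")
for f in (1, 8, 30):
    print(f, round(csf_mannos_sakrison(f), 3))
```

Sensitivity peaks at a mid frequency (near 8 cycles per degree in this model) and falls off steeply above it. A perceptual coder could make quantization steps roughly inversely proportional to this curve; mapping a DCT or wavelet index to cycles per degree requires assumptions about viewing distance and display resolution that this sketch does not model.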

Color space considerations

- Converts RGB color space to YCbCr for more efficient compression

- Separates luminance (Y) from chrominance (Cb, Cr) components

- Allows for independent processing and compression of color channels

- Utilizes approximately perceptually uniform color spaces such as CIELAB, where distances track perceived color differences, to measure and limit visible color error

- Implements gamut mapping to handle color space conversions
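
The RGB to YCbCr step can be written out directly. This sketch uses the full-range BT.601 coefficients specified by JFIF for 8-bit JPEG files; video systems often use limited-range or BT.709 variants instead, so the constants are specific to this convention. Function names are illustrative.

```python
def rgb_to_ycbcr(r, g, b):
    """Full-range BT.601 conversion as used by JFIF (8-bit inputs)."""
    y = 0.299 * r + 0.587 * g + 0.114 * b           # luminance, weighted toward green
    cb = 128 - 0.168736 * r - 0.331264 * g + 0.5 * b  # blue-difference chroma
    cr = 128 + 0.5 * r - 0.418688 * g - 0.081312 * b  # red-difference chroma
    return y, cb, cr


def ycbcr_to_rgb(y, cb, cr):
    """Inverse JFIF conversion."""
    r = y + 1.402 * (cr - 128)
    g = y - 0.344136 * (cb - 128) - 0.714136 * (cr - 128)
    b = y + 1.772 * (cb - 128)
    return r, g, b


print(rgb_to_ycbcr(255, 255, 255))  # approximately (255, 128, 128): neutral colors have centred chroma
```

Any gray (R = G = B) maps to Cb = Cr = 128, so the chroma planes of typical photos are smooth and low in energy, which is what makes subsampling and coarse chroma quantization cheap.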

Perceptual redundancy removal

- Eliminates imperceptible details based on human visual system models

- Applies stronger compression to high-frequency components less noticeable to the eye

- Utilizes just-noticeable difference (JND) thresholds for quantization

- Implements perceptual quantization matrices in transform-based compression

- Adapts compression strength based on local image characteristics (texture, edges)

Lossy vs lossless compression

- Comparing lossy and lossless compression techniques is essential for choosing appropriate methods

- Understanding the trade-offs helps in selecting the right approach for different applications

- Evaluating the strengths and limitations of each method informs compression strategy decisions

Trade-offs in image quality

- Lossy compression achieves higher compression ratios at the cost of some quality loss

- Lossless compression preserves exact original data but offers limited file size reduction

- Lossy methods introduce artifacts that may be visually imperceptible at lower compression levels

- Lossless compression maintains perfect fidelity for critical applications (medical imaging)

- Hybrid approaches combine lossy and lossless techniques for optimized compression

Compression efficiency comparison

- Lossy compression typically achieves 10:1 to 50:1 compression ratios for images

- Lossless compression is usually limited to about 2:1 to 3:1 for natural images

- Lossy methods offer significantly smaller file sizes for similar perceptual quality

- Lossless compression is more effective for synthetic images with large uniform areas

- Compression efficiency varies depending on image content and complexity
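
The dependence on content is easy to demonstrate with Python's standard `zlib` module, which implements DEFLATE, the lossless method PNG builds on. The two test images are assumptions for illustration: flat color bands stand in for synthetic graphics, and uniform random bytes stand in for fine texture and sensor noise. Random noise is a worst case; real photographs land in between, especially once a codec adds prediction.

```python
import random
import zlib


def deflate_ratio(data: bytes) -> float:
    """Original size divided by DEFLATE-compressed size (lossless)."""
    return len(data) / len(zlib.compress(data, 9))


width, height = 256, 256
# Synthetic graphic: four flat grayscale bands, like a simple diagram or logo
synthetic = bytes((x // 64) * 60 for _ in range(height) for x in range(width))
# Noise-like content: every byte independent and uniformly random
rng = random.Random(0)
noisy = bytes(rng.randrange(256) for _ in range(width * height))

print(f"synthetic: {deflate_ratio(synthetic):.0f}:1")
print(f"noisy:     {deflate_ratio(noisy):.2f}:1")
```

The flat-band image shrinks by well over an order of magnitude, while the noise barely compresses at all, because lossless coders can only remove redundancy that is actually present.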

Use cases for each approach

- Lossy compression suitable for web graphics, digital photography, and video streaming

- Lossless compression essential for medical imaging, scientific data, and archival purposes

- Lossy methods preferred for consumer applications prioritizing storage efficiency

- Lossless compression crucial for professional workflows requiring multiple edits

- Hybrid approaches used in digital cameras offering both RAW and JPEG formats

Compression standards and formats

- Compression standards and formats provide guidelines for implementing and using compression techniques

- These standards ensure interoperability between different software and hardware systems

- Understanding various standards is crucial for developing compatible imaging applications

JPEG standard overview

- Developed by the Joint Photographic Experts Group and published as ITU-T Recommendation T.81 in 1992 (also published as ISO/IEC 10918-1)

- Widely used for compressing still images in digital photography and web graphics

- Employs discrete cosine transform (DCT) and Huffman coding

- Supports both lossy and lossless compression modes

- Offers adjustable quality settings for balancing compression and image fidelity

MPEG compression for video

- Developed by Moving Picture Experts Group for video compression

- Utilizes inter-frame compression to exploit temporal redundancy

- Implements motion estimation and compensation techniques

- Includes standards such as MPEG-2, MPEG-4 Part 2, and H.264/AVC (MPEG-4 Part 10, developed jointly with ITU-T)

- Supports scalable video coding for adaptive streaming applications
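
Motion estimation can be illustrated with exhaustive block matching: for a block in the current frame, test every displacement within a search window in the reference frame and keep the one with the smallest sum of absolute differences (SAD). This is a minimal sketch with illustrative names; production encoders use much faster search patterns, sub-pixel refinement, and rate-aware costs.

```python
def sad(cur, ref, cx, cy, rx, ry, n):
    """Sum of absolute differences between an n x n block in cur and one in ref."""
    return sum(abs(cur[cy + j][cx + i] - ref[ry + j][rx + i])
               for j in range(n) for i in range(n))


def full_search(cur, ref, cx, cy, n=4, radius=3):
    """Best motion vector (dx, dy, cost) for the block at (cx, cy), searching +/- radius."""
    h, w = len(ref), len(ref[0])
    best = (0, 0, sad(cur, ref, cx, cy, cx, cy, n))
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            rx, ry = cx + dx, cy + dy
            if 0 <= rx <= w - n and 0 <= ry <= h - n:  # stay inside the reference frame
                cost = sad(cur, ref, cx, cy, rx, ry, n)
                if cost < best[2]:
                    best = (dx, dy, cost)
    return best


def pattern(x, y):
    """Synthetic texture so every block is distinguishable."""
    return (7 * x + 13 * y) % 31


# Frames indexed [y][x]; the current frame is the reference moved 2 right and 1 down
ref = [[pattern(x, y) for x in range(16)] for y in range(16)]
cur = [[pattern(x - 2, y - 1) for x in range(16)] for y in range(16)]
print(full_search(cur, ref, 6, 6))  # prints: (-2, -1, 0)
```

The vector points back to where the content came from in the reference frame. An encoder then transmits this vector plus the (here zero) prediction residual instead of the block's pixels, which is how inter-frame coding exploits temporal redundancy.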

WebP and next-gen formats

- WebP developed by Google as an alternative to JPEG and PNG

- Offers both lossy and lossless compression modes

- Typically produces smaller files than JPEG at similar visual quality

- AVIF based on AV1 video codec, offering high compression ratios

- JPEG XL designed as a potential successor to JPEG with improved efficiency

Evaluating compression performance

- Evaluating compression performance is crucial for optimizing and comparing different compression techniques

- These evaluation methods help in assessing the trade-offs between compression ratio and image quality

- Understanding various metrics and assessment techniques is essential for developing effective compression algorithms

Objective quality metrics

- Peak Signal-to-Noise Ratio (PSNR) measures pixel-level differences between original and compressed images

- Structural Similarity Index (SSIM) compares local luminance, contrast, and structure between the original and compressed images

- Multi-Scale Structural Similarity Index (MS-SSIM) extends SSIM to multiple image scales

- Visual Information Fidelity (VIF) quantifies information shared between reference and distorted images

- PSNR-HVS incorporates human visual system characteristics into PSNR calculation
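
PSNR and SSIM are short enough to write out. PSNR below follows the usual definition, 10 log10(MAX^2 / MSE). The SSIM function is a simplification: it evaluates the SSIM formula once over the whole signal, whereas standard SSIM computes it in local windows (commonly an 11x11 Gaussian window) and averages the results. Constants use the customary K1 = 0.01 and K2 = 0.03; images are flat lists of pixel values, and the names are illustrative.

```python
import math


def psnr(ref, test, peak=255.0):
    """Peak signal-to-noise ratio in dB; infinite for identical inputs."""
    mse = sum((a - b) ** 2 for a, b in zip(ref, test)) / len(ref)
    return math.inf if mse == 0 else 10 * math.log10(peak ** 2 / mse)


def global_ssim(ref, test, peak=255.0):
    """SSIM formula applied to a single window covering the whole signal (simplified)."""
    n = len(ref)
    mx, my = sum(ref) / n, sum(test) / n
    vx = sum((a - mx) ** 2 for a in ref) / n
    vy = sum((b - my) ** 2 for b in test) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(ref, test)) / n
    c1, c2 = (0.01 * peak) ** 2, (0.03 * peak) ** 2
    return ((2 * mx * my + c1) * (2 * cov + c2)) / ((mx ** 2 + my ** 2 + c1) * (vx + vy + c2))


x = [float(v) for v in range(0, 256, 4)]   # a 64-pixel gradient
shifted = [v + 2 for v in x]               # small uniform brightness change
flat = [sum(x) / len(x)] * len(x)          # all structure removed

print(round(psnr(x, shifted), 2), round(global_ssim(x, shifted), 4))
print(round(psnr(x, flat), 2), round(global_ssim(x, flat), 4))
```

The brightness shift keeps SSIM close to 1 because structure and contrast are intact, while flattening the gradient drives SSIM toward 0. PSNR only sees the size of the pixel errors, which is why structure-aware metrics often track perceived quality better.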

Subjective assessment methods

- Mean Opinion Score (MOS) involves human raters scoring image quality on a predefined scale

- Paired comparison tests present two images side-by-side for quality comparison

- Double-stimulus continuous quality scale (DSCQS) uses reference and test images for evaluation

- Single-stimulus continuous quality evaluation (SSCQE) assesses quality without reference images

- Just Noticeable Difference (JND) tests determine the threshold at which quality differences become perceptible

Compression benchmarking techniques

- Rate-distortion curves plot image quality against compression ratio or bit rate

- Bjøntegaard Delta (BD) rate estimates the average bit-rate difference between two codecs at equal quality, computed from their rate-distortion curves

- Compression speed and computational complexity evaluations assess algorithm performance

- Cross-platform compatibility testing ensures consistent results across different systems

- Large image datasets are used for comprehensive evaluation of compression algorithms

Challenges in lossy compression

- Lossy compression techniques face various challenges in maintaining image quality while achieving high compression ratios

- Addressing these challenges is crucial for developing more effective compression algorithms

- Understanding these issues helps in optimizing compression techniques for different applications

Edge preservation issues

- Sharp edges tend to blur or exhibit ringing artifacts during compression

- High-frequency components crucial for edge definition are often discarded

- Edge detection and adaptive compression techniques help preserve important edges

- Wavelet-based methods offer improved edge preservation compared to DCT-based compression

- Post-processing filters can enhance edges in decompressed images

Color banding problems

- Occurs when smooth color gradients are compressed, resulting in visible bands

- More pronounced in areas with subtle color transitions (skies, shadows)

- Dithering techniques can be applied to reduce the visibility of color banding

- Higher bit depth and careful quantization help minimize banding artifacts

- Perceptual quantization strategies prioritize preserving smooth color transitions
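
Banding and dithering can be demonstrated on a 1D gradient. This sketch quantizes a smooth ramp to 4 levels, once by plain rounding and once with ordered dithering using a period-4 threshold pattern (a 1D analogue of a Bayer matrix, chosen here for illustration). The longest run of identical output values is used as a rough stand-in for band width.

```python
import math

BAYER_4 = [0.125, 0.625, 0.375, 0.875]  # 1D ordered-dither thresholds, period 4


def quantize_plain(values, levels):
    """Round each value in [0, 1] to the nearest of `levels` evenly spaced levels."""
    top = levels - 1
    return [round(v * top) for v in values]


def quantize_dithered(values, levels):
    """Add a position-dependent threshold before flooring to spread rounding error spatially."""
    top = levels - 1
    return [min(top, math.floor(v * top + BAYER_4[i % 4])) for i, v in enumerate(values)]


def longest_run(seq):
    """Length of the longest stretch of identical consecutive values."""
    best = run = 1
    for a, b in zip(seq, seq[1:]):
        run = run + 1 if a == b else 1
        best = max(best, run)
    return best


ramp = [i / 255 for i in range(256)]  # smooth gradient, e.g. a sky
plain = quantize_plain(ramp, 4)
dithered = quantize_dithered(ramp, 4)
print(longest_run(plain), longest_run(dithered))
```

Plain rounding produces a few wide flat bands with hard steps between them; the dithered output uses the same four levels but mixes neighbouring levels in a fine pattern, so the eye averages it back into a gradient.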

Compression artifacts mitigation

- Deblocking filters reduce blocking artifacts, either as post-processing for JPEG or in-loop in video codecs such as H.264/AVC and H.265/HEVC

- Adaptive quantization techniques reduce artifacts in complex image regions

- Super-resolution methods can improve the quality of heavily compressed images

- Machine learning approaches, such as convolutional neural networks, are trained to remove compression artifacts

- Hybrid compression schemes combine multiple techniques to minimize artifacts
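
The core idea behind deblocking can be sketched on a single row of pixels: look at each 8-pixel block boundary, and if the step across it is small (more likely a quantization artifact than a real edge), spread it over the neighbouring pixels; large steps are left alone. This is a hypothetical, deliberately simplified filter for illustration, not the H.264/AVC or HEVC deblocking filter, which adapts its strength to coding modes and filters in both directions. The threshold of 10 is an arbitrary assumption.

```python
def deblock_row(row, block=8, threshold=10):
    """Soften small steps at block boundaries; keep large steps (likely real edges)."""
    out = list(row)
    for b in range(block, len(row) - 1, block):
        p1, p0, q0, q1 = row[b - 2], row[b - 1], row[b], row[b + 1]
        step = q0 - p0
        if 0 < abs(step) < threshold:
            # Spread the jump across the four pixels nearest the boundary
            out[b - 2] = p1 + step / 8
            out[b - 1] = p0 + 3 * step / 8
            out[b] = q0 - 3 * step / 8
            out[b + 1] = q1 - step / 8
    return out


artifact = [100.0] * 8 + [106.0] * 8  # small step: typical blocking artifact
edge = [20.0] * 8 + [200.0] * 8       # large step: real object edge
print(deblock_row(artifact)[5:11])    # prints: [100.0, 100.75, 102.25, 103.75, 105.25, 106.0]
print(deblock_row(edge) == edge)      # prints: True
```

The threshold test is the essential design choice: filtering every boundary would blur genuine edges, which is the trade-off real deblocking filters manage with more elaborate, adaptive decisions.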