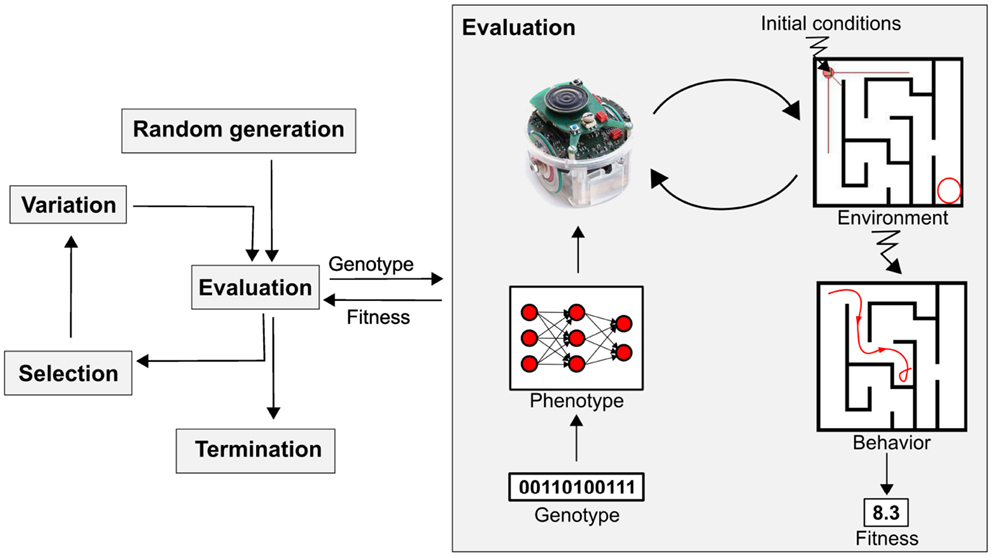

Evolutionary algorithms are nature-inspired optimization techniques with five key components: population initialization, fitness evaluation, selection, genetic operators, and termination criteria. These elements work together to simulate evolution, guiding the search for optimal solutions in complex problem spaces.

Understanding these components is crucial for grasping how evolutionary algorithms function. From encoding solutions as chromosomes to designing effective fitness functions, each aspect plays a vital role in the algorithm's ability to solve diverse problems across various domains.

Evolutionary Algorithm Components

Core Components and Their Functions

- Evolutionary algorithms consist of five main components

- Population initialization creates initial set of candidate solutions

- Generated randomly or using domain-specific knowledge

- Fitness evaluation assesses quality of each individual

- Based on predefined criteria or objectives

- Selection mechanisms choose parents for next generation

- Typically favor individuals with higher fitness

- Genetic operators create new individuals

- Crossover recombines existing solutions

- Mutation modifies solutions

- Termination criteria define when algorithm stops

- Examples include maximum generations or desired fitness level

- Population initialization creates initial set of candidate solutions

Population Initialization and Genetic Operators

- Population initialization generates diverse starting solutions

- Random generation ensures broad coverage of solution space

- Domain-specific heuristics can guide initial population (manufacturing processes, financial models)

- Genetic operators mimic biological evolution to create new solutions

- Crossover combines traits from two parent solutions (single-point, uniform)

- Mutation introduces small random changes to maintain diversity (bit-flip, Gaussian)

- Operator choice and probability affect exploration-exploitation balance

Individual Representation in Populations

Chromosome Encoding Methods

- Individuals represented as chromosomes encoding potential solutions

- Binary representation uses strings of 0s and 1s

- Each bit represents specific trait or parameter (gene sequences, digital circuit designs)

- Real-valued representation uses floating-point numbers

- Directly encodes continuous variables (optimization of physical parameters, neural network weights)

- Integer representation uses whole numbers

- Encodes discrete variables or categorical choices (scheduling problems, resource allocation)

- Permutation representation for ordering problems

- Chromosome represents specific sequence or arrangement (traveling salesman problem, job shop scheduling)

Advanced Representation Techniques

- Tree-based representation common in genetic programming

- Individuals represented as hierarchical structures of nodes and branches (mathematical expressions, decision trees)

- Graph-based representation for complex structures

- Encodes relationships between components (neural network architectures, molecule design)

- Mixed representations combine multiple encoding types

- Tailored for problems with diverse parameter types (robot controller design, game strategy optimization)

- Representation choice impacts algorithm performance and effectiveness

- Must be tailored to specific problem domain and constraints

Fitness Evaluation in Algorithms

Fitness Function Design and Implementation

- Fitness evaluation quantifies quality of each individual

- Fitness function assigns numerical value to individuals

- Reflects suitability as solution to problem

- Single-objective optimization uses scalar fitness value

- Directly represents solution quality (minimizing cost, maximizing efficiency)

- Multi-objective optimization evaluates multiple criteria

- Often results in set of Pareto-optimal solutions (balancing performance and energy consumption)

- Constraint handling techniques incorporated into evaluation

- Penalize or repair solutions violating problem constraints (structural integrity in engineering design)

- Effective fitness function crucial for algorithm success

- Guides search process towards optimal solutions

Advanced Evaluation Techniques

- Computationally expensive evaluations common in complex problems

- May require surrogate models or approximation techniques (computational fluid dynamics, finite element analysis)

- Interactive fitness evaluation involves human input

- Used in subjective domains (artistic design, user experience optimization)

- Co-evolutionary fitness evaluation

- Individuals evaluated against other evolving populations (game AI, competitive strategies)

- Dynamic fitness landscapes

- Evaluation criteria change over time (adaptive control systems, financial market prediction)

- Noise and uncertainty handling in fitness evaluation

- Techniques to deal with stochastic or imperfect evaluations (robust optimization, sampling methods)

Termination Criteria for Algorithms

Common Termination Conditions

- Maximum number of generations or evaluations

- Limits computational resources used by algorithm (fixed time budget scenarios)

- Achieving target fitness value or solution quality

- Stops when satisfactory solution found (reaching desired accuracy in optimization)

- Lack of improvement in best fitness over specified generations

- Indicates potential convergence (stagnation in search process)

- Diversity loss in population

- Measured by genetic similarity or fitness variance (premature convergence detection)

- Time-based criteria limit algorithm runtime

- Useful in real-time or resource-constrained applications (online learning systems)

Advanced Termination Strategies

- Hybrid termination criteria combine multiple conditions

- Balances solution quality and computational efficiency (combining generation limit with improvement threshold)

- Adaptive termination criteria adjust during runtime

- Based on algorithm progress or problem characteristics (dynamic allocation of computational budget)

- Statistical termination tests

- Use statistical measures to determine convergence (confidence intervals on solution quality)

- Multi-objective termination criteria

- Consider trade-offs between different objectives (Pareto front stability in multi-objective optimization)

- Ensemble-based termination

- Aggregate decisions from multiple termination criteria (voting mechanism among different stopping conditions)