Spanning Trees

Fundamental Concepts of Spanning Trees

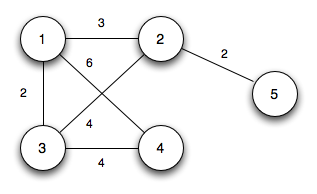

A spanning tree of a connected graph is a subgraph that reaches every vertex while containing no cycles. Think of it as stripping a graph down to the bare minimum connections needed so that every vertex is still reachable from every other vertex.

- A spanning tree of a graph with vertices always has exactly edges. Any fewer and some vertex would be disconnected; any more and you'd create a cycle.

- It's acyclic by definition. If you ever add one more edge from the original graph to a spanning tree, you'll form exactly one cycle.

- A single graph can have many different spanning trees. A complete graph on 4 vertices, for example, has 16 distinct spanning trees.

In a weighted graph, each edge carries a numerical value (its edge weight) representing a cost, distance, capacity, or similar quantity. Spanning trees become especially interesting in weighted graphs because you can ask: which spanning tree has the smallest total weight?

Properties and Theorems of Spanning Trees

Two properties underpin every MST algorithm. Understanding them makes the algorithms feel logical rather than arbitrary.

Cut Property. Take any cut of the graph (a partition of the vertices into two non-empty groups). The lightest edge crossing that cut must belong to every MST (assuming that lightest edge is unique). This tells you which edges to include.

Cycle Property. Look at any cycle in the graph. The heaviest edge in that cycle cannot appear in any MST (again, assuming it's uniquely the heaviest). This tells you which edges to exclude.

Both properties assume distinct edge weights for the "must/cannot" guarantees. When weights tie, there can be multiple valid MSTs, and the properties still guide correct choices even if they no longer force a unique one.

Minimum Spanning Trees (MSTs)

Characteristics and Applications of MSTs

A minimum spanning tree is a spanning tree whose total edge weight is as small as possible. It connects all vertices of a weighted, undirected graph at the lowest total cost.

- If all edge weights are distinct, the MST is unique. If some weights repeat, there may be several MSTs with the same total weight.

- MSTs show up in practical problems: designing cable or pipeline networks at minimum cost, clustering data points (by removing the heaviest edges from an MST), and building approximation algorithms for NP-hard problems like the Traveling Salesman Problem.

Greedy Approach in MST Construction

Both major MST algorithms are greedy: they build the solution one edge at a time, always picking the locally best option, and never undoing a previous choice.

Why does greedy work here when it fails for so many other optimization problems? Because the cut and cycle properties guarantee that each locally optimal edge choice is also globally safe. At every step, the lightest edge that doesn't violate the spanning tree structure is provably part of some MST.

The greedy approach starts with no edges selected and adds them one by one. It runs in polynomial time, which makes it practical even for large graphs.

MST Algorithms

Kruskal's Algorithm

Kruskal's algorithm takes a global view: sort every edge by weight, then greedily add edges from lightest to heaviest, skipping any edge that would create a cycle.

Steps:

-

Sort all edges in non-decreasing order of weight.

-

Initialize a disjoint-set (union-find) data structure where each vertex is its own set.

-

Walk through the sorted edge list. For each edge :

- If and are in different sets, add the edge to the MST and merge (union) their sets.

- If and are already in the same set, skip the edge (it would create a cycle).

-

Stop once you've added edges.

Why the union-find structure matters: Checking whether two vertices are in the same set (and merging sets) can be done in nearly constant time with path compression and union by rank. Without it, cycle detection would be much slower.

Time complexity: , dominated by the sorting step. Since , this is equivalently . Kruskal's tends to perform best on sparse graphs where is much smaller than .

Prim's Algorithm

Prim's algorithm takes a local view: start from one vertex and grow the tree outward, always attaching the cheapest edge that connects a vertex already in the tree to one that isn't.

Steps:

-

Pick any starting vertex and add it to the MST.

-

Insert all edges adjacent to that vertex into a priority queue (min-heap), keyed by weight.

-

While the MST has fewer than edges:

- Extract the minimum-weight edge from the priority queue.

- If it connects to a vertex not yet in the MST, add the edge and that vertex to the tree. Then insert all edges from the newly added vertex into the priority queue.

- If both endpoints are already in the MST, discard the edge.

-

The MST is complete when it contains edges.

Time complexity: with a binary heap. Using a Fibonacci heap improves this to , which is better for dense graphs. Prim's tends to outperform Kruskal's on dense graphs where approaches .

Kruskal's vs. Prim's at a glance: Kruskal's sorts all edges globally and uses union-find; Prim's grows from a single vertex using a priority queue. Both produce a valid MST. Choose Kruskal's for sparse graphs, Prim's for dense ones.