Calculus of variations provides the mathematical foundation for optimizing functionals, which are mappings from function spaces to real numbers. In control theory, these tools are essential for finding optimal trajectories and control strategies. This guide covers the core concepts, the Euler-Lagrange equation, constrained problems, Hamilton's principle, direct methods, and applications to optimal control.

Fundamental concepts of calculus of variations

Calculus of variations deals with finding extrema (maxima or minima) of functionals rather than ordinary functions. While regular calculus optimizes functions of numbers, calculus of variations optimizes over entire function spaces. This distinction is what makes it so useful in control theory, where you're searching for the best function (a trajectory or control input over time), not just the best number.

Functionals and function spaces

A functional assigns a real number to each function in a given function space. Think of it as a "function of functions." For example, the arc length of a curve between two points is a functional: it takes in a function and outputs a single number.
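As a concrete illustration, the arc-length functional $J[y] = \int_a^b \sqrt{1 + y'(x)^2}\,dx$ can be approximated numerically. The sketch below is a minimal example rather than a general-purpose routine: the helper name `arc_length` and the polyline approximation with `n` segments are assumptions made for illustration.

```python
import math

def arc_length(y, a, b, n=10_000):
    """Approximate J[y] = integral of sqrt(1 + y'(x)^2) over [a, b] with a polyline."""
    h = (b - a) / n
    total = 0.0
    for i in range(n):
        x0, x1 = a + i * h, a + (i + 1) * h
        # Length of the straight segment joining two neighbouring points on the curve.
        total += math.hypot(x1 - x0, y(x1) - y(x0))
    return total

# Two different functions joining (0, 0) and (1, 1): each maps to a single number.
straight = arc_length(lambda x: x, 0.0, 1.0)
parabola = arc_length(lambda x: x * x, 0.0, 1.0)
```

Here `straight` comes out close to $\sqrt{2}$ and `parabola` is about 1.479, so the functional assigns a larger value to the curved path.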

Function spaces are sets of functions sharing certain properties:

- $C[a,b]$: continuous functions on $[a,b]$

- $L^2[a,b]$: square-integrable functions on $[a,b]$

- $C^1[a,b]$: continuously differentiable functions on $[a,b]$

The choice of function space depends on the problem. If your solution needs to be smooth, you work in $C^1[a,b]$. If you only need finite energy, $L^2[a,b]$ may suffice.

Weak and strong variations

Variations are perturbations applied to a candidate function to analyze whether it's truly an extremum.

- Weak variations are small perturbations that satisfy the boundary conditions and keep both the function and its derivative close to the original. They're "weak" because the function and its derivative both change by small amounts. These are used to derive necessary conditions like the Euler-Lagrange equation.

- Strong variations only require the function itself to stay close to the original. The derivative may change by a large amount even though the function change is small. These are used to establish sufficient conditions for strong extrema.

The distinction matters because a function can satisfy the necessary conditions from weak variations without being a true extremum under strong variations.
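A standard example, taking the interval to be $[0, 1]$ for concreteness (an assumption made here), shows how a perturbation can be small in value but large in slope:

```latex
\eta_n(x) = \frac{1}{n}\,\sin\!\left(n^2 \pi x\right), \qquad
\max_{[0,1]} |\eta_n| = \frac{1}{n} \to 0, \qquad
\max_{[0,1]} |\eta_n'| = n\pi \to \infty
```

Each $\eta_n$ vanishes at both endpoints, so it is an admissible variation. As $n$ grows it becomes an arbitrarily small strong variation, but it is never a small weak variation because its derivative keeps growing.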

Necessary and sufficient conditions for extrema

- Necessary conditions must hold at any extremum but don't guarantee one exists. The Euler-Lagrange equation is the primary necessary condition.

- Sufficient conditions confirm that a candidate function actually is an extremum.

The key sufficient conditions are:

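- Legendre condition: For a minimum, $\partial^2 F / \partial y'^2 \ge 0$ along the extremal, where $F(x, y, y')$ is the integrand of the functional. The strengthened form used for sufficiency requires strict inequality. For a maximum, this quantity must be non-positive.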

- Jacobi condition: Ensures no conjugate points exist in the interval, which rules out saddle points and confirms the extremum is genuine.

Together, the Euler-Lagrange equation, Legendre condition, and Jacobi condition form the classical toolkit for verifying extrema.

Euler-Lagrange equation

The Euler-Lagrange equation is the central result in calculus of variations. It provides a necessary condition that any extremizing function must satisfy, converting a variational problem into a differential equation.

Derivation of Euler-Lagrange equation

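Consider a functional of the form:

$$J[y] = \int_a^b F(x, y, y')\,dx$$

where $y(x)$ is the unknown function and $F$ is a known integrand depending on $x$, $y$, and $y'$.

The derivation proceeds in these steps:

- Suppose $y(x)$ is the extremizing function. Introduce a perturbed function $\tilde{y}(x) = y(x) + \epsilon\,\eta(x)$, where $\eta(x)$ is an arbitrary smooth function satisfying $\eta(a) = \eta(b) = 0$ (so the endpoints stay fixed), and $\epsilon$ is a small parameter.

- Substitute $\tilde{y}$ into $J$ to get $J(\epsilon)$, which is now an ordinary function of $\epsilon$.

- Set $\left.\dfrac{dJ}{d\epsilon}\right|_{\epsilon = 0} = 0$ (the first variation must vanish).

- Apply integration by parts to eliminate the $\eta'$ term. The boundary term vanishes because $\eta(a) = \eta(b) = 0$.

- Since $\eta$ is arbitrary, the factor multiplying it in the integrand must vanish (the fundamental lemma of the calculus of variations), yielding:

$$\frac{\partial F}{\partial y} - \frac{d}{dx}\left(\frac{\partial F}{\partial y'}\right) = 0$$

This is the Euler-Lagrange equation. Any function $y$ that extremizes $J$ must satisfy it.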
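As a worked example, take the arc-length integrand (an illustrative choice, with $F$ denoting the integrand of the functional). The Euler-Lagrange equation then recovers the familiar fact that the shortest curve between two points is a straight line:

```latex
\begin{aligned}
F(x, y, y') &= \sqrt{1 + y'^2}, \qquad
\frac{\partial F}{\partial y} = 0, \qquad
\frac{\partial F}{\partial y'} = \frac{y'}{\sqrt{1 + y'^2}} \\[4pt]
\frac{\partial F}{\partial y} - \frac{d}{dx}\left(\frac{\partial F}{\partial y'}\right) = 0
&\;\Longrightarrow\;
\frac{d}{dx}\left(\frac{y'}{\sqrt{1 + y'^2}}\right) = 0
\;\Longrightarrow\; y' = \text{const}
\;\Longrightarrow\; y(x) = c_1 x + c_2
\end{aligned}
```

The constants $c_1$ and $c_2$ are fixed by the two endpoint conditions.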

First and second order conditions

The Euler-Lagrange equation is a first-order necessary condition. It is first-order in the sense that it comes from the first variation, even though the resulting ODE is generally second-order in $y$.
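The vanishing of the first variation can be checked numerically. The sketch below is a minimal illustration under stated assumptions: the functional is arc length on $[0, 1]$, the candidate is the straight line $y(x) = x$, the perturbation is $\eta(x) = \sin(\pi x)$, and the helper name `J` and the step `d` are hypothetical choices.

```python
import math

def J(eps, n=2000):
    """Arc length of y(x) = x + eps*sin(pi*x) on [0, 1], using the midpoint rule."""
    h = 1.0 / n
    total = 0.0
    for i in range(n):
        x = (i + 0.5) * h
        dy = 1.0 + eps * math.pi * math.cos(math.pi * x)   # derivative y'(x)
        total += math.sqrt(1.0 + dy * dy) * h
    return total

d = 1e-4
# Central-difference estimate of dJ/d(eps) at eps = 0, i.e. the first variation.
first_variation = (J(d) - J(-d)) / (2 * d)
# J grows when the straight line is perturbed in either direction.
increase = (J(0.1) - J(0.0), J(-0.1) - J(0.0))
```

The estimate `first_variation` is zero up to rounding, while both entries of `increase` are positive. The first fact is the first-order condition; the second is what the second-order conditions are designed to detect.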

To determine whether a solution is a minimum, maximum, or saddle point, you need second-order conditions:

- Legendre condition: Check the sign of $F_{y'y'} = \partial^2 F / \partial y'^2$ along the extremal. For a minimum, $F_{y'y'} \ge 0$ (strengthened: $F_{y'y'} > 0$). For a maximum, $F_{y'y'} \le 0$.

- Jacobi condition: Analyze the Jacobi equation (a linear ODE derived from the second variation) and verify that no conjugate points exist in the open interval $(a, b)$.

A solution satisfying the Euler-Lagrange equation, the strengthened Legendre condition, and the Jacobi condition with no conjugate point anywhere in $(a, b]$ is a weak local minimum (or maximum, with the Legendre inequality reversed).
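A classical worked example shows how a conjugate point limits the interval on which an extremal is a minimum. The integrand $F = \tfrac{1}{2}(y'^2 - y^2)$ and the left endpoint $a = 0$ are assumptions chosen here for illustration. For this $F$ the Jacobi equation $\frac{d}{dx}\left(F_{y'y'}\,u'\right) - \left(F_{yy} - \frac{d}{dx}F_{yy'}\right)u = 0$ reduces to a harmonic oscillator:

```latex
\begin{aligned}
F &= \tfrac{1}{2}\left(y'^2 - y^2\right), \qquad F_{y'y'} = 1 > 0
  \quad \text{(strengthened Legendre condition holds)} \\
\text{Jacobi equation:}\quad & u'' + u = 0, \quad u(0) = 0
  \;\Longrightarrow\; u(x) = \sin x \\
\text{first conjugate point to } x = 0\text{:}\quad & x = \pi
\end{aligned}
```

The extremal is therefore a minimum when the interval is shorter than $\pi$. Once $b > \pi$, a conjugate point lies inside the interval and the extremal is no longer a minimum.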

Generalizations and extensions

The basic Euler-Lagrange equation extends to several more complex settings:

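- Higher-order derivatives: If $F$ depends on $y, y', \dots, y^{(n)}$, the equation becomes:

$$\sum_{k=0}^{n} (-1)^k \frac{d^k}{dx^k}\left(\frac{\partial F}{\partial y^{(k)}}\right) = 0$$

- Multiple functions: If the functional depends on $y_1, \dots, y_n$, you get a separate Euler-Lagrange equation for each $y_i$.

- Multiple independent variables: For functionals like $J[u] = \iint_\Omega F(x, y, u, u_x, u_y)\,dx\,dy$, the Euler-Lagrange equation becomes a PDE.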
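For the case of two independent variables, a short worked example (with the Dirichlet energy chosen here as an illustrative integrand for an unknown function $u(x, y)$) shows the PDE that results:

```latex
\frac{\partial F}{\partial u}
- \frac{\partial}{\partial x}\left(\frac{\partial F}{\partial u_x}\right)
- \frac{\partial}{\partial y}\left(\frac{\partial F}{\partial u_y}\right) = 0,
\qquad
F = \tfrac{1}{2}\left(u_x^2 + u_y^2\right)
\;\Longrightarrow\;
u_{xx} + u_{yy} = 0
```

Minimizing the Dirichlet energy therefore leads to Laplace's equation.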

Variational problems with constraints

Most real-world optimization problems involve constraints. In control theory, you might need to minimize fuel usage while reaching a target state, or optimize a trajectory subject to physical limitations.

Holonomic and non-holonomic constraints

- Holonomic constraints involve only the functions and independent variables: $g(x, y_1, \dots, y_n) = 0$. These are algebraic relationships that restrict which configurations are allowed.

- Non-holonomic constraints also involve derivatives: $g(x, y_1, \dots, y_n, y_1', \dots, y_n') = 0$. These restrict velocities or rates of change and cannot generally be integrated into purely algebraic form.

Holonomic constraints are handled straightforwardly with Lagrange multipliers. Non-holonomic constraints require more advanced techniques such as the Lagrange-d'Alembert principle.

Lagrange multipliers and constrained optimization

The method of Lagrange multipliers converts a constrained problem into an unconstrained one by introducing auxiliary variables.

- Start with the functional to optimize, $J[y] = \int_a^b F(x, y, y')\,dx$ with $y = (y_1, \dots, y_n)$, subject to a constraint $g(x, y) = 0$.

- Introduce a Lagrange multiplier $\lambda(x)$ and form the augmented integrand: $\tilde{F} = F + \lambda(x)\,g$.

- Apply the Euler-Lagrange equation to $\tilde{F}$ as if it were unconstrained.

- Solve the resulting system of equations (Euler-Lagrange equations plus the constraint equation) for $y(x)$ and $\lambda(x)$.

The multiplier $\lambda$ has a physical interpretation: it represents the sensitivity of the optimal cost to changes in the constraint.

Isoperimetric problems and applications

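Isoperimetric problems are constrained variational problems where the constraint is an integral condition:

$$\int_a^b G(x, y, y')\,dx = C$$

where $C$ is a prescribed constant and the functional to optimize is $J[y] = \int_a^b F(x, y, y')\,dx$.

The classic example: find the closed curve of fixed perimeter that encloses the maximum area. The answer is a circle.

To solve these problems:

- Introduce a constant Lagrange multiplier $\lambda$ and form the modified functional:

$$J^*[y] = \int_a^b \left[F(x, y, y') + \lambda\,G(x, y, y')\right]dx$$

- Apply the Euler-Lagrange equation to the combined integrand $F + \lambda G$.

- Use the integral constraint to determine $\lambda$.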
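The steps above can be traced on a variant of the classic problem. The setup is an assumption made for illustration: a curve $y(x)$ over $[a, b]$ with prescribed length $\ell$ that should enclose the largest area under it.

```latex
\begin{aligned}
&\text{maximize } \int_a^b y\,dx
 \quad \text{subject to} \quad \int_a^b \sqrt{1 + y'^2}\,dx = \ell \\[4pt]
&F + \lambda G = y + \lambda\sqrt{1 + y'^2}
 \;\Longrightarrow\;
 1 - \frac{d}{dx}\left(\frac{\lambda\,y'}{\sqrt{1 + y'^2}}\right) = 0 \\[4pt]
&\frac{\lambda\,y'}{\sqrt{1 + y'^2}} = x - c_1
 \;\Longrightarrow\;
 (x - c_1)^2 + (y - c_2)^2 = \lambda^2
\end{aligned}
```

The extremal is a circular arc of radius $|\lambda|$. The constants $c_1$, $c_2$, and $\lambda$ follow from the two endpoint conditions and the length constraint.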

In control theory, isoperimetric problems arise when optimizing performance subject to resource limits (e.g., total fuel, total time, or total energy expenditure).

Hamilton's principle and least action

Hamilton's principle provides a variational foundation for all of classical mechanics. Rather than writing force-balance equations directly, you define a single scalar quantity (the action) and require it to be stationary. The equations of motion then follow automatically.

Formulation of Hamilton's principle

The action integral is:

$$S[q] = \int_{t_1}^{t_2} L(q, \dot{q}, t)\,dt$$

where $L$ is the Lagrangian, $q$ represents generalized coordinates, and $\dot{q}$ represents generalized velocities.

Hamilton's principle states: the actual path taken by the system makes the action stationary among all paths with the same endpoints. Mathematically, $\delta S = 0$ for all variations $\delta q$ that vanish at $t_1$ and $t_2$.

Applying the calculus of variations to this condition yields the Euler-Lagrange equations for the system, which are equivalent to Newton's equations of motion.

Principle of least action in mechanics

In mechanics, the Lagrangian is defined as:

$$L = T - V$$

where $T$ is kinetic energy and $V$ is potential energy. Hamilton's principle then says the system evolves to make $\int_{t_1}^{t_2} (T - V)\,dt$ stationary.

This variational formulation is often more convenient than Newton's laws for complex systems because:

- It works naturally with generalized coordinates (no need to resolve forces along coordinate axes).

- Constraints can be incorporated directly through the choice of coordinates or via Lagrange multipliers.

- It generalizes readily to field theories and relativistic mechanics.

Note: the name "least action" is slightly misleading. The action is stationary (first variation is zero), but it's not always a minimum. For Lagrangians of the form $T - V$ it is a minimum over sufficiently short time intervals, while over longer intervals the true path can be a saddle point of the action.
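A small numerical experiment makes the stationarity of the action concrete. This is a sketch under stated assumptions: a particle thrown straight up in uniform gravity that returns to its starting height, with illustrative values for `MASS`, `GRAV`, and `T_END`, a hypothetical helper `action`, and a sinusoidal perturbation that vanishes at both endpoints.

```python
import math

MASS, GRAV, T_END = 1.0, 9.81, 1.0   # illustrative values, not from the text

def action(eps, n=2000):
    """Action S = integral of (kinetic - potential) for the true path plus eps*sin(pi*t/T_END)."""
    h = T_END / n
    total = 0.0
    for i in range(n):
        t = (i + 0.5) * h
        # True path with q(0) = q(T_END) = 0 solves q'' = -GRAV.
        q = 0.5 * GRAV * t * (T_END - t) + eps * math.sin(math.pi * t / T_END)
        qdot = (0.5 * GRAV * (T_END - 2.0 * t)
                + eps * (math.pi / T_END) * math.cos(math.pi * t / T_END))
        total += (0.5 * MASS * qdot ** 2 - MASS * GRAV * q) * h
    return total

s_true = action(0.0)
s_perturbed = [action(eps) for eps in (-0.1, 0.1)]
```

Both perturbed paths give a larger action than the true path. Because the potential is linear in $q$ here, the increase is exactly quadratic in `eps`, so in this particular example the stationary path is a genuine minimum.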

Conservation laws and Noether's theorem

Noether's theorem establishes a deep connection between symmetries and conservation laws: every continuous symmetry of the action integral corresponds to a conserved quantity.

| Symmetry | Conserved Quantity |
|---|---|
| Time translation ($L$ doesn't depend explicitly on $t$) | Energy |
| Spatial translation ($L$ doesn't depend on position) | Linear momentum |
| Rotational symmetry ($L$ doesn't depend on angle) | Angular momentum |

This theorem is one of the most powerful results in theoretical physics. In control theory, identifying symmetries can simplify optimal control problems by reducing the number of independent variables.
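The first row of the table can be verified directly for a single generalized coordinate $q$ with Lagrangian $L(q, \dot{q}, t)$. The energy function $E$ below is the standard definition, stated here as a worked check:

```latex
E = \dot{q}\,\frac{\partial L}{\partial \dot{q}} - L,
\qquad
\frac{dE}{dt}
= \dot{q}\left(\frac{d}{dt}\frac{\partial L}{\partial \dot{q}} - \frac{\partial L}{\partial q}\right)
  - \frac{\partial L}{\partial t}
= -\frac{\partial L}{\partial t}
\quad \text{along solutions of the Euler-Lagrange equation}
```

If $L$ has no explicit time dependence, $E$ is constant. For $L = \tfrac{1}{2} m \dot{q}^2 - V(q)$ this gives $E = \tfrac{1}{2} m \dot{q}^2 + V(q)$, the usual total energy.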

Direct methods in calculus of variations

Direct methods bypass the Euler-Lagrange equation entirely. Instead of solving a differential equation, they approximate the solution within a finite-dimensional subspace and minimize the functional directly. This converts the infinite-dimensional variational problem into a finite-dimensional optimization problem that can be solved numerically.

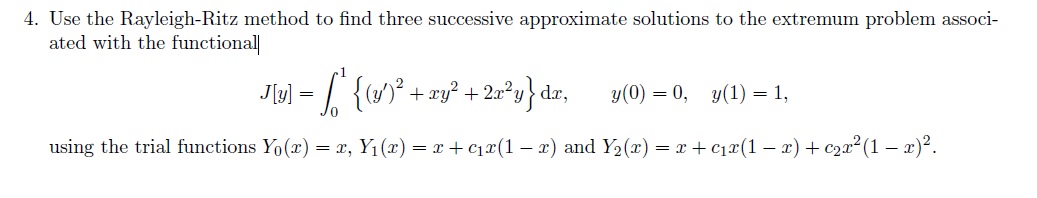

Ritz and Galerkin methods

Ritz method:

- Choose a set of basis functions $\phi_1(x), \dots, \phi_N(x)$ that satisfy the boundary conditions.

- Approximate the solution as $y_N(x) = \sum_{i=1}^{N} c_i\,\phi_i(x)$.

- Substitute into the functional to get $J(c_1, \dots, c_N)$, an ordinary function of the coefficients.

- Minimize with respect to $c_1, \dots, c_N$ by setting $\partial J / \partial c_i = 0$ for each $i$.

- Solve the resulting system of algebraic equations for the coefficients.

Galerkin method: Similar setup, but instead of minimizing the functional, you require the residual of the Euler-Lagrange equation to be orthogonal to each basis function:

$$\int_a^b R(x)\,\phi_i(x)\,dx = 0, \qquad i = 1, \dots, N$$

where $R(x)$ is the residual obtained by substituting $y_N$ into the Euler-Lagrange equation. For self-adjoint problems, the Ritz and Galerkin methods produce identical results.
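The Ritz steps can be sketched in a few lines of pure Python. Everything specific here is an assumption made for illustration: the model functional $J[y] = \int_0^1 \left(\tfrac{1}{2} y'^2 - y\right) dx$ with $y(0) = y(1) = 0$ (exact minimizer $y = x(1 - x)/2$), the sine basis $\phi_k(x) = \sin(k\pi x)$, midpoint quadrature, and the helper names `gauss_solve` and `ritz_solve`.

```python
import math

def gauss_solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]      # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x

def ritz_solve(n_basis=5, n_quad=2000):
    """Ritz approximation with basis sin(k*pi*x), k = 1..n_basis."""
    phi = lambda k, x: math.sin(k * math.pi * x)
    dphi = lambda k, x: k * math.pi * math.cos(k * math.pi * x)
    h = 1.0 / n_quad
    mids = [(j + 0.5) * h for j in range(n_quad)]
    ks = range(1, n_basis + 1)
    # Setting dJ/dc_i = 0 gives K c = b with
    # K_ij = integral of phi_i' * phi_j' and b_i = integral of phi_i.
    K = [[h * sum(dphi(i, x) * dphi(j, x) for x in mids) for j in ks] for i in ks]
    b = [h * sum(phi(i, x) for x in mids) for i in ks]
    c = gauss_solve(K, b)
    return lambda x: sum(ck * phi(k, x) for k, ck in zip(ks, c))

y_approx = ritz_solve(n_basis=5)
```

With five basis functions, `y_approx(0.5)` agrees with the exact value 0.125 to about three decimal places. For this basis the matrix `K` is essentially diagonal, so the linear solve is trivial; a general basis would produce a full matrix, which is why the sketch keeps a generic solver.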

Finite element approximations

The finite element method (FEM) is a specific implementation of direct methods that divides the domain into small subdomains (elements) and uses piecewise polynomial basis functions.

- Discretize the domain into elements (e.g., subintervals).

- Define local basis functions (typically piecewise linear, quadratic, or cubic polynomials) on each element.

- Assemble the global system by combining contributions from all elements.

- Solve the resulting algebraic system.

FEM is particularly well-suited for problems with complex geometries and non-uniform material properties. Its mathematical foundation rests on Sobolev spaces and weak formulations of the variational problem.
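The four FEM steps can be sketched for a one-dimensional model problem. The problem $-y'' = 1$ on $[0, 1]$ with $y(0) = y(1) = 0$, the uniform mesh, the piecewise-linear hat functions, and the function name `fem_poisson_1d` are all assumptions chosen for illustration.

```python
def fem_poisson_1d(n_elements=10):
    """Piecewise-linear FEM for -y'' = 1 on [0, 1] with y(0) = y(1) = 0."""
    h = 1.0 / n_elements
    n_nodes = n_elements + 1
    diag = [0.0] * n_nodes
    off = [0.0] * (n_nodes - 1)      # off[i] couples node i and node i + 1
    load = [0.0] * n_nodes
    # Assemble: each element adds its local stiffness (1/h)*[[1,-1],[-1,1]]
    # and its local load (h/2)*[1, 1] for the source term f = 1.
    for e in range(n_elements):
        diag[e] += 1.0 / h
        diag[e + 1] += 1.0 / h
        off[e] -= 1.0 / h
        load[e] += 0.5 * h
        load[e + 1] += 0.5 * h
    # Impose y = 0 at both ends by keeping only the interior unknowns.
    d, o, r = diag[1:-1], off[1:-1], load[1:-1]
    m = len(d)
    # Thomas algorithm for the symmetric tridiagonal system.
    for i in range(1, m):
        w = o[i - 1] / d[i - 1]
        d[i] -= w * o[i - 1]
        r[i] -= w * r[i - 1]
    y = [0.0] * m
    y[-1] = r[-1] / d[-1]
    for i in range(m - 2, -1, -1):
        y[i] = (r[i] - o[i] * y[i + 1]) / d[i]
    nodes = [i * h for i in range(n_nodes)]
    return nodes, [0.0] + y + [0.0]

nodes, values = fem_poisson_1d(10)
```

For this particular problem the nodal values coincide with the exact solution $y = x(1 - x)/2$ up to rounding. That is a special feature of the one-dimensional model problem, not something to expect in general.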

Convergence and error analysis

The quality of direct method approximations depends on the basis functions chosen and the refinement level.

- Convergence: As the number of basis functions (or elements) increases, the approximate solution should approach the exact solution in a suitable norm. Proving this rigorously requires tools from functional analysis.

- A priori error estimates bound the error before computing the solution, typically in terms of the element size $h$ and polynomial degree $p$. For example, FEM with piecewise linear elements often gives $O(h^2)$ convergence in the $L^2$ norm.

- A posteriori error estimates use the computed solution to assess accuracy and guide adaptive mesh refinement, concentrating elements where the error is largest.

Applications in control theory

Calculus of variations provides the theoretical backbone for optimal control. The goal is to find control inputs that minimize a cost functional while the system obeys its dynamics.

Optimal control problems and formulations

A standard optimal control problem has three components:

- System dynamics: $\dot{x}(t) = f(x(t), u(t), t)$, where $x$ is the state vector and $u$ is the control input.

- Cost functional: $J = \phi(x(t_f)) + \int_{t_0}^{t_f} L(x(t), u(t), t)\,dt$, where $L$ is the running cost and $\phi$ is the terminal cost.

- Constraints: Boundary conditions, state constraints, or control limits.

The objective is to find the optimal control $u^*(t)$ that minimizes $J$ subject to the dynamics and constraints. This is where the calculus of variations connects directly to control engineering.

Pontryagin's maximum principle

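Pontryagin's maximum principle extends the Euler-Lagrange framework to optimal control problems with control constraints. It introduces the Hamiltonian:

$$H(x, u, \lambda, t) = \lambda^\top f(x, u, t) - L(x, u, t)$$

where $\lambda$ is the vector of costate (adjoint) variables, $f$ is the system dynamics, and $L$ is the running cost. With this sign convention, minimizing the cost corresponds to maximizing $H$.

The necessary conditions are:

- State equation: $\dot{x} = \dfrac{\partial H}{\partial \lambda} = f(x, u, t)$

- Costate equation: $\dot{\lambda} = -\dfrac{\partial H}{\partial x}$

- Optimality condition: The optimal control maximizes $H$ at each time $t$: $u^*(t) = \arg\max_{u \in U} H(x^*(t), u, \lambda^*(t), t)$, where $U$ is the set of admissible controls.

- Boundary conditions: Specified at $t_0$ for the state and at $t_f$ for the costate (transversality conditions).

Solving these conditions typically leads to a two-point boundary value problem (state conditions at $t_0$, costate conditions at $t_f$), which is one of the main computational challenges.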
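A minimal worked example shows how the conditions fit together. The problem is an assumption chosen for illustration: scalar dynamics $\dot{x} = u$ with unconstrained control, both endpoints fixed at $x(0) = 0$ and $x(1) = 1$, running cost $\tfrac{1}{2}u^2$, and the sign convention $H = \lambda f - L$ so that the optimal control maximizes $H$.

```latex
\begin{aligned}
H &= \lambda u - \tfrac{1}{2}u^2, \qquad
\frac{\partial H}{\partial u} = \lambda - u = 0
\;\Longrightarrow\; u^* = \lambda
\quad (H \text{ is concave in } u, \text{ so this is a maximum}) \\[4pt]
\dot{\lambda} &= -\frac{\partial H}{\partial x} = 0
\;\Longrightarrow\; \lambda = \text{const}, \qquad
\dot{x} = \lambda \;\Longrightarrow\; x(t) = \lambda t \\[4pt]
x(1) &= 1 \;\Longrightarrow\; \lambda = 1, \qquad u^*(t) = 1, \qquad x^*(t) = t
\end{aligned}
```

Because both endpoints of the state are fixed here, no transversality condition is needed. The unknown constant $\lambda$ is determined by the terminal state instead.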

Dynamic programming and Hamilton-Jacobi-Bellman equation

Dynamic programming takes a different approach based on Bellman's principle of optimality: an optimal policy has the property that, regardless of the initial state and decision, the remaining decisions must be optimal with respect to the resulting state.

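This leads to the Hamilton-Jacobi-Bellman (HJB) equation for the optimal cost-to-go function $V(x, t)$:

$$-\frac{\partial V}{\partial t} = \min_{u} \left[ L(x, u, t) + \left(\frac{\partial V}{\partial x}\right)^{\top} f(x, u, t) \right]$$

with boundary condition $V(x, t_f) = \phi(x)$, where $L$ is the running cost, $\phi$ is the terminal cost, and $f$ is the system dynamics.

Once $V$ is found, the optimal control is:

$$u^*(x, t) = \arg\min_{u} \left[ L(x, u, t) + \left(\frac{\partial V}{\partial x}\right)^{\top} f(x, u, t) \right]$$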

A key advantage of dynamic programming over Pontryagin's principle is that it yields a feedback (closed-loop) control law rather than an open-loop trajectory. The main disadvantage is the "curse of dimensionality": the HJB equation is a PDE in the state space, which becomes computationally intractable for high-dimensional systems.
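For a scalar linear-quadratic problem the HJB equation collapses to an ordinary differential equation, which makes the feedback structure easy to see. The sketch assumes dynamics $\dot{x} = u$, running cost $x^2 + u^2$, and no terminal cost (all illustrative choices). Substituting the guess $V(x, t) = p(t)\,x^2$ into the HJB equation gives $u^* = -p(t)\,x$ and the Riccati equation $-\dot{p} = 1 - p^2$ with $p(t_f) = 0$. The function name `riccati_backward` is hypothetical.

```python
import math

def riccati_backward(t_f=5.0, n=5000):
    """Integrate -dp/dt = 1 - p^2 backward from p(t_f) = 0 with RK4; returns p(0)."""
    rhs = lambda p: 1.0 - p * p          # dp/ds in reversed time s = t_f - t
    h = t_f / n
    p = 0.0
    for _ in range(n):
        k1 = rhs(p)
        k2 = rhs(p + 0.5 * h * k1)
        k3 = rhs(p + 0.5 * h * k2)
        k4 = rhs(p + h * k3)
        p += h * (k1 + 2.0 * k2 + 2.0 * k3 + k4) / 6.0
    return p

p0 = riccati_backward()
# Feedback law at t = 0 for this problem: u = -p0 * x.
```

The computed `p0` matches the closed-form value $\tanh(t_f)$ and is already close to the steady-state gain of 1. The control depends on the current state $x$ rather than on a precomputed time history, which is the feedback property described above.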

Numerical methods for variational problems

Analytical solutions to variational problems are rare in practice. Numerical methods are essential for solving the differential equations and optimization problems that arise from the calculus of variations.

Discretization techniques and algorithms

Discretization converts the continuous problem into a finite-dimensional one:

- Finite difference methods approximate derivatives with difference quotients (e.g., $y'(x_i) \approx (y_{i+1} - y_i)/h$).

- Finite element methods approximate the solution with piecewise polynomials over a mesh.

- Collocation methods require the differential equation to be satisfied exactly at selected points.

The resulting algebraic optimization problem can be solved with gradient-based methods (steepest descent, conjugate gradient), Newton's method, or interior-point methods. The choice depends on problem size, structure, and required accuracy.

Shooting methods and boundary value problems

Optimal control problems often produce two-point boundary value problems (from Pontryagin's principle). Shooting methods convert these into initial value problems:

- Single shooting: Guess the unknown initial values (e.g., the initial costate $\lambda(t_0)$), integrate forward to $t_f$, and check whether the boundary conditions at $t_f$ are satisfied. Adjust the guess and repeat.

- Multiple shooting: Divide $[t_0, t_f]$ into subintervals, guess initial values on each subinterval, integrate each segment independently, and enforce continuity between segments.

Multiple shooting is more robust than single shooting for stiff or sensitive problems because errors don't propagate across the entire time interval. Both approaches use root-finding algorithms (e.g., Newton's method) to iteratively correct the guesses.
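Single shooting can be sketched on a small problem. The setup is an assumption made for illustration: dynamics $\dot{x} = u$ on $[0, 1]$ with $x(0) = 1$, cost $\int_0^1 \tfrac{1}{2}(x^2 + u^2)\,dt$, free terminal state, and Hamiltonian $H = \lambda u - \tfrac{1}{2}(x^2 + u^2)$. The necessary conditions then read $u = \lambda$, $\dot{x} = \lambda$, $\dot{\lambda} = x$, with $\lambda(1) = 0$. The helper names `integrate` and `shoot` are hypothetical, and a secant iteration stands in for Newton's method.

```python
import math

def integrate(lam0, t_f=1.0, n=200):
    """RK4 for x' = lam, lam' = x from x(0) = 1, lam(0) = lam0; returns lam(t_f)."""
    f = lambda x, lam: (lam, x)
    h = t_f / n
    x, lam = 1.0, lam0
    for _ in range(n):
        k1 = f(x, lam)
        k2 = f(x + 0.5 * h * k1[0], lam + 0.5 * h * k1[1])
        k3 = f(x + 0.5 * h * k2[0], lam + 0.5 * h * k2[1])
        k4 = f(x + h * k3[0], lam + h * k3[1])
        x += h * (k1[0] + 2 * k2[0] + 2 * k3[0] + k4[0]) / 6.0
        lam += h * (k1[1] + 2 * k2[1] + 2 * k3[1] + k4[1]) / 6.0
    return lam

def shoot(g0=0.0, g1=-1.0, tol=1e-12, max_iter=20):
    """Secant iteration on the initial costate so that lam(t_f) = 0."""
    r0, r1 = integrate(g0), integrate(g1)
    for _ in range(max_iter):
        if abs(r1) < tol or r1 == r0:
            break
        # Right-hand side is evaluated with the old values before assignment.
        g0, g1, r0 = g1, g1 - r1 * (g1 - g0) / (r1 - r0), r1
        r1 = integrate(g1)
    return g1

lam0 = shoot()
```

For this linear problem the terminal residual depends linearly on the guess, so the secant update lands on the answer $\lambda(0) = -\tanh(1)$ after one correction. Nonlinear problems generally need several iterations and a reasonable starting guess.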

Computational challenges and solutions

Several challenges arise in practice:

- High dimensionality: Large state or control spaces lead to many variables. Sparse matrix techniques and problem decomposition can help.

- Stiffness: When the system has widely separated time scales, standard integrators require very small step sizes. Implicit integration methods (e.g., backward differentiation formulas) handle stiffness more efficiently.

- Ill-conditioning: Small changes in the data can cause large changes in the solution. Regularization techniques and careful scaling of variables improve numerical stability.

- Curse of dimensionality: For dynamic programming, the computational cost grows exponentially with state dimension. Approximate dynamic programming and reinforcement learning methods offer partial remedies for high-dimensional problems.
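The stiffness item in the list above can be illustrated with the scalar test equation $\dot{y} = -k\,y$. The values `k = 1000` and `h = 0.01` are illustrative assumptions, and backward Euler is used as the simplest implicit method (it is the first-order backward differentiation formula).

```python
def explicit_euler(k, h, steps, y0=1.0):
    """Forward Euler for y' = -k*y: y_new = y + h*(-k*y)."""
    y = y0
    for _ in range(steps):
        y += h * (-k * y)
    return y

def implicit_euler(k, h, steps, y0=1.0):
    """Backward Euler for y' = -k*y: solves y_new = y + h*(-k*y_new)."""
    y = y0
    for _ in range(steps):
        y = y / (1.0 + h * k)
    return y

y_explicit = explicit_euler(k=1000.0, h=0.01, steps=20)
y_implicit = implicit_euler(k=1000.0, h=0.01, steps=20)
```

With this step size the explicit update multiplies the solution by $1 - hk = -9$ each step, so `y_explicit` blows up, while the implicit update multiplies by $1/(1 + hk) = 1/11$ and `y_implicit` decays like the true solution. The explicit method would need $h < 2/k$ to remain stable.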