Containerization and orchestration are central to modern cloud computing. They enable efficient, portable, and scalable application deployment. Docker simplifies building and running containers, while Kubernetes automates the deployment, scaling, and operation of containerized applications.

These technologies change how applications are built and run in the cloud. They offer consistency across environments, better resource utilization, and easier management of complex, distributed systems, which makes them a core topic in modern cloud architecture.

Containers vs virtual machines

- Containers are lightweight, isolated environments that package an application and its dependencies, while virtual machines (VMs) emulate an entire operating system and hardware stack

- Containers share the host OS kernel and resources, resulting in lower overhead and faster startup times compared to VMs (seconds vs minutes)

- Multiple containers can run on a single host with little per-instance overhead, allowing for higher density and resource utilization, while each VM carries its own guest OS and therefore a larger footprint

Docker containers

Docker architecture

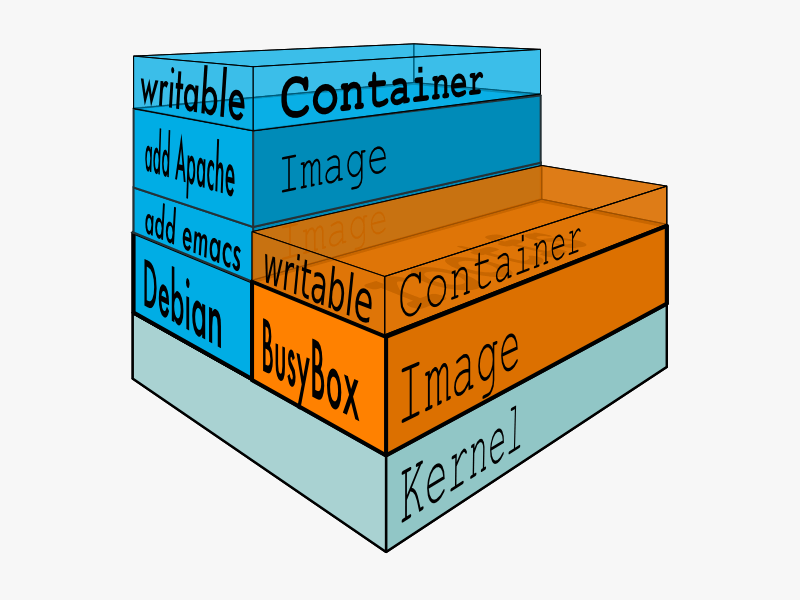

- Docker follows a client-server architecture, with the Docker daemon running on the host and accepting commands from the Docker client

- Images are read-only templates used to create containers, and are stored in registries (Docker Hub, private registries)

- Containers are running instances of images, with their own isolated filesystem, networking, and process space

- Docker uses a layered filesystem (Union FS) to efficiently store and share image layers across containers

Dockerfile syntax

- Dockerfiles are text files that define the steps to build a Docker image, as a sequence of instructions executed in order

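- Key instructions include `FROM` (base image), `RUN` (execute commands at build time), `COPY`/`ADD` (add files), `ENV` (set environment variables), `EXPOSE` (document the ports the container listens on, without publishing them), and `CMD`/`ENTRYPOINT` (default command to run)

- Instructions that change the filesystem (`RUN`, `COPY`, `ADD`) each create a new image layer, while the others only record metadata; cached layers allow efficient rebuilds and sharing of common layers between images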
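Layer caching is why instruction order matters. The Python sketch below is a simplified model, assuming each step's cache key is derived from its parent's key plus the instruction text. Real Docker builds also checksum the files copied by `COPY`/`ADD`; the sketch fakes that with hypothetical `@v1`/`@v2` content tags, and the function names are illustrative only.

```python
import hashlib


def layer_keys(instructions: list[str]) -> list[str]:
    """Derive a chained cache key per build step (simplified model, not Docker's algorithm)."""
    keys = []
    parent = ""
    for instruction in instructions:
        # Each key depends on the parent key, so one change invalidates every later step.
        parent = hashlib.sha256(f"{parent}|{instruction}".encode()).hexdigest()
        keys.append(parent)
    return keys


def cached_step_count(previous: list[str], current: list[str]) -> int:
    """Return how many leading steps of `current` can be reused from a build of `previous`."""
    reused = 0
    for old_key, new_key in zip(layer_keys(previous), layer_keys(current)):
        if old_key != new_key:
            break
        reused += 1
    return reused


# Hypothetical builds: "@v1"/"@v2" stand in for the checksum of the copied source files.
build_v1 = [
    "FROM python:3.12-slim",
    "COPY requirements.txt .",
    "RUN pip install -r requirements.txt",
    "COPY . . @v1",
    'CMD ["python", "app.py"]',
]
build_v2 = build_v1[:3] + ["COPY . . @v2", build_v1[4]]

print(cached_step_count(build_v1, build_v2))  # 3: only the source COPY and later steps rebuild
```

Copying the dependency manifest before the rest of the source is a common pattern for this reason: the dependency installation step stays cached when only application code changes.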

Docker image registries

- Docker registries are servers that store and distribute Docker images, allowing for easy sharing and deployment

- Docker Hub is the default public registry, hosting a large collection of pre-built images (official and community-contributed)

- Private registries (self-hosted or cloud-based) provide controlled access and distribution of proprietary images within an organization
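When an image reference omits the registry, Docker falls back to Docker Hub, and single-name images live under the `library/` namespace. The Python function below is a simplified sketch of those defaults (the name `normalize_image_ref` is hypothetical); it assumes no digest (`@sha256:...`) and only covers common reference shapes.

```python
def normalize_image_ref(ref: str) -> tuple[str, str, str]:
    """Split an image reference into (registry, repository, tag) using Docker Hub defaults."""
    name, tag = ref, "latest"
    # A tag is a ':' suffix in the last path component (an earlier ':' may be a registry port).
    if ":" in ref.rsplit("/", 1)[-1]:
        name, tag = ref.rsplit(":", 1)
    first, _, rest = name.partition("/")
    # Treat the first component as a registry host only if it looks like one.
    if rest and ("." in first or ":" in first or first == "localhost"):
        return first, rest, tag
    # Otherwise the image is on Docker Hub; single-name images sit under "library/".
    repository = name if "/" in name else f"library/{name}"
    return "docker.io", repository, tag


print(normalize_image_ref("nginx"))          # ('docker.io', 'library/nginx', 'latest')
print(normalize_image_ref("myuser/app:v2"))  # ('docker.io', 'myuser/app', 'v2')
print(normalize_image_ref("registry.example.com:5000/team/app"))
```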

Docker networking modes

- Bridge (default): Containers are connected to a virtual bridge network on the host, each with its own private IP address (by default from the 172.17.0.0/16 subnet)

- Host: Containers share the host's network stack directly, so there is no network isolation and a container's ports are bound on the host itself

- None: Containers have no external network connectivity, only a loopback interface

- Overlay: Enables communication between containers across multiple Docker hosts in a swarm, using VXLAN encapsulation
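To put the default bridge subnet in numbers, the Python sketch below uses the standard `ipaddress` module. `BridgeAllocator` is a hypothetical toy that hands out addresses in order and assumes the first usable address goes to the bridge gateway, which matches the usual `docker0` setup (172.17.0.1) but is not Docker's actual IPAM implementation.

```python
import ipaddress


class BridgeAllocator:
    """Toy sequential IP allocator for a bridge subnet (hypothetical, not Docker's IPAM)."""

    def __init__(self, cidr: str = "172.17.0.0/16"):
        self.network = ipaddress.ip_network(cidr)
        # Usable host addresses, excluding the network and broadcast addresses.
        self._hosts = iter(self.network.hosts())
        self.gateway = next(self._hosts)  # assume the bridge itself takes the first one
        self.assigned: dict[str, ipaddress.IPv4Address] = {}

    def allocate(self, container: str) -> ipaddress.IPv4Address:
        """Give the next free address to `container`, failing when the subnet is full."""
        try:
            address = next(self._hosts)
        except StopIteration:
            raise RuntimeError(f"subnet {self.network} is exhausted") from None
        self.assigned[container] = address
        return address


allocator = BridgeAllocator()
print(allocator.network.num_addresses)  # 65536 addresses in a /16
print(allocator.gateway)                # 172.17.0.1
print(allocator.allocate("web"))        # 172.17.0.2
print(allocator.allocate("db"))         # 172.17.0.3
```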

Container orchestration

Kubernetes architecture

- Kubernetes separates a control plane, which manages the cluster, from worker nodes, which run the workloads (older documentation calls these the master and minions)

- The control plane includes the API server (REST API), etcd (distributed key-value store), scheduler (assigns pods to nodes), and controller manager (manages controllers)

- Worker nodes run the kubelet (node agent), kube-proxy (network proxy), and a CRI-compatible container runtime (containerd, CRI-O)

Kubernetes control plane components

- API server: Exposes the Kubernetes API, allowing users and components to interact with the cluster

- etcd: Stores the cluster state and configuration data, providing a consistent and reliable data store

- Scheduler: Assigns pods to nodes based on resource requirements, constraints, and policies

- Controller manager: Runs controllers that monitor and maintain the desired state of the cluster (replication, endpoints, service accounts)
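Every controller follows the same pattern: compare the desired state with the observed state and act on the difference. The Python sketch below shows one reconciliation pass for a replica count, assuming a plain in-memory list of pod names in place of the API server and its watch machinery; `reconcile`, `apply_actions`, and the placeholder pod names are hypothetical.

```python
def reconcile(desired: int, observed: list[str]) -> list[tuple[str, str]]:
    """Plan the actions one pass of a replica controller would take (no real API calls)."""
    missing = desired - len(observed)
    if missing > 0:
        # Too few pods: plan creations. Placeholder names; real controllers generate unique ones.
        return [("create", f"replacement-{i}") for i in range(missing)]
    # Too many pods: plan deletions of the surplus (an empty list when counts already match).
    return [("delete", pod) for pod in observed[desired:]]


def apply_actions(actions: list[tuple[str, str]], observed: list[str]) -> list[str]:
    """Apply planned actions to an in-memory pod list that stands in for cluster state."""
    pods = list(observed)
    for verb, pod in actions:
        if verb == "create":
            pods.append(pod)
        else:
            pods.remove(pod)
    return pods


# Desired state: 3 replicas. Observed state: pod-1 has just crashed.
observed = ["pod-0", "pod-2"]
plan = reconcile(3, observed)
print(plan)                    # [('create', 'replacement-0')]
observed = apply_actions(plan, observed)
print(reconcile(3, observed))  # []: desired and observed state agree
```

Running this pass repeatedly, triggered by watch events or a resync timer, is what lets controllers self-heal without being told which pod failed.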

Kubernetes worker node components

- Kubelet: The primary node agent, responsible for communicating with the API server, managing pods and containers, and reporting node status

- Kube-proxy: Maintains network rules and performs connection forwarding, enabling communication between pods and services

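- Container runtime: The software responsible for running containers through the Container Runtime Interface (containerd, CRI-O); Docker Engine needs the cri-dockerd adapter since Kubernetes 1.24 removed dockershim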

Kubernetes objects

- Pods: The smallest deployable unit in Kubernetes, consisting of one or more containers that share network and storage resources

- Services: Provide stable network endpoints for accessing pods, abstracting away the underlying pod IP addresses

- Deployments: Manage the desired state of replica sets and pods, enabling rolling updates and rollbacks

- StatefulSets: Manage stateful applications, ensuring stable network identities and persistent storage for pods

- ConfigMaps and Secrets: Store configuration data and sensitive information (credentials, keys) separately from pod definitions

Kubernetes services

- ClusterIP (default): Exposes the service on an internal cluster IP, accessible only within the cluster

- NodePort: Exposes the service on each node's IP at a static port (by default from the 30000-32767 range), allowing external access to the service

- LoadBalancer: Provisions an external load balancer (cloud provider-specific) to distribute traffic to the service

- ExternalName: Maps the service to an external DNS name, acting as an alias for an external service

Kubernetes deployments

- Deployments provide declarative updates for pods and replica sets, allowing for rolling updates and rollbacks

- The deployment controller monitors the desired state and current state, making adjustments to maintain the desired number of replicas

- Rolling updates gradually replace old pods with new ones, bounded by the configurable `maxUnavailable` and `maxSurge` settings; combined with readiness probes, this allows zero-downtime deployments

- Rollbacks revert a deployment to a previous revision, useful for quickly recovering from faulty updates
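The surge and unavailability settings bound how far a rollout may stray from the desired replica count. Kubernetes rounds a percentage `maxSurge` up and a percentage `maxUnavailable` down, both default to 25%, and if both resolve to zero the deployment controller allows one unavailable pod. The helper below is a hypothetical Python sketch of that arithmetic, not code taken from Kubernetes.

```python
def rollout_bounds(replicas: int, max_surge="25%", max_unavailable="25%") -> tuple[int, int]:
    """Return (maximum total pods, minimum available pods) during a rolling update."""

    def resolve(value, round_up: bool) -> int:
        # Accept an absolute count (int) or a percentage string such as "25%".
        if isinstance(value, str) and value.endswith("%"):
            scaled = replicas * int(value[:-1])
            # Integer ceiling or floor of scaled / 100.
            return -(-scaled // 100) if round_up else scaled // 100
        return int(value)

    surge = resolve(max_surge, round_up=True)               # maxSurge rounds up
    unavailable = resolve(max_unavailable, round_up=False)  # maxUnavailable rounds down
    if surge == 0 and unavailable == 0:
        # Rounding can zero out both; the controller then permits one unavailable pod.
        unavailable = 1
    return replicas + surge, replicas - unavailable


print(rollout_bounds(10))      # (13, 8): at most 13 pods, at least 8 available
print(rollout_bounds(3))       # (4, 3): 25% of 3 is 0.75, so surge 1 and unavailable 0
print(rollout_bounds(4, 1, 0)) # (5, 4)
```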

Kubernetes stateful sets

- StatefulSets manage stateful applications that require stable network identities and persistent storage

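- Each pod has a unique ordinal index and a stable name (`<statefulset-name>-<ordinal>`); through the required headless Service it also gets a stable DNS name (`<pod-name>.<service-name>.<namespace>.svc.cluster.local`, assuming the default cluster domain)

- With the default `OrderedReady` pod management policy, pods are created in order (0, 1, 2, ...), each waiting for the previous one to be Running and Ready, and are removed in reverse order when scaling down

- Through `volumeClaimTemplates`, each pod gets its own PersistentVolumeClaim (dynamically provisioned when a StorageClass supports it); the claim is kept when the pod restarts or is rescheduled, so the data stays with the pod's identity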
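StatefulSet identities follow documented conventions: pods are named `<set>-<ordinal>`, each claim from a volume claim template is named `<template>-<pod>`, and with the default ordered policy scale-down proceeds from the highest ordinal. The Python helpers below sketch those rules, assuming a hypothetical StatefulSet `web` behind a headless Service `nginx` in namespace `default`, a claim template named `www`, and the default `cluster.local` domain.

```python
def statefulset_identities(name: str, service: str, namespace: str,
                           claim_template: str, replicas: int,
                           domain: str = "cluster.local") -> list[tuple[str, str, str]]:
    """List (pod name, stable DNS name, PVC name) per ordinal, in creation order."""
    identities = []
    for ordinal in range(replicas):
        pod = f"{name}-{ordinal}"
        dns = f"{pod}.{service}.{namespace}.svc.{domain}"
        pvc = f"{claim_template}-{pod}"
        identities.append((pod, dns, pvc))
    return identities


def termination_order(name: str, replicas: int) -> list[str]:
    """Scale-down removes pods from the highest ordinal down (OrderedReady policy)."""
    return [f"{name}-{ordinal}" for ordinal in reversed(range(replicas))]


for pod, dns, pvc in statefulset_identities("web", "nginx", "default", "www", 3):
    print(pod, dns, pvc)
print(termination_order("web", 3))  # ['web-2', 'web-1', 'web-0']
```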

Kubernetes config maps and secrets

- ConfigMaps store configuration data as key-value pairs, allowing for the separation of configuration from pod definitions

- Secrets store sensitive information (credentials, tokens, keys) base64-encoded; base64 is an encoding, not encryption, so encryption at rest in etcd and RBAC restrictions must be configured separately

- ConfigMaps and Secrets can be mounted as files in a pod's filesystem or exposed as environment variables, providing a flexible way to inject configuration without rebuilding images
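Base64 offers no confidentiality. The Python snippet below uses only the standard `base64` module and a sample username to show the round trip that anyone with read access to a Secret object can perform.

```python
import base64

# Sample credential, encoded the way it would appear under a Secret's `data` field.
plaintext = "admin"
encoded = base64.b64encode(plaintext.encode("utf-8")).decode("ascii")
print(encoded)  # YWRtaW4=

# Decoding needs no key at all: base64 is an encoding, not encryption.
decoded = base64.b64decode(encoded).decode("utf-8")
print(decoded)  # admin
```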

Kubernetes persistent volumes

- Persistent Volumes (PVs) are storage resources provisioned by administrators or dynamically provisioned using Storage Classes

- Persistent Volume Claims (PVCs) are requests for storage by users, specifying the required size, access mode, and storage class

- Kubernetes binds PVCs to PVs based on the requested specifications, allowing pods to access persistent storage

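- Access modes include ReadWriteOnce (RWO, read-write by a single node), ReadOnlyMany (ROX, read-only by many nodes), ReadWriteMany (RWX, read-write by many nodes), and ReadWriteOncePod (RWOP, read-write by a single pod), determining how the volume can be mounted and accessed; which modes are available depends on the storage backend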
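Binding can be pictured as a matching problem. The Python sketch below assumes simplified, hypothetical `PersistentVolume`/`PersistentVolumeClaim` records with sizes in GiB, and picks the smallest unbound volume whose storage class matches, whose capacity is large enough, and whose access modes include every requested mode. It approximates the binder's behaviour; the real controller also considers label selectors, volume modes, and node affinity.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PersistentVolume:
    name: str
    capacity_gi: int
    access_modes: frozenset[str]
    storage_class: str
    bound: bool = False


@dataclass
class PersistentVolumeClaim:
    name: str
    request_gi: int
    access_modes: frozenset[str]
    storage_class: str


def find_match(claim: PersistentVolumeClaim,
               volumes: list[PersistentVolume]) -> Optional[PersistentVolume]:
    """Return the smallest unbound PV satisfying the claim, or None (claim stays Pending)."""
    candidates = [
        pv for pv in volumes
        if not pv.bound
        and pv.storage_class == claim.storage_class
        and pv.capacity_gi >= claim.request_gi
        and claim.access_modes <= pv.access_modes  # PV must support every requested mode
    ]
    return min(candidates, key=lambda pv: pv.capacity_gi, default=None)


RWO, RWX = frozenset({"ReadWriteOnce"}), frozenset({"ReadWriteMany"})
volumes = [
    PersistentVolume("pv-small", 5, RWO, "standard"),
    PersistentVolume("pv-large", 50, RWO, "standard"),
    PersistentVolume("pv-shared", 20, RWX, "nfs"),
]
print(find_match(PersistentVolumeClaim("data", 10, RWO, "standard"), volumes).name)  # pv-large
```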

Kubernetes namespaces

- Namespaces provide a way to divide cluster resources and create virtual clusters within a physical cluster

- Resource names (pods, services, deployments) are scoped to a namespace, and access control, quotas, and network policies can be applied per namespace, which supports multi-tenancy; namespaces alone do not block network traffic between pods in different namespaces

- The `default` namespace is used when no namespace is specified, while the `kube-system` namespace is reserved for Kubernetes system components

- Resource quotas (ResourceQuota) and default limits (LimitRange) can be applied at the namespace level, controlling the total resource consumption within a namespace

Kubernetes resource limits

- Resource requests specify the amount of CPU and memory reserved for a container; the scheduler uses them for placement decisions

- Resource limits specify the maximum amount of CPU and memory a container can consume, preventing resource starvation and overload

- The Kubernetes scheduler ensures that the total resource requests of all pods on a node do not exceed the node's allocatable capacity

- If a container exceeds its CPU limit it is throttled; if it exceeds its memory limit it is OOM-killed and restarted according to the pod's restart policy
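Requests are written as Kubernetes quantities such as `500m` CPU (half a core) or `256Mi` memory. The Python sketch below parses a small subset of that syntax (the `m` suffix plus a few binary and decimal memory suffixes) and applies the scheduler's basic resource check: a pod fits if existing requests plus its own stay within the node's allocatable capacity. The function names and node figures are hypothetical, and the real scheduler runs many more filters before scoring the remaining nodes.

```python
from fractions import Fraction

# Subset of Kubernetes quantity suffixes: binary (Ki, Mi, Gi), decimal (k, M, G), milli (m).
_SUFFIXES = {
    "Ki": 2**10, "Mi": 2**20, "Gi": 2**30,
    "k": 10**3, "M": 10**6, "G": 10**9,
    "m": Fraction(1, 1000),
}


def parse_quantity(text: str) -> Fraction:
    """Convert a quantity such as '500m', '2', or '256Mi' to an exact number."""
    for suffix in sorted(_SUFFIXES, key=len, reverse=True):  # try 'Mi' before 'M'
        if text.endswith(suffix):
            return Fraction(text[: -len(suffix)]) * _SUFFIXES[suffix]
    return Fraction(text)


def fits(allocatable: dict[str, str], scheduled: list[dict[str, str]],
         pod: dict[str, str]) -> bool:
    """True if the node can accept `pod` given the requests of pods already placed on it."""
    for resource, capacity in allocatable.items():
        used = sum(parse_quantity(p.get(resource, "0")) for p in scheduled)
        needed = parse_quantity(pod.get(resource, "0"))
        if used + needed > parse_quantity(capacity):
            return False
    return True


node = {"cpu": "4", "memory": "8Gi"}
running = [{"cpu": "1500m", "memory": "3Gi"}, {"cpu": "2", "memory": "2Gi"}]
print(fits(node, running, {"cpu": "500m", "memory": "1Gi"}))  # True: exactly 4 CPUs requested
print(fits(node, running, {"cpu": "1", "memory": "1Gi"}))     # False: 4.5 CPUs > 4
```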

Kubernetes autoscaling

- Horizontal Pod Autoscaler (HPA) automatically adjusts the number of replicas of a Deployment or other scalable resource (such as a StatefulSet) based on observed CPU utilization or custom metrics

- Cluster Autoscaler adds nodes when pods cannot be scheduled for lack of resources and removes nodes that stay underutilized

- Vertical Pod Autoscaler (VPA), installed as a separate add-on, recommends or automatically adjusts the resource requests and limits of containers based on historical usage data

- Autoscaling allows for efficient resource utilization and cost optimization, ensuring that the cluster can handle varying workloads
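The HPA's core calculation is documented as `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`, skipped when the ratio is within a tolerance (0.1 by default). The Python function below sketches that formula for a single metric with min/max clamping; it is a simplification that leaves out stabilization windows, pod readiness handling, and multi-metric logic, and its parameter names are illustrative.

```python
import math


def desired_replicas(current_replicas: int, current_value: float, target_value: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Apply the HPA scaling formula to one metric and clamp to the configured bounds."""
    ratio = current_value / target_value
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to the target: leave the replica count alone
    proposed = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, proposed))


print(desired_replicas(4, current_value=90, target_value=60))   # 6: 90% CPU against a 60% target
print(desired_replicas(4, current_value=62, target_value=60))   # 4: within the 10% tolerance
print(desired_replicas(4, current_value=20, target_value=60))   # 2: scale in
print(desired_replicas(8, current_value=120, target_value=60))  # 10: capped by max_replicas
```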

Containerization benefits

Portability and consistency

- Containers package an application and its dependencies into a single, portable unit that can run consistently across different environments (dev, test, prod)

- Container images are immutable and can be referenced by tag or pinned by digest (a tag can be moved to a different image, a digest cannot), ensuring that the same code and configuration are used throughout the development and deployment lifecycle

- Containers eliminate the "it works on my machine" problem by providing a consistent runtime environment, regardless of the underlying infrastructure

Efficiency and resource utilization

- Containers are lightweight and have minimal overhead, allowing for higher density and better resource utilization compared to VMs

- Containers share the host OS kernel and resources, enabling faster startup times (seconds vs minutes for VMs) and lower memory footprint

- Containers can be easily packed onto a single host, maximizing the use of available compute resources and reducing infrastructure costs

Scalability and elasticity

- Containers can be quickly scaled up or down based on demand, allowing for elastic and responsive application architectures

- Container orchestration platforms (Kubernetes) enable automated scaling, self-healing, and load balancing of containerized applications

- Containers' lightweight nature and fast startup times make them well-suited for microservices architectures and serverless computing

Isolation and security

- Containers provide process-level isolation, ensuring that each container runs in its own isolated environment with its own filesystem, network, and process space

- Container isolation limits the impact of a compromised application and reduces resource contention between applications running on the same host; because containers share the host kernel, however, this boundary is weaker than a VM's

- Security features like Linux namespaces, cgroups, and SELinux/AppArmor profiles enforce boundaries around each container and limit the impact of container breakouts

Container networking

Container-to-container communication

- Containers on the same host can communicate over the default virtual bridge network (docker0), each with its own private IP address

- On user-defined networks, Docker's embedded DNS server lets containers resolve each other by container name, enabling service discovery on that host; the default bridge network does not provide this name resolution

- User-defined bridge networks also provide better isolation and control over the network configuration

Container-to-host communication

- Containers can access services running on the host by using the host's IP address and the exposed port numbers

- Host-to-container communication is enabled by publishing container ports on host ports with the `-p` flag (a specific mapping) or the `-P` flag (all exposed ports, mapped to ephemeral host ports) of `docker run`

- Network traffic between the host and containers is managed by the host's network stack and iptables rules
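The `-p` flag accepts several shapes, such as `8080:80`, `127.0.0.1:8080:80`, `127.0.0.1::53/udp`, or a bare container port. The hypothetical Python parser below sketches how those fields break down; it assumes IPv4 host addresses only and ignores port ranges, both of which the Docker CLI also supports.

```python
from typing import NamedTuple, Optional


class PortMapping(NamedTuple):
    host_ip: str              # "" means all interfaces
    host_port: Optional[int]  # None means Docker picks an ephemeral host port
    container_port: int
    protocol: str


def parse_publish(spec: str) -> PortMapping:
    """Parse a -p value of the form [[host_ip:]host_port:]container_port[/protocol]."""
    ports, _, protocol = spec.partition("/")
    parts = ports.split(":")
    if len(parts) == 1:
        host_ip, host_port, container_port = "", "", parts[0]
    elif len(parts) == 2:
        host_ip = ""
        host_port, container_port = parts
    elif len(parts) == 3:
        host_ip, host_port, container_port = parts
    else:
        raise ValueError(f"unsupported port spec: {spec!r}")
    return PortMapping(
        host_ip=host_ip,
        host_port=int(host_port) if host_port else None,
        container_port=int(container_port),
        protocol=protocol or "tcp",
    )


print(parse_publish("8080:80"))
# PortMapping(host_ip='', host_port=8080, container_port=80, protocol='tcp')
print(parse_publish("127.0.0.1::53/udp"))
# PortMapping(host_ip='127.0.0.1', host_port=None, container_port=53, protocol='udp')
```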

Container-to-external communication

- Containers can access external services using the host's network stack and DNS resolution

- Inbound traffic to containers is enabled by mapping container ports to host ports and configuring the host's firewall and routing rules

- Load balancers (software or hardware) can distribute incoming traffic across multiple container instances, providing high availability and scalability

Container storage

Ephemeral storage

- By default, a container writes to a thin writable layer on top of its read-only image layers; the storage driver (typically overlay2, or aufs on older systems) manages this layer in the host's filesystem

- Ephemeral storage is tied to the lifecycle of the container, and data is lost when the container is removed or recreated

- Ephemeral storage is suitable for temporary data, caches, and stateless applications that don't require data persistence

Persistent storage

- Persistent storage allows containers to store data that survives container restarts and removals

- Docker volumes use the built-in `local` driver by default (which can also mount NFS shares), while volume plugins add other backends such as iSCSI or cloud storage; containers mount a volume as a directory in their filesystem

- Kubernetes uses Persistent Volumes (PVs) and Persistent Volume Claims (PVCs) to provide persistent storage to pods, with support for various storage backends (local, NFS, cloud storage)

Storage orchestration

- Container orchestration platforms (Kubernetes) provide storage orchestration features that automate the management of persistent storage

- Storage Classes define the types of storage available in the cluster (fast SSD, slow HDD) and the provisioning parameters (reclaim policy, mount options)

- Dynamic provisioning allows for the automatic creation of Persistent Volumes based on the requested Storage Class and size

- StatefulSets use Persistent Volume Claims to provide stable storage to stateful applications, ensuring that each pod has its own unique storage

Container monitoring and logging

Container metrics

- Container metrics provide insights into the resource usage and performance of containerized applications

- Key metrics include CPU usage, memory usage, network I/O, and disk I/O, collected at the container and host level

- Monitoring tools (Prometheus, Datadog, Sysdig) collect these metrics from sources such as the container runtime (Docker, containerd), cAdvisor (built into the kubelet), and node-level exporters, and expose them for analysis and alerting
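CPU usage is reported as cumulative counters, so tools compare two samples to get a percentage. The Python sketch below follows the calculation the `docker stats` command uses: the container's CPU-time delta divided by the host's system CPU-time delta, multiplied by the number of online CPUs and by 100. The `CpuSample` fields are simplified assumptions rather than the exact Docker API schema.

```python
from dataclasses import dataclass


@dataclass
class CpuSample:
    container_ns: int  # cumulative CPU time used by the container (nanoseconds)
    system_ns: int     # cumulative CPU time of the whole host (nanoseconds)
    online_cpus: int


def cpu_percent(previous: CpuSample, current: CpuSample) -> float:
    """CPU usage between two samples; a fully busy core counts as 100%."""
    cpu_delta = current.container_ns - previous.container_ns
    system_delta = current.system_ns - previous.system_ns
    if cpu_delta <= 0 or system_delta <= 0:
        return 0.0
    return cpu_delta / system_delta * current.online_cpus * 100.0


before = CpuSample(container_ns=1_000_000_000, system_ns=40_000_000_000, online_cpus=4)
after = CpuSample(container_ns=1_200_000_000, system_ns=44_000_000_000, online_cpus=4)
print(cpu_percent(before, after))  # 20.0
```

On a 4-CPU host this scale tops out at 400%, which is why per-container figures above 100% are normal for multi-threaded workloads.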

Container log aggregation

- Container logs capture the stdout and stderr output of containerized applications, providing valuable debugging and troubleshooting information

- Log aggregation tools (Fluentd, Logstash, Splunk) collect logs from multiple containers and hosts, centralize them for storage and analysis

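- Kubernetes itself does not aggregate logs: the kubelet keeps each container's logs on its node, `kubectl logs` retrieves them through the API server, and cluster-wide aggregation relies on integrating external logging solutions (often agents such as Fluentd or Fluent Bit running as a DaemonSet)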
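With Docker's default `json-file` logging driver, each line an application writes to stdout or stderr is stored as one JSON object with `log`, `stream`, and `time` fields. The Python sketch below parses such lines and groups messages by stream, the kind of step a log shipper performs before forwarding; the sample lines and the `group_by_stream` helper are made up for illustration.

```python
import json
from collections import defaultdict

# Made-up lines in the json-file driver's format (one JSON object per line).
raw_lines = [
    '{"log":"server listening on :8080\\n","stream":"stdout","time":"2024-01-01T12:00:00.000000000Z"}',
    '{"log":"failed to reach db\\n","stream":"stderr","time":"2024-01-01T12:00:01.500000000Z"}',
    '{"log":"GET /health 200\\n","stream":"stdout","time":"2024-01-01T12:00:02.000000000Z"}',
]


def group_by_stream(lines: list[str]) -> dict[str, list[tuple[str, str]]]:
    """Parse json-file log lines and collect (timestamp, message) pairs per stream."""
    grouped = defaultdict(list)
    for line in lines:
        entry = json.loads(line)
        # Strip the trailing newline the driver keeps from the original output.
        grouped[entry["stream"]].append((entry["time"], entry["log"].rstrip("\n")))
    return dict(grouped)


for stream, entries in group_by_stream(raw_lines).items():
    print(stream, entries)
```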

Monitoring tools

- Prometheus is a popular open-source monitoring solution that collects metrics from containers and hosts, stores them in a time-series database, and provides a powerful query language and alerting capabilities

- Grafana is often used in conjunction with Prometheus to create rich dashboards and visualizations of container metrics

- Commercial monitoring solutions (Datadog, New Relic, Dynatrace) offer container monitoring as part of their cloud-native observability platforms, with advanced features like AI-powered anomaly detection and distributed tracing

Container security

Container isolation

- Containers provide process-level isolation using Linux namespaces, which create separate filesystem, network, and process spaces for each container

- Linux cgroups (control groups) limit and account for the resource usage of containers, preventing noisy neighbor issues and resource contention

- Secure computing mode (seccomp) filters restrict the system calls available to containers, reducing the attack surface and mitigating the impact of container breakouts

Image vulnerability scanning

- Container images may contain software vulnerabilities that can be exploited by attackers, making image scanning an essential part of the container security workflow

- Image scanning tools (Clair, Anchore, Trivy) analyze the packages and libraries in an image and compare them against vulnerability databases (CVE, NVD)

- Image scanning can be integrated into the CI/CD pipeline, ensuring that only secure and compliant images are deployed to production
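In a pipeline, this integration usually amounts to a gate that fails the build when the scanner reports findings at or above a chosen severity; Trivy, for example, offers `--severity` and `--exit-code` options for this. The Python sketch below shows the gating logic independently of any tool, using hypothetical finding records rather than a real scanner's output format.

```python
SEVERITY_ORDER = ["LOW", "MEDIUM", "HIGH", "CRITICAL"]


def blocking_findings(findings: list[dict], threshold: str = "HIGH") -> list[dict]:
    """Return the findings whose severity is at or above `threshold`."""
    minimum = SEVERITY_ORDER.index(threshold)
    return [f for f in findings if SEVERITY_ORDER.index(f["severity"]) >= minimum]


def gate(findings: list[dict], threshold: str = "HIGH") -> int:
    """Report blocking findings and return a process exit code (0 = deploy, 1 = block)."""
    blocked = blocking_findings(findings, threshold)
    for f in blocked:
        print(f"blocking: {f['package']} {f['id']} ({f['severity']})")
    return 1 if blocked else 0


# Hypothetical scan output; package names and IDs are placeholders, not real advisories.
findings = [
    {"package": "example-lib", "id": "VULN-A", "severity": "MEDIUM"},
    {"package": "example-tls", "id": "VULN-B", "severity": "CRITICAL"},
]
exit_code = gate(findings)  # a CI script would pass this to sys.exit()
print(exit_code)            # 1
```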

Runtime security monitoring

- Runtime security monitoring involves observing the behavior of running containers and detecting suspicious or malicious activities

- Security tools (Falco, Sysdig Secure, Aqua Security) use kernel-level system call monitoring and predefined rules to identify anomalous container behavior (unauthorized file access, network connections, process execution)

- Runtime security monitoring can help detect and respond to container breakouts, privilege escalations, and other security incidents in real time

Security best practices

- Use minimal and trusted base images (Alpine, distroless) to reduce the attack surface and minimize the risk of vulnerabilities

- Avoid running containers as root and use user namespaces to map container users to non-privileged host users

- Regularly update and patch container images to address known vulnerabilities and security issues

- Use network policies (Kubernetes NetworkPolicy) to restrict ingress and egress traffic between containers and limit the blast radius of security incidents

- Implement role-based access control (RBAC) and least privilege principles to control access to container management and orchestration APIs
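NetworkPolicy semantics are easy to get backwards: a pod that no policy selects accepts all traffic, and once any policy selects it for ingress, only traffic matching at least one of those policies' rules is allowed. The Python sketch below models just that rule for ingress, with policies reduced to hypothetical dictionaries of pod labels; real policies also match namespaces, IP blocks, and ports, and enforcement depends on the cluster's network plugin.

```python
def matches(selector: dict[str, str], labels: dict[str, str]) -> bool:
    """A label selector matches when every key/value pair is present (empty matches all)."""
    return all(labels.get(key) == value for key, value in selector.items())


def ingress_allowed(policies: list[dict], source_labels: dict[str, str],
                    dest_labels: dict[str, str]) -> bool:
    """Decide whether traffic from the source pod may enter the destination pod."""
    selecting = [p for p in policies if matches(p["pod_selector"], dest_labels)]
    if not selecting:
        return True  # no policy selects the destination: all ingress is allowed
    # Allowed if any selecting policy has a rule whose selector matches the source.
    return any(
        matches(rule, source_labels)
        for policy in selecting
        for rule in policy["allow_from"]
    )


# Hypothetical policy: only pods labelled app=frontend may reach pods labelled app=api.
policies = [{"pod_selector": {"app": "api"}, "allow_from": [{"app": "frontend"}]}]

print(ingress_allowed(policies, {"app": "frontend"}, {"app": "api"}))  # True
print(ingress_allowed(policies, {"app": "batch"}, {"app": "api"}))     # False
print(ingress_allowed(policies, {"app": "batch"}, {"app": "cache"}))   # True: cache is unselected
```

A policy that selects pods but lists no allowed sources (an empty `allow_from` here) denies all ingress to them, which is the usual starting point for a default-deny setup.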

Containerization in cloud computing

Containers as a service (CaaS)

- CaaS platforms provide managed container orchestration and runtime services, abstracting away the complexity of deploying and managing containerized applications

- Examples of CaaS platforms include Amazon ECS, Azure Container Instances, Google Cloud Run, and DigitalOcean Kubernetes

- CaaS platforms typically offer integrations with other cloud services (load balancers, storage, monitoring) and support for hybrid and multi-cloud deployments

Serverless containers

- Serverless containers extend the serverless computing model to containerized applications, allowing developers to run containers without managing the underlying infrastructure

- Serverless container platforms (AWS Fargate, Azure Container Instances, Google Cloud Run) provision the compute for each container or task on demand, so there are no servers or node pools to manage; request-driven platforms such as Cloud Run also scale instances with incoming traffic

- Serverless containers are well-suited for event-driven and microservices architectures, providing a cost-effective and scalable way to run containerized workloads

Hybrid and multi-cloud containerization

- Hybrid cloud containerization involves running containerized applications across on-premises and cloud environments, enabling workload portability and flexibility

- Multi-cloud containerization involves running containerized applications across multiple cloud providers, avoiding vendor lock-in and leveraging the best services from each provider

- Container orchestration platforms (Kubernetes) provide a consistent and standardized way to deploy and manage containerized applications across hybrid and multi-cloud environments

- Cloud-agnostic tools and platforms (Terraform, Helm, Istio) facilitate the deployment and management of containerized applications in hybrid and multi-cloud scenarios