Probability axioms form the foundation of Bayesian statistics, providing a framework for quantifying uncertainty. These fundamental rules ensure logical consistency in probability calculations and are essential for developing valid probabilistic models in Bayesian analysis.

The axioms of non-negativity, unity, and additivity establish the basic properties of probability. Understanding these axioms is crucial for grasping Bayesian inference methods, interpreting results, and applying probability theory to real-world problems in a Bayesian context.

Foundations of probability

- Probability theory forms the backbone of Bayesian statistics, providing a mathematical framework for quantifying uncertainty

- In Bayesian analysis, probability represents a degree of belief about events or hypotheses, which can be updated as new evidence becomes available

- Understanding probability foundations is crucial for grasping Bayesian inference methods and interpreting results in a Bayesian context

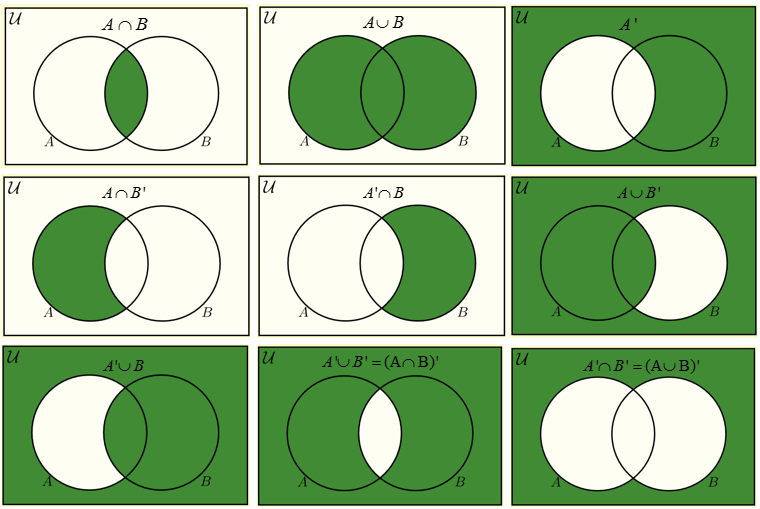

Set theory basics

- Sets represent collections of distinct objects or elements

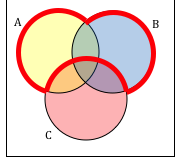

- Set operations include union (∪), intersection (∩), and complement (A^c)

- Venn diagrams visually represent relationships between sets

- Power set contains all possible subsets of a given set

- Empty set (∅) contains no elements and is a subset of all sets
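
The set operations above map directly onto Python's built-in `set` type. This is a minimal sketch. It uses an arbitrary six-element universe `omega`, two example sets, and a hypothetical `power_set` helper built from `itertools`:

```python
from itertools import chain, combinations

# An arbitrary universe and two example sets, chosen only for illustration
omega = {1, 2, 3, 4, 5, 6}
A = {2, 4, 6}
B = {4, 5, 6}

union = A | B                # A ∪ B
intersection = A & B         # A ∩ B
complement_A = omega - A     # A^c, taken relative to the universe omega


def power_set(s):
    """Return every subset of s (including the empty set and s itself) as frozensets."""
    items = list(s)
    subsets = chain.from_iterable(combinations(items, r) for r in range(len(items) + 1))
    return [frozenset(c) for c in subsets]


subsets = power_set(omega)
print(sorted(union), sorted(intersection), sorted(complement_A))  # [2, 4, 5, 6] [4, 6] [1, 3, 5]
print(len(subsets))                                               # 64, i.e. 2**6
print(all(frozenset() <= s for s in subsets))                     # True: ∅ is a subset of every set
```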

Sample space definition

- Sample space (Ω) encompasses all possible outcomes of a random experiment

- Can be finite (coin toss), countably infinite (number of coin tosses until heads), or uncountably infinite (time until a radioactive particle decays)

- A properly defined sample space is crucial for accurate probability calculations

- Elements of sample space must be mutually exclusive and collectively exhaustive

- Sample space for rolling a die: Ω = {1, 2, 3, 4, 5, 6}

Events and outcomes

- Outcomes are individual elements of the sample space

- Events are subsets of the sample space, representing collections of outcomes

- Simple events contain a single outcome, while compound events contain multiple outcomes

- Complement of an event A (A^c) includes all outcomes not in A

- Events can be combined using set operations (union, intersection) to form new events
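
This sketch represents events as subsets. It stores the die sample space as a `frozenset` and assigns probabilities by counting, which assumes that all six outcomes are equally likely. The helper name `prob` is hypothetical, and `Fraction` keeps the arithmetic exact:

```python
from fractions import Fraction

# Sample space for one roll of a die; assumes every face is equally likely
omega = frozenset({1, 2, 3, 4, 5, 6})


def prob(event):
    """Classical probability |event| / |omega|, valid only for equally likely outcomes."""
    if not event <= omega:
        raise ValueError("an event must be a subset of the sample space")
    return Fraction(len(event), len(omega))


six = frozenset({6})           # simple event: a single outcome
even = frozenset({2, 4, 6})    # compound event: several outcomes
not_even = omega - even        # complement of 'even'
even_or_six = even | six       # a union of events is again an event

print(prob(six), prob(even), prob(not_even), prob(even_or_six))  # 1/6 1/2 1/2 1/2
```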

Probability axioms

- Probability axioms provide a formal mathematical foundation for probability theory

- These axioms, proposed by Kolmogorov, ensure consistency and logical coherence in probability calculations

- Understanding these axioms is essential for developing valid probabilistic models in Bayesian statistics

Non-negativity axiom

- States that the probability of any event must be non-negative

- Mathematically expressed as $P(A) \geq 0$ for any event A

- Ensures probabilities are always positive or zero, never negative

- Reflects the intuitive notion that probabilities represent relative frequencies or degrees of belief

- Applies to both frequentist and Bayesian interpretations of probability

Unity axiom

- Specifies that the probability of the entire sample space is equal to 1

- Mathematically expressed as $P(\Omega) = 1$, where Ω is the sample space

- Ensures that all possible outcomes are accounted for

- Together with additivity, implies that probabilities are normalized: the probabilities of any collection of mutually exclusive and collectively exhaustive events sum to 1

- Crucial for proper scaling of probability distributions in Bayesian analysis

Additivity axiom

- States that for mutually exclusive events, the probability of their union equals the sum of their individual probabilities

- Mathematically expressed as $P(A \cup B) = P(A) + P(B)$ for mutually exclusive events A and B (that is, when $A \cap B = \emptyset$)

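- Kolmogorov's axiom is stated for countably infinite sequences of mutually exclusive events, $P\left(\bigcup_{i=1}^{\infty} A_i\right) = \sum_{i=1}^{\infty} P(A_i)$ (countable additivity); the two-event rule is a special case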

- Allows for calculation of probabilities for complex events by breaking them down into simpler components

- Forms the basis for many probability calculations in Bayesian inference
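
The three axioms can be checked mechanically on a small finite space. This sketch assumes that probabilities are supplied as a dictionary `pmf` mapping outcomes to exact `Fraction` values (a hypothetical input format), and it tests every event in the power set. Because P is built by summing point masses, additivity holds by construction here. For a bad assignment, the checks that actually fail are non-negativity and unity:

```python
from fractions import Fraction
from itertools import chain, combinations


def all_events(omega):
    """Return every subset (event) of a finite sample space."""
    items = list(omega)
    return [frozenset(c) for c in chain.from_iterable(
        combinations(items, r) for r in range(len(items) + 1))]


def satisfies_axioms(pmf):
    """Check non-negativity, unity, and additivity for P(E) = sum of pmf[w] over w in E."""
    omega = frozenset(pmf)

    def P(event):
        return sum((pmf[w] for w in event), Fraction(0))

    events = all_events(omega)
    non_negative = all(P(E) >= 0 for E in events)
    unity = P(omega) == 1
    # Pairwise additivity for disjoint events. On a finite space, all but finitely
    # many events in a disjoint sequence are empty, so this covers the countable case.
    additive = all(P(A | B) == P(A) + P(B)
                   for A in events for B in events if not (A & B))
    return non_negative and unity and additive


fair_die = {face: Fraction(1, 6) for face in range(1, 7)}
too_big = {"H": Fraction(3, 4), "T": Fraction(1, 2)}     # total 5/4: violates unity
negative = {"H": Fraction(3, 2), "T": Fraction(-1, 2)}   # total 1, but a negative mass

print(satisfies_axioms(fair_die), satisfies_axioms(too_big), satisfies_axioms(negative))
# True False False
```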

Properties of probability

- Probability properties derive from the fundamental axioms and provide useful tools for calculations

- These properties play a crucial role in simplifying complex probability problems in Bayesian analysis

- Understanding these properties helps in developing intuition about probabilistic reasoning

Complement rule

- States that the probability of an event's complement equals 1 minus the probability of the event

- Mathematically expressed as $P(A^c) = 1 - P(A)$

- Useful for calculating probabilities of events that are difficult to compute directly

- Applies to both simple and compound events

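- Frequently used in Bayesian hypothesis testing to obtain the posterior probability of an alternative hypothesis from that of its complement, e.g., P(H1 | data) = 1 - P(H0 | data) when H1 is the complement of H0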
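
A standard use of the complement rule is an "at least one" event. This sketch computes the probability of at least one six in four rolls of a fair die (an illustrative problem chosen here). It then confirms the result by enumerating all 6^4 equally likely sequences:

```python
from fractions import Fraction
from itertools import product

n_rolls = 4
p_no_six_one_roll = Fraction(5, 6)

# Complement rule: P(at least one six) = 1 - P(no six on any roll)
p_at_least_one_six = 1 - p_no_six_one_roll ** n_rolls

# Brute-force check over all 6**4 equally likely roll sequences
sequences = list(product(range(1, 7), repeat=n_rolls))
hits = sum(1 for seq in sequences if 6 in seq)
brute_force = Fraction(hits, len(sequences))

print(p_at_least_one_six, brute_force)  # 671/1296 671/1296
```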

Inclusion-exclusion principle

- Provides a method for calculating the probability of the union of multiple events

- For two events: $P(A \cup B) = P(A) + P(B) - P(A \cap B)$

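- Generalizes to n events by alternately adding and subtracting the probabilities of all single events, pairwise intersections, triple intersections, and so on, which corrects for overlaps between events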

- Crucial for handling non-mutually exclusive events in probability calculations

- Applies to both discrete and continuous probability distributions
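
This sketch implements the general inclusion-exclusion principle for a finite, equally likely sample space and checks it against direct counting of the union. The events on a fair die are arbitrary examples, and `union_prob_inclusion_exclusion` is a hypothetical helper name:

```python
from fractions import Fraction
from functools import reduce
from itertools import combinations

omega = frozenset(range(1, 7))  # one roll of a fair die


def prob(event):
    return Fraction(len(event), len(omega))


def union_prob_inclusion_exclusion(events):
    """P(A1 ∪ ... ∪ An): add singles, subtract pairwise overlaps, add triples, and so on."""
    total = Fraction(0)
    for k in range(1, len(events) + 1):
        sign = 1 if k % 2 == 1 else -1
        for group in combinations(events, k):
            total += sign * prob(reduce(frozenset.intersection, group))
    return total


A = frozenset({2, 4, 6})  # even
B = frozenset({4, 5, 6})  # greater than 3
C = frozenset({3, 6})     # multiple of 3

print(union_prob_inclusion_exclusion([A, B]), prob(A | B))         # 2/3 2/3
print(union_prob_inclusion_exclusion([A, B, C]), prob(A | B | C))  # 5/6 5/6
```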

Monotonicity of probability

- States that if event A is a subset of event B, then P(A) ≤ P(B)

- Reflects the intuitive notion that a more inclusive event has a higher or equal probability

- Follows from additivity and non-negativity: B is the disjoint union of A and B \ A, so P(B) = P(A) + P(B \ A) ≥ P(A)

- Useful for bounding probabilities and making comparisons between events

- Helps in developing probabilistic inequalities and concentration bounds in Bayesian analysis

Conditional probability

- Conditional probability forms the foundation for updating beliefs in Bayesian inference

- Represents the probability of an event occurring given that another event is known to have occurred

- Crucial for understanding how new information affects probability estimates in Bayesian analysis

Definition and notation

- Conditional probability of A given B denoted as P(A|B)

- Mathematically defined as $P(A \mid B) = \frac{P(A \cap B)}{P(B)}$, where P(B) > 0

- Represents the updated probability of A after observing B

- Can be visualized using Venn diagrams or tree diagrams

- Forms the basis for the likelihood in Bayesian inference: P(data | θ) is the conditional probability (or density) of the observed data given parameter values θ
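
On a finite, equally likely space, the definition can be applied directly by counting. This is a minimal sketch for one fair die. The hypothetical `cond_prob` helper refuses to condition on an event of probability zero:

```python
from fractions import Fraction

omega = frozenset(range(1, 7))  # fair die, equally likely faces


def prob(event):
    return Fraction(len(event), len(omega))


def cond_prob(a, b):
    """P(A | B) = P(A ∩ B) / P(B), defined only when P(B) > 0."""
    if prob(b) == 0:
        raise ValueError("P(B) must be positive")
    return prob(a & b) / prob(b)


six = frozenset({6})
even = frozenset({2, 4, 6})

print(prob(six), cond_prob(six, even), cond_prob(even, six))  # 1/6 1/3 1
```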

Bayes' theorem connection

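- Bayes' theorem is derived from the definition of conditional probability: P(A ∩ B) can be written both as P(A|B) * P(B) and as P(B|A) * P(A), and equating the two gives the theorem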

- Expressed as $P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B)}$, where P(B) > 0

- Allows for reversing the direction of conditioning

- Central to Bayesian inference, updating prior probabilities with new evidence

- Provides a framework for combining prior knowledge with observed data in Bayesian analysis
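
Here is a worked example of Bayes' theorem for a diagnostic test. All numbers (1% prevalence, 95% sensitivity, 5% false-positive rate) are hypothetical. They were chosen only to show how a low prior keeps the posterior modest even after a positive result. The denominator P(+) comes from the law of total probability:

```python
from fractions import Fraction

# Hypothetical inputs for illustration only (not from any real test)
prior = Fraction(1, 100)           # P(D): prevalence of the condition
sensitivity = Fraction(95, 100)    # P(+ | D)
false_positive = Fraction(5, 100)  # P(+ | not D)

# Law of total probability: P(+) = P(+ | D) P(D) + P(+ | not D) P(not D)
evidence = sensitivity * prior + false_positive * (1 - prior)

# Bayes' theorem: P(D | +) = P(+ | D) P(D) / P(+)
posterior = sensitivity * prior / evidence

print(posterior, round(float(posterior), 3))  # 19/118 0.161
```

With these assumed inputs, a positive result raises the probability of the condition from 1/100 to 19/118, or about 16%.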

Independence of events

- Independence is a crucial concept in probability theory and Bayesian statistics

- Events are independent if the occurrence of one does not affect the probability of the other

- Understanding independence helps in simplifying complex probability calculations and modeling assumptions

Definition of independence

- Two events A and B are independent if P(A ∩ B) = P(A) * P(B)

- Equivalent characterization when the conditioning events have positive probability: P(A|B) = P(A) (if P(B) > 0) and P(B|A) = P(B) (if P(A) > 0)

- Independence implies that knowing one event provides no information about the other

- Crucial for simplifying probability calculations in many statistical models

- Often an assumption in Bayesian models, but should be carefully justified
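
The product-rule definition can be tested exactly with `Fraction` arithmetic, which avoids floating-point rounding in the equality check. This sketch uses one fair die and two arbitrary pairs of events:

```python
from fractions import Fraction

omega = frozenset(range(1, 7))  # fair die, equally likely faces


def prob(event):
    return Fraction(len(event), len(omega))


def independent(a, b):
    """Product rule: A and B are independent iff P(A ∩ B) = P(A) * P(B)."""
    return prob(a & b) == prob(a) * prob(b)


even = frozenset({2, 4, 6})  # P = 1/2
low = frozenset({1, 2})      # P = 1/3
high = frozenset({4, 5, 6})  # P = 1/2

print(independent(even, low))   # True:  P({2}) = 1/6 = 1/2 * 1/3
print(independent(even, high))  # False: P({4, 6}) = 1/3, but 1/2 * 1/2 = 1/4
```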

Mutual independence vs pairwise

- Pairwise independence occurs when each pair of events in a set is independent

- Mutual independence requires the product rule to hold for every subcollection of two or more events, not only for pairs

- Mutual independence is a stronger condition than pairwise independence

- Mathematically, for mutually independent events: P(A1 ∩ A2 ∩ ... ∩ An) = P(A1) * P(A2) * ... * P(An)

- Distinction important in complex probability problems and when modeling multiple variables in Bayesian networks
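
A classic illustration of the gap between the two notions uses two fair coin tosses (an example chosen here, not taken from the text above). Let A be "first toss is heads", B be "second toss is heads", and C be "both tosses match". Every pair satisfies the product rule, but the triple does not:

```python
from fractions import Fraction
from itertools import combinations, product

# Two fair coin tosses; all four outcomes equally likely
omega = frozenset(product("HT", repeat=2))


def prob(event):
    return Fraction(len(event), len(omega))


A = frozenset(w for w in omega if w[0] == "H")   # first toss is heads
B = frozenset(w for w in omega if w[1] == "H")   # second toss is heads
C = frozenset(w for w in omega if w[0] == w[1])  # both tosses match

events = [A, B, C]
pairwise = all(prob(x & y) == prob(x) * prob(y) for x, y in combinations(events, 2))
# For three events, mutual independence = all pairs plus the full triple
mutual = pairwise and prob(A & B & C) == prob(A) * prob(B) * prob(C)

print(pairwise, mutual)  # True False
```

The triple fails because knowing A and B together determines C: P(A ∩ B ∩ C) = 1/4, not 1/8.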

Probability distributions

- Probability distributions describe how probability is spread over the possible values (outcomes) of a random variable

- Central to modeling uncertainty in Bayesian statistics

- Understanding different types of distributions is crucial for selecting appropriate models in Bayesian analysis

Discrete vs continuous distributions

- Discrete distributions deal with countable outcomes (coin flips, dice rolls)

- Continuous distributions deal with uncountable outcomes (height, weight, time)

- Discrete distributions use probability mass functions (PMF)

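- Continuous distributions use probability density functions (PDF); probabilities are areas under the density, so a density value can exceed 1 and any single value has probability 0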

- Both types play important roles in Bayesian modeling, depending on the nature of the data
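
In practice, PMF values are probabilities, whereas a PDF has to be integrated to give a probability. This sketch uses a Binomial(10, 0.3) PMF and an Exponential PDF with rate 2 as arbitrary examples. It approximates integrals with a simple midpoint rule instead of a numerical library:

```python
import math

# Discrete: PMF of a Binomial(n=10, p=0.3); each value is itself a probability
n, p = 10, 0.3
pmf = [math.comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n + 1)]

# Continuous: PDF of an Exponential with rate 2; a density value is not a probability
rate = 2.0


def pdf(x):
    return rate * math.exp(-rate * x) if x >= 0 else 0.0


def integrate(f, a, b, steps=100_000):
    """Midpoint-rule approximation of the integral of f over [a, b]."""
    h = (b - a) / steps
    return h * sum(f(a + (i + 0.5) * h) for i in range(steps))


print(sum(pmf))               # approximately 1.0: PMF values sum to 1
print(pdf(0.0))               # 2.0: a density can exceed 1
print(integrate(pdf, 0, 20))  # approximately 1.0: the density integrates to 1
print(integrate(pdf, 0, 1))   # approximately P(0 <= X <= 1) = 1 - e^(-2)
```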

Cumulative distribution functions

- Cumulative distribution function (CDF) gives the probability of a random variable being less than or equal to a given value

- Defined for both discrete and continuous distributions

- For a random variable X, CDF is F(x) = P(X ≤ x)

- Properties: F is non-decreasing and right-continuous, with F(x) → 0 as x → -∞ and F(x) → 1 as x → ∞

- Useful for calculating probabilities of ranges, P(a < X ≤ b) = F(b) - F(a), and quantiles in Bayesian analysis
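
This sketch computes a CDF, a range probability, and a quantile for an Exponential distribution with rate 2. That distribution is an arbitrary example, chosen because its CDF and inverse CDF have closed forms. The step-function CDF of a fair die is shown alongside it:

```python
import math

rate = 2.0  # Exponential distribution with rate 2 (arbitrary example)


def cdf(x):
    """F(x) = P(X <= x) = 1 - exp(-rate * x) for x >= 0, and 0 otherwise."""
    return 1.0 - math.exp(-rate * x) if x >= 0 else 0.0


def quantile(q):
    """Inverse CDF: the x with F(x) = q, valid for 0 <= q < 1."""
    return -math.log(1.0 - q) / rate


def die_cdf(x):
    """CDF of a fair six-sided die: a right-continuous step function."""
    return sum(1 for face in range(1, 7) if face <= x) / 6


# Probability of a range: P(0.5 < X <= 1) = F(1) - F(0.5)
print(cdf(1.0) - cdf(0.5))
# Median: the 0.5 quantile, equal to ln(2) / rate for this distribution
print(quantile(0.5), math.log(2) / rate)
# The die CDF jumps by 1/6 at each face
print(die_cdf(0.5), die_cdf(3), die_cdf(3.5), die_cdf(6))  # 0.0 0.5 0.5 1.0
```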

Probability calculations

- Probability calculations form the core of statistical inference in Bayesian analysis

- Mastering these calculations is essential for applying Bayesian methods to real-world problems

- Understanding how to combine and manipulate probabilities is crucial for deriving posterior distributions

Simple probability problems

- Involve basic applications of probability axioms and properties

- Include calculating probabilities of single events or simple combinations of events

- Often use techniques like the complement rule or addition rule for mutually exclusive events

- Provide foundation for understanding more complex probabilistic reasoning

- Examples include calculating probabilities for coin flips, die rolls, or card draws
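
This sketch shows two simple card-draw calculations, assuming a standard 52-card deck with every card equally likely. The first applies the addition rule to mutually exclusive events (hearts and spades), and the second applies the complement rule:

```python
from fractions import Fraction
from itertools import product

ranks = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
suits = ["hearts", "diamonds", "clubs", "spades"]
deck = frozenset(product(ranks, suits))  # 52 cards, assumed equally likely


def prob(event):
    return Fraction(len(event), len(deck))


hearts = frozenset(card for card in deck if card[1] == "hearts")
spades = frozenset(card for card in deck if card[1] == "spades")

# Addition rule: hearts and spades are mutually exclusive, so probabilities add
heart_or_spade = prob(hearts) + prob(spades)
# Complement rule: P(not a heart) = 1 - P(heart)
not_heart = 1 - prob(hearts)

print(heart_or_spade, not_heart)                # 1/2 3/4
print(heart_or_spade == prob(hearts | spades))  # True: matches direct counting
```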

Compound probability problems

- Involve multiple events or conditions combined in various ways

- Require application of conditional probability, independence, and other advanced concepts

- Often use techniques like the multiplication rule for independent events or Bayes' theorem

- Critical for modeling complex scenarios in Bayesian analysis

- Examples include calculating probabilities in multi-stage experiments or updating probabilities based on new information
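
Here is a multi-stage example. When two cards are drawn without replacement, the second draw depends on the first, so the multiplication rule needs a conditional probability. The sketch assumes a standard 52-card deck, with rank 1 standing for the ace (a labeling chosen here). It confirms the result by enumerating ordered pairs. Drawing with replacement is included for contrast, because those draws are independent:

```python
from fractions import Fraction
from itertools import permutations

# 52-card deck: rank 1 stands for the ace, suits numbered 0-3
deck = [(rank, suit) for rank in range(1, 14) for suit in range(4)]


def is_ace(card):
    return card[0] == 1


# Multiplication rule for dependent stages:
# P(ace, then ace) = P(first ace) * P(second ace | first ace)
by_rule = Fraction(4, 52) * Fraction(3, 51)

# Check by enumerating every ordered pair of distinct cards
pairs = list(permutations(deck, 2))
both_aces = sum(1 for a, b in pairs if is_ace(a) and is_ace(b))
by_counting = Fraction(both_aces, len(pairs))

# With replacement the draws are independent, so the probabilities simply multiply
with_replacement = Fraction(4, 52) ** 2

print(by_rule, by_counting, with_replacement)  # 1/221 1/221 1/169
```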

Axioms in Bayesian context

- Probability axioms provide the foundation for Bayesian inference and decision-making

- Understanding how these axioms apply in a Bayesian context is crucial for proper interpretation of results

- Bayesian approach treats probability as a measure of belief, which can be updated with new evidence

Prior probability considerations

- Prior probabilities represent initial beliefs before observing data

- Must satisfy probability axioms (non-negativity, unity, additivity)

- Can be informed by previous studies, expert knowledge, or theoretical considerations

- Choice of prior can significantly impact posterior inference, especially with limited data

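- Improper priors (whose integral over the parameter space is infinite, so they cannot be normalized to 1) violate the unity axiom; they are sometimes used but require careful justification, in particular a check that the resulting posterior is proper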

Posterior probability implications

- Posterior probabilities result from updating prior beliefs with observed data

- Must also satisfy probability axioms, ensuring coherent inference

- Calculated using Bayes' theorem, combining prior probabilities with likelihood of data

- Represent updated beliefs after incorporating new evidence

- Form the basis for Bayesian decision-making and further inference
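
This is a minimal grid-approximation sketch of the prior-to-posterior update. The grid of 11 candidate values for a success probability theta, the uniform prior, and the data (7 successes in 10 trials) are all hypothetical. The point is that the normalized posterior again satisfies non-negativity and unity:

```python
from fractions import Fraction
from math import comb

# Hypothetical setup: the unknown success probability theta is restricted to a grid
thetas = [Fraction(i, 10) for i in range(11)]           # 0, 1/10, ..., 1
prior = {t: Fraction(1, len(thetas)) for t in thetas}   # uniform: non-negative, sums to 1

# Hypothetical data: 7 successes in 10 trials, with a binomial likelihood P(data | theta)
n, k = 10, 7
likelihood = {t: comb(n, k) * t**k * (1 - t) ** (n - k) for t in thetas}

# Bayes' theorem: posterior is proportional to likelihood * prior; dividing by the evidence normalizes it
unnormalized = {t: likelihood[t] * prior[t] for t in thetas}
evidence = sum(unnormalized.values())
posterior = {t: w / evidence for t, w in unnormalized.items()}

assert all(p >= 0 for p in posterior.values())  # non-negativity
assert sum(posterior.values()) == 1             # unity
print(max(posterior, key=posterior.get))        # 7/10, the posterior mode on this grid
```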

Limitations and extensions

- Understanding the limitations of basic probability theory is crucial for advanced Bayesian modeling

- Extensions to probability theory allow for handling more complex scenarios in Bayesian statistics

- Awareness of these concepts helps in choosing appropriate models and interpreting results correctly

Finite vs infinite sample spaces

- Finite sample spaces contain a finite number of outcomes

- Infinite sample spaces can be countably infinite or uncountably infinite

- Probability calculations for finite spaces are often simpler and more intuitive

- Countably infinite spaces can still be handled with infinite sums, but uncountably infinite spaces require more advanced mathematical techniques (measure theory)

- Many real-world Bayesian applications involve infinite sample spaces (continuous variables)
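
The coin-toss example from the sample space discussion is a countably infinite space. The number of tosses N until the first heads has P(N = k) = (1/2)^k for k = 1, 2, 3, and so on. Total probability then becomes an infinite series, which this sketch illustrates with exact partial sums (assuming a fair coin):

```python
from fractions import Fraction


def pmf(k):
    """P(N = k): k - 1 tails followed by a head, each toss fair and independent."""
    return Fraction(1, 2) ** k


# Partial sums of the series; each equals 1 - (1/2)**n
for n in (1, 5, 10, 20):
    partial = sum(pmf(k) for k in range(1, n + 1))
    print(n, partial, 1 - partial)
```

The gap to 1 shrinks geometrically. The full series sums to exactly 1, as the unity axiom requires, even though no finite partial sum reaches it.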

Measure theory introduction

- Measure theory provides a rigorous foundation for probability theory in infinite sample spaces

- Introduces concepts like σ-algebras and probability measures

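- Explains why probabilities are assigned only to measurable events (members of a σ-algebra) rather than to arbitrary sets: on [0, 1], for example, the uniform (length) measure cannot be extended consistently to every subset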

- Crucial for advanced topics in Bayesian statistics (stochastic processes, continuous-time models)

- Bridges the gap between elementary probability theory and more advanced statistical concepts
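
On a finite sample space, the σ-algebra conditions can be checked directly. This sketch uses a hypothetical helper `is_sigma_algebra` and arbitrary example families. It tests whether a family of subsets contains Ω and is closed under complements and unions. On uncountable spaces the σ-algebras of interest, such as the Borel sets, cannot be enumerated this way, so the code only illustrates the definition:

```python
from itertools import combinations


def is_sigma_algebra(omega, family):
    """Check whether `family` is a σ-algebra on the finite set `omega`.

    On a finite space, closure under countable unions reduces to closure under
    pairwise unions, because the family contains only finitely many sets.
    """
    omega = frozenset(omega)
    family = {frozenset(s) for s in family}
    all_subsets = all(s <= omega for s in family)
    contains_omega = omega in family
    closed_complement = all((omega - s) in family for s in family)
    closed_union = all((a | b) in family for a, b in combinations(family, 2))
    return all_subsets and contains_omega and closed_complement and closed_union


omega = {1, 2, 3, 4}
# Generated by the partition {1, 2} | {3, 4}: only these coarse events are measurable
coarse = [set(), {1, 2}, {3, 4}, {1, 2, 3, 4}]
# Missing {2, 3, 4}, the complement of {1}, so closure under complements fails
not_closed = [set(), {1}, {1, 2, 3, 4}]

print(is_sigma_algebra(omega, coarse), is_sigma_algebra(omega, not_closed))  # True False
```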