The Central Limit Theorem (CLT) says that when your sample size is large enough, the sampling distribution of the sample mean is approximately normal, no matter what shape the population has. A common rule of thumb is n greater than or equal to 30, and the sample values also need to be independent.

CLT AP Stats

In AP Stats, the Central Limit Theorem says the sampling distribution of the sample mean is approximately normal when the sample values are independent and the sample size is sufficiently large. The usual AP rule of thumb is n greater than or equal to 30; this large-sample condition is what you rely on when the population distribution is not known to be normal.

Use the CLT when a question asks about a sample mean or average and you need to justify a normal model. The CLT does not make the population normal and it does not apply to individual observations; it applies to the distribution of sample means.

Why This Matters for the AP Statistics Exam

The CLT is the bridge that makes inference for means possible. Once you know the sampling distribution of x̄ is approximately normal, you can find probabilities, standardize with z-scores, and later build confidence intervals and run significance tests for means in Unit 7. On free-response questions involving averages, you often have to justify why a normal model is reasonable, and the CLT is usually how you do that. Recognizing when a normal model applies, and saying why, is exactly the kind of reasoning the exam rewards.

Key Takeaways

- A sampling distribution of a statistic is the distribution of that statistic across all possible samples of a given size from a population.

- The CLT says that for a large enough sample size, the sampling distribution of the sample mean is approximately normal, even if the population is not.

- The two conditions to lean on are independence of the sample values and a sufficiently large n (often n greater than or equal to 30 as a rule of thumb).

- The bigger the sample size, the less spread in the sampling distribution, so sample means cluster closer to the true population mean.

- You can estimate a sampling distribution by simulation, taking many random samples and recording the statistic each time.

- When a question is about a mean or average, check whether the CLT lets you treat the sampling distribution as approximately normal before doing normal calculations.

What the Central Limit Theorem Actually Says

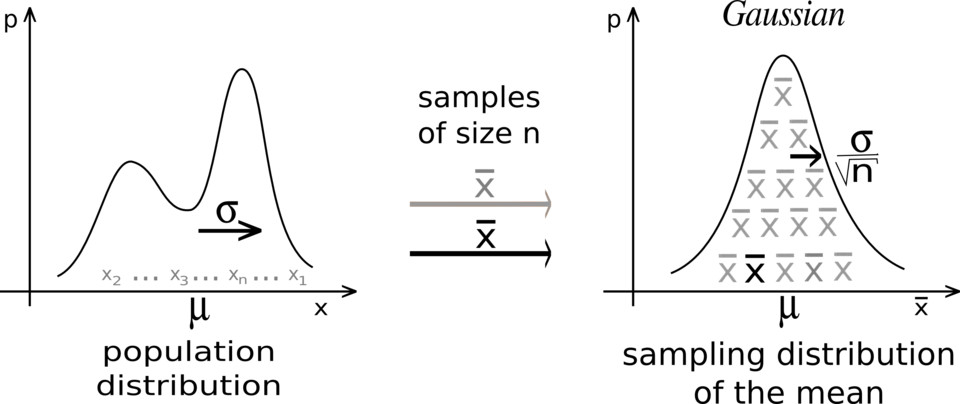

A sampling distribution of a statistic is the distribution of values for that statistic across all possible samples of a given size from a population. So the sampling distribution of the sample mean is what you would get if you took every possible sample of size n, calculated x̄ for each, and graphed those means.
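For a tiny population you can actually list every possible sample and build the sampling distribution exactly. The sketch below assumes a hypothetical population {1, 2, 6} and samples of size 2 drawn with replacement (so the two values are independent); the helper name `exact_sampling_distribution` is illustrative, not a standard function.

```python
from itertools import product
from statistics import mean, pvariance

def exact_sampling_distribution(population, n):
    """Return the sample mean of every ordered sample of size n drawn with replacement."""
    # Drawing with replacement keeps the n sample values independent of each other.
    return [mean(sample) for sample in product(population, repeat=n)]

# Hypothetical tiny population, chosen only for illustration.
population = [1, 2, 6]
means = exact_sampling_distribution(population, n=2)

print(len(means))  # 3**2 = 9 possible samples
print("center of x-bar:", mean(means), "population mean:", mean(population))
print("variance of x-bar:", pvariance(means), "sigma^2 / n:", pvariance(population) / 2)
```

The center of the nine sample means matches the population mean, and their variance matches σ²/n, which is where the σ/√n spread used later in this guide comes from.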

The Central Limit Theorem states that when the sample size is sufficiently large, the sampling distribution of the sample mean of a random variable is approximately normal, regardless of the shape of the population distribution. In general, n greater than or equal to 30 is treated as large enough for the CLT to apply.

For the CLT to hold, two things matter:

- The sample values are independent of each other.

- The sample size n is sufficiently large.
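Why independence matters can be seen by simulation. The sketch below assumes a normal population with mean 50 and SD 10 and compares 40 independent draws with a hypothetical dependent sample of 40 values built from only 4 independent draws, each copied 10 times. The population, the copying scheme, and the helper names are illustrative assumptions.

```python
import random
from math import sqrt
from statistics import fmean, pstdev

SIGMA, N = 10.0, 40  # assumed population: normal with mean 50 and SD 10; sample size 40

def sd_of_means(make_sample, reps, rng):
    """Simulated standard deviation of the sample mean across `reps` samples."""
    return pstdev([fmean(make_sample(rng)) for _ in range(reps)])

def independent_sample(rng):
    # 40 separate, independent draws from the population
    return [rng.gauss(50, SIGMA) for _ in range(N)]

def clustered_sample(rng):
    # Hypothetical dependence: only 4 independent draws, each copied 10 times (still 40 values)
    return [x for x in (rng.gauss(50, SIGMA) for _ in range(4)) for _ in range(10)]

rng = random.Random(3)  # fixed seed so the run is reproducible
print(f"sigma / sqrt(n)               = {SIGMA / sqrt(N):.2f}")
print(f"SD of x-bar, independent      = {sd_of_means(independent_sample, 5_000, rng):.2f}")
print(f"SD of x-bar, clustered (n=40) = {sd_of_means(clustered_sample, 5_000, rng):.2f}")
```

The independent samples should land close to σ/√40 ≈ 1.58, while the clustered samples behave like samples of size 4 (σ/√4 = 5), so the σ/√n formula and the CLT's large-n promise no longer apply.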

If the population itself is already normal, then the sampling distribution of x̄ is normal for any sample size. The CLT is what saves you when the population is skewed or not normal, because a large enough n still gives you an approximately normal sampling distribution.
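A quick simulation shows the CLT at work on a skewed population. This sketch assumes an exponential population with mean 1 as a stand-in for a strongly right-skewed population, and it uses moment skewness (near 0 for a symmetric, normal-looking shape) as a rough shape summary; the seed and helper names are illustrative.

```python
import random
from statistics import fmean, pstdev

def simulate_sample_means(draw, n, reps, rng):
    """Draw `reps` independent samples of size n and return each sample's mean."""
    return [fmean(draw(rng) for _ in range(n)) for _ in range(reps)]

def skewness(values):
    """Moment skewness: near 0 for a symmetric, normal-looking shape."""
    m, s = fmean(values), pstdev(values)
    return fmean(((v - m) / s) ** 3 for v in values)

rng = random.Random(2024)  # fixed seed so the run is reproducible
# Assumed population: exponential with mean 1, which is strongly right-skewed.
draw_exponential = lambda r: r.expovariate(1.0)

for n in (1, 5, 30):
    means = simulate_sample_means(draw_exponential, n, reps=20_000, rng=rng)
    print(f"n={n:>2}  mean of x-bar={fmean(means):.3f}  skewness={skewness(means):.2f}")
```

For this particular population, theory puts the skewness of x̄ at 2/√n (about 2, 0.89, and 0.37 for n = 1, 5, and 30), so the simulated values should shrink toward 0 while the mean of x̄ stays near 1.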

Bigger Samples, Tighter Distributions

With sampling distributions, a larger sample size produces less spread: the larger the sample, the more tightly the sampling distribution clusters around the true population parameter.

Think about flipping a coin. If you flip it 6 times, the proportion of heads can easily be far from 0.5 because the result swings around a lot. But once you flip 50, 100, or 1000 times, the proportion of heads is very unlikely to be far from 0.5, the true value. Larger samples home in on the population parameter, which is exactly what you want when working with a sampling distribution.
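The coin-flip example can be checked with a short simulation. This sketch assumes a fair coin and repeats each experiment 2,000 times; "at least 0.1 from 0.5" is an arbitrary illustrative threshold, and the helper name is hypothetical.

```python
import random
from statistics import pstdev

def proportions_of_heads(flips, reps, rng):
    """Simulate `reps` runs of `flips` fair-coin tosses; return the proportion of heads in each run."""
    return [sum(rng.random() < 0.5 for _ in range(flips)) / flips for _ in range(reps)]

rng = random.Random(7)  # fixed seed for reproducibility
for flips in (6, 50, 100, 1000):
    props = proportions_of_heads(flips, reps=2_000, rng=rng)
    far = sum(abs(p - 0.5) >= 0.1 for p in props) / len(props)
    print(f"{flips:>4} flips: SD of p-hat = {pstdev(props):.3f}, "
          f"share at least 0.1 from 0.5 = {far:.3f}")
```

With 6 flips, every outcome except exactly 3 heads is at least 0.1 away from 0.5 (theoretically 44 of 64 equally likely sequences, about 0.69), while with 1000 flips such a miss is essentially never seen.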

The image below shows how the sampling distribution becomes more normal and less spread as the sample size grows.

Estimating Sampling Distributions with Simulation

You do not always need a formula to see a sampling distribution. You can simulate one by generating many random samples from a population and recording the statistic for each sample. Graphing all those statistics gives you an estimate of the sampling distribution.
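Here is one way to sketch that simulation for any statistic. It assumes a hypothetical right-skewed population of 5,000 values (think wait times in minutes) generated from an exponential model, draws simple random samples of size 40 without replacement using `random.Random.sample`, and prints a crude text histogram; the population, seed, and helper names are illustrative.

```python
import random
from collections import Counter
from statistics import fmean, median

def simulate_statistic(population, n, statistic, reps, rng):
    """Estimate a sampling distribution: take `reps` random samples of size n
    (without replacement) and record `statistic` for each one."""
    return [statistic(rng.sample(population, n)) for _ in range(reps)]

def text_histogram(values, width):
    """Crude text histogram: bin values into intervals of `width`; one '#' per 25 values."""
    bins = Counter(int(v // width) for v in values)
    for b in sorted(bins):
        print(f"{b * width:6.1f} | {'#' * (bins[b] // 25)}")

rng = random.Random(11)
# Hypothetical population of 5,000 right-skewed values (e.g., wait times in minutes).
population = [round(rng.expovariate(1 / 8), 1) for _ in range(5_000)]

sample_means = simulate_statistic(population, n=40, statistic=fmean, reps=2_000, rng=rng)
print(f"population mean = {fmean(population):.2f}, mean of x-bars = {fmean(sample_means):.2f}")
text_histogram(sample_means, width=0.5)

# The same helper works for other statistics, such as the sample median.
sample_medians = simulate_statistic(population, n=40, statistic=median, reps=2_000, rng=rng)
print(f"population median = {median(population):.2f}, mean of medians = {fmean(sample_medians):.2f}")
```

Even though the population is skewed, the histogram of the 2,000 sample means should look roughly mound-shaped and centered near the population mean.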

A related idea is a randomization distribution, which is a collection of statistics generated by simulation while assuming known values for the parameters. For a randomized experiment, this means repeatedly and randomly reassigning the response values to treatment groups and recording the statistic each time. Simulations like these let you see the patterns the CLT predicts without heavy math.
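A minimal sketch of a randomization distribution for a two-group experiment follows. It assumes the treatment has no effect, so any response could have appeared in either group, and records the difference in group means after each random reassignment. The response values are made-up illustrative numbers, not real data, and the helper name is hypothetical.

```python
import random
from statistics import fmean

def randomization_distribution(group_a, group_b, reps, rng):
    """Repeatedly reassign the pooled responses to two groups of the original sizes
    (as if the treatment had no effect) and record the difference in group means."""
    pooled = list(group_a) + list(group_b)
    size_a = len(group_a)
    diffs = []
    for _ in range(reps):
        rng.shuffle(pooled)  # random reassignment of responses to treatment groups
        diffs.append(fmean(pooled[:size_a]) - fmean(pooled[size_a:]))
    return diffs

# Hypothetical responses from a small randomized experiment (illustrative numbers only).
treatment = [12.1, 14.3, 13.8, 15.0, 12.9, 14.7]
control = [11.2, 12.4, 11.9, 13.1, 12.0, 11.5]

rng = random.Random(42)
observed = fmean(treatment) - fmean(control)
diffs = randomization_distribution(treatment, control, reps=10_000, rng=rng)
as_extreme = sum(d >= observed for d in diffs) / len(diffs)
print(f"observed difference = {observed:.2f}")
print(f"share of simulated differences at least that large = {as_extreme:.4f}")
```

The simulated differences pile up around 0, which is what "no treatment effect" predicts; the observed difference can then be judged against that pile.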

How to Use This on the AP Statistics Exam

Free Response

When a question asks about the probability of a mean or average, do not assume normality automatically. Use the CLT to justify it.

For example, suppose a question asks for a probability involving the mean size of fish in a pond and tells you the sample size is 40. Your reasoning would be: since n = 40, which is greater than 30, the sampling distribution of the sample mean is approximately normal by the Central Limit Theorem. You should also justify independence, for example by noting that the fish were randomly sampled and that 40 is less than 10% of all the fish in the pond.

State your normal-model justification clearly. If you are using the CLT, say so and point to the large sample size. If the problem tells you the population is normal, you can cite that instead and skip the n greater than or equal to 30 reasoning.

Problem Solving

Once you have justified a normal model, you can standardize a sample mean and find probabilities using a z-score of the form

z = (x̄ − μ)/(σ/√n)

The standard deviation of the sampling distribution, σ/√n, shrinks as n grows, which is the math behind tighter distributions for larger samples. Keep your units and context attached to any probability you report.
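The z-score calculation can be sketched with Python's standard-library `statistics.NormalDist`. The fish numbers here (μ = 30 cm, σ = 6 cm, n = 40, and a cutoff of 31.5 cm) are hypothetical values chosen for illustration, and the helper name is not from any AP source.

```python
from math import sqrt
from statistics import NormalDist

def prob_sample_mean_above(x_bar, mu, sigma, n):
    """P(X-bar > x_bar) under a normal model for the sampling distribution,
    justified by the CLT (independent values, large n) or a normal population."""
    se = sigma / sqrt(n)         # standard deviation of the sampling distribution
    z = (x_bar - mu) / se        # standardize the sample mean
    return z, 1 - NormalDist().cdf(z)

# Hypothetical fish-size numbers: mu = 30 cm, sigma = 6 cm, n = 40 fish.
z, p = prob_sample_mean_above(x_bar=31.5, mu=30, sigma=6, n=40)
print(f"z = {z:.2f}, P(x-bar > 31.5 cm) = {p:.4f}")

# sigma / sqrt(n) shrinks as n grows: quadrupling n halves the spread.
for n in (10, 40, 160):
    print(f"n = {n:>3}: sigma / sqrt(n) = {6 / sqrt(n):.3f}")
```

In a written answer you would still report the probability in context, for example as the chance that the mean size of 40 randomly selected fish exceeds 31.5 cm.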

Common Trap

The CLT is about the sampling distribution of the mean, not about a single observation and not about the population shape changing. A large sample does not make the original data normal. It makes the distribution of sample means approximately normal.

Common Misconceptions

- The CLT does not say the population becomes normal. It says the sampling distribution of the sample mean becomes approximately normal as n grows.

- A large sample does not make individual data values normal. Only the distribution of the sample mean is affected.

- n greater than or equal to 30 is a common rule of thumb, not a magic cutoff. Strongly skewed populations may need larger samples, and a population that is already normal needs no minimum.

- You still need independence. A large n alone is not enough if the sample values are not independent.

- The CLT does not eliminate variability. It describes the predictable shape and spread of sample means, but sample results still vary from sample to sample.
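To see why n greater than or equal to 30 is not a magic cutoff, the sketch below compares two assumed populations: a normal one, and a strongly skewed one in which 2% of values are 100 and the rest are 0. These populations, the seed, and the helper names are illustrative assumptions; moment skewness is used as a rough measure of how far x̄ is from a normal shape.

```python
import random
from statistics import fmean, pstdev

def skewness(values):
    """Moment skewness; values near 0 suggest a roughly symmetric shape."""
    m, s = fmean(values), pstdev(values)
    return fmean(((v - m) / s) ** 3 for v in values)

def means_of_samples(draw, n, reps, rng):
    """Sample means from `reps` independent samples of size n."""
    return [fmean(draw(rng) for _ in range(n)) for _ in range(reps)]

rng = random.Random(99)  # fixed seed for reproducibility
# Assumed populations, chosen only to contrast shapes:
# a normal population, and a strongly skewed one where 2% of values are 100 and the rest are 0.
populations = {
    "normal(50, 10)": lambda r: r.gauss(50, 10),
    "rare large values": lambda r: 100.0 if r.random() < 0.02 else 0.0,
}
for name, draw in populations.items():
    for n in (5, 30, 300):
        means = means_of_samples(draw, n, reps=4_000, rng=rng)
        print(f"{name:>17}, n={n:>3}: skewness of x-bar = {skewness(means):+.2f}")
```

For the skewed population, theory puts the skewness of x̄ at about 1.25 when n = 30 and about 0.40 when n = 300, so n = 30 still leaves a clearly skewed sampling distribution. The normal population gives skewness near 0 even at n = 5.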


Vocabulary

The following words are mentioned explicitly in the College Board Course and Exam Description for this topic.

| Term | Definition |
|---|---|
| approximately normal | A distribution that closely follows the shape of a normal distribution, allowing for the use of normal probability methods. |
| central limit theorem | A theorem stating that when the sample size is sufficiently large, the sampling distribution of the mean of a random variable will be approximately normally distributed. |
| independence | The condition that observations in a sample are not influenced by each other, typically ensured through random sampling or randomized experiments. |
| null distribution | The probability distribution of the test statistic under the assumption that the null hypothesis is true. |
| random sample | A sample selected from a population in such a way that every member has an equal chance of being chosen, reducing bias and allowing for valid statistical inference. |
| sample size | The number of observations or data points collected in a sample, denoted as n. |
| sampling distribution | The probability distribution of a sample statistic (such as a sample proportion) obtained from repeated sampling of a population. |
| simulation | A method of modeling random events so that simulated outcomes closely match real-world outcomes, used to estimate probabilities. |
| statistic | A numerical summary calculated from sample data, such as a mean, median, or standard deviation. |

Frequently Asked Questions

What is the CLT in AP Stats?

The Central Limit Theorem says that when sample values are independent and the sample size is sufficiently large, the sampling distribution of the sample mean is approximately normal.

When can I use the Central Limit Theorem in AP Statistics?

Use the CLT for sample means when the observations are independent and n is large enough. On AP Stats, n greater than or equal to 30 is a common rule of thumb for non-normal populations.

Does the CLT make the population normal?

No. The CLT does not change the population distribution or individual data values. It describes the shape of the sampling distribution of sample means.

What conditions do I need for the CLT?

You need independent sample values and a sufficiently large sample size. If the population is already normal, the sampling distribution of the mean is normal for any sample size, so the large-sample condition is not needed.

What formula goes with the Central Limit Theorem?

After justifying a normal model, sample means are often standardized with z = (x̄ − μ)/(σ/√n). The standard deviation of the sampling distribution is σ/√n.

How should I write a CLT justification on an FRQ?

State that the sampling distribution of x̄ is approximately normal because the sample values are independent and the sample size is large enough, then connect that to the context of the problem.