Factor analysis is a powerful statistical tool used in communication research to uncover hidden patterns in complex datasets. It reduces numerous variables into a smaller set of factors, revealing underlying constructs that shape communication processes and outcomes.

This method aids researchers in developing and validating theories, creating measurement scales, and exploring media effects. By simplifying data while retaining essential information, factor analysis enhances our understanding of communication phenomena and supports evidence-based research in the field.

Overview of factor analysis

- Factor analysis is a core statistical method in advanced communication research for uncovering underlying patterns in large datasets

- Reduces complex data into a smaller set of factors, enabling researchers to identify latent constructs in communication studies

- Facilitates theory development and validation in communication research by revealing relationships between observed variables

Types of factor analysis

Exploratory factor analysis

- Uncovers the underlying factor structure without specifying in advance which variables should relate to which factors

- Identifies patterns in data to generate hypotheses about latent constructs

- Commonly used in initial stages of scale development for communication measures

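- Extracts factors with methods such as principal axis factoring or maximum likelihood estimation; principal component analysis is also common, although strictly speaking it is a distinct data-reduction technique rather than a common factor model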

Confirmatory factor analysis

- Tests specific hypotheses about factor structure based on existing theory or prior research

- Evaluates how well observed data fits a predetermined factor model

- Assesses construct validity of communication measures and theories

- Uses structural equation modeling techniques to test whether hypothesized factor structures are supported by the data
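This is a minimal Python sketch of the core CFA idea, assuming standardized variables. A hypothesized model implies a correlation matrix: the off-diagonal entries come from the loadings Λ and the factor correlations Φ as ΛΦΛᵀ. Fit is then judged by how far the observed correlations depart from that matrix. The loadings, the factor correlation, the "observed" matrix, and the `implied_correlations` helper are all hypothetical. Real CFA software estimates the parameters (for example by maximum likelihood) and condenses the residuals into fit indices.

```python
def implied_correlations(loadings, phi):
    """Model-implied correlation matrix Lambda * Phi * Lambda^T with a unit diagonal.

    Assumes standardized variables, so each unique variance is 1 minus the
    common variance and every implied diagonal entry is exactly 1.
    """
    p, m = len(loadings), len(phi)
    implied = [[1.0] * p for _ in range(p)]
    for i in range(p):
        for j in range(p):
            if i != j:
                implied[i][j] = sum(
                    loadings[i][a] * phi[a][b] * loadings[j][b]
                    for a in range(m) for b in range(m)
                )
    return implied


# Hypothetical two-factor model: items 0-1 measure factor A, items 2-3 measure factor B.
loadings = [[0.8, 0.0], [0.7, 0.0], [0.0, 0.6], [0.0, 0.9]]
phi = [[1.0, 0.5], [0.5, 1.0]]  # the two factors correlate at 0.5

# Invented "observed" correlations among the four items.
observed = [
    [1.00, 0.58, 0.22, 0.38],
    [0.58, 1.00, 0.20, 0.30],
    [0.22, 0.20, 1.00, 0.55],
    [0.38, 0.30, 0.55, 1.00],
]

implied = implied_correlations(loadings, phi)
residuals = [[observed[i][j] - implied[i][j] for j in range(4)] for i in range(4)]
largest = max(abs(residuals[i][j]) for i in range(4) for j in range(i + 1, 4))
print(f"largest absolute residual: {largest:.3f}")
```

Small residuals everywhere suggest the hypothesized structure reproduces the data well. A large residual points to a pair of items the model does not explain.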

Purpose and applications

Data reduction techniques

- Condenses large sets of variables into a smaller number of meaningful factors

- Identifies redundant or highly correlated variables in communication datasets

- Simplifies complex datasets while retaining essential information

- Enhances interpretability of results in communication research studies

Construct validation

- Assesses whether measurement items accurately represent theoretical constructs

- Evaluates convergent and discriminant validity of communication scales

- Refines existing measures by identifying items that do not fit the intended construct

- Supports development of new theories in communication research

Key concepts in factor analysis

Factors vs variables

- Factors represent underlying constructs that explain patterns in observed variables

- Variables are directly measured items or indicators in a dataset

- Factors are latent, unobserved constructs inferred from correlations among variables

- Number of factors typically smaller than number of original variables
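In standard notation, the common factor model writes the p observed variables x as a combination of m latent factors f plus unique parts ε. This implies a structure for their covariance matrix Σ. The symbols are conventional and are not defined elsewhere in this section.

```latex
\mathbf{x} = \Lambda\,\mathbf{f} + \boldsymbol{\varepsilon}, \qquad
\Sigma = \Lambda\,\Phi\,\Lambda^{\top} + \Psi, \qquad m < p
```

Here Λ is the p × m matrix of factor loadings and Φ holds the correlations among factors (the identity matrix for orthogonal factors). Ψ is the diagonal matrix of unique variances. The model assumes that the factors and the unique parts are uncorrelated.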

Factor loadings

- Indicate strength of relationship between each variable and the underlying factor

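- Standardized loadings in orthogonal solutions range from -1 to +1, with higher absolute values indicating stronger associations; pattern coefficients from oblique rotations can occasionally exceed 1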

- Loadings of about 0.4 or 0.5 and above (in absolute value) are conventionally treated as salient in communication research; these cutoffs are rules of thumb, not significance tests

- Used to interpret meaning of factors and assign variables to factors
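The sketch below assigns each variable to the factor with its largest absolute loading and flags items that cross-load. The 0.4 cutoff follows the rule of thumb above. The item names, the loadings, and the `assign_items` helper are hypothetical.

```python
def assign_items(loadings, items, cutoff=0.4):
    """Assign each item to the factor with its largest absolute loading.

    Items whose largest absolute loading is below `cutoff` stay unassigned (None);
    items with two or more loadings at or above the cutoff are flagged as cross-loading.
    """
    assignments, cross_loading = {}, []
    for item, row in zip(items, loadings):
        magnitudes = [abs(x) for x in row]
        best = max(range(len(row)), key=lambda k: magnitudes[k])
        assignments[item] = best if magnitudes[best] >= cutoff else None
        if sum(m >= cutoff for m in magnitudes) > 1:
            cross_loading.append(item)
    return assignments, cross_loading


# Hypothetical rotated loadings for six survey items on two factors.
items = ["trust_1", "trust_2", "trust_3", "use_1", "use_2", "use_3"]
loadings = [
    [0.78, 0.12],
    [0.71, 0.05],
    [0.45, 0.48],
    [0.10, 0.82],
    [-0.03, 0.69],
    [0.21, 0.33],
]
assignments, cross = assign_items(loadings, items)
print(assignments)
print("cross-loading:", cross)
```

In this example, trust_3 is assigned by a margin of only 0.03 and is flagged as cross-loading. That is exactly the kind of item that needs theoretical judgment rather than a mechanical rule.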

Communalities

- Represent proportion of variance in a variable explained by all extracted factors

- Range from 0 to 1, with higher values indicating better explanation by factors

- Low communalities suggest a variable may not fit well with other variables in the factor structure

- Guide decisions about variable retention or removal in scale development
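For orthogonal factors, a variable's communality is simply the sum of its squared loadings across the extracted factors. The sketch assumes orthogonal factors; with oblique factors, the factor correlations also enter the calculation. The loadings and the 0.3 review threshold are illustrative assumptions, not fixed standards.

```python
def communalities(loadings):
    """Communality of each variable as the sum of its squared loadings (orthogonal factors)."""
    return [sum(x * x for x in row) for row in loadings]


# Hypothetical loadings for four items on two orthogonal factors.
loadings = [
    [0.80, 0.10],
    [0.75, 0.20],
    [0.15, 0.70],
    [0.20, 0.25],
]
for i, h2 in enumerate(communalities(loadings)):
    flag = "  <- low; review this item" if h2 < 0.3 else ""
    print(f"item {i}: h2 = {h2:.3f}{flag}")
```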

Steps in factor analysis

Data preparation

- Assess sample size adequacy (typically 5-10 participants per variable)

- Screen for missing data and outliers in communication datasets

- Check for multivariate normality and linearity assumptions

- Standardize variables if measured on different scales
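This is a minimal plain-Python sketch of two preparation steps. It standardizes variables measured on different scales to z-scores, then checks the cases-per-variable ratio against the 5:1 and 10:1 rules of thumb. The response values and the sample sizes (250 cases, 30 items) are hypothetical.

```python
import statistics


def zscores(values):
    """Standardize a variable to mean 0 and sample standard deviation 1."""
    mean = statistics.mean(values)
    sd = statistics.stdev(values)
    return [(v - mean) / sd for v in values]


# Hypothetical responses from eight participants on two differently scaled items.
likert = [4, 5, 3, 4, 2, 5, 4, 3]                # 1-5 agreement scale
minutes = [120, 240, 60, 180, 30, 300, 150, 90]  # daily media use in minutes

z_likert = zscores(likert)
z_minutes = zscores(minutes)
print("z (minutes):", [round(z, 2) for z in z_minutes])

# Cases-per-variable check for a hypothetical 30-item survey with 250 respondents.
n_cases, n_variables = 250, 30
ratio = n_cases / n_variables
for rule in (5, 10):
    status = "meets" if ratio >= rule else "falls short of"
    print(f"{ratio:.1f} cases per variable {status} the {rule}:1 rule of thumb")
```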

Factor extraction methods

- Principal Component Analysis (PCA) forms linear combinations of variables that capture as much of the total variance (shared, unique, and error) as possible

- Maximum Likelihood Estimation (MLE) assumes multivariate normal distribution

- Principal Axis Factoring (PAF) focuses on shared variance among variables

- Determine number of factors to extract using scree plots or parallel analysis
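The number of factors can be estimated from the eigenvalues of the correlation matrix. The dependency-free sketch below computes eigenvalues with the Jacobi method and compares two retention rules:

- The Kaiser criterion keeps factors with eigenvalues above 1. It is widely used but often criticized for over-extracting.
- Horn's parallel analysis keeps factors whose eigenvalues exceed those from random normal data of the same size.

This version compares against the mean random eigenvalue; many implementations use the 95th percentile instead. The correlation matrix, sample size, and number of replications are illustrative assumptions.

```python
import math
import random


def jacobi_eigenvalues(matrix, tol=1e-12, max_sweeps=100):
    """Eigenvalues of a symmetric matrix via cyclic Jacobi rotations, largest first."""
    a = [row[:] for row in matrix]
    n = len(a)
    for _ in range(max_sweeps):
        off = sum(a[i][j] ** 2 for i in range(n) for j in range(n) if i != j)
        if off < tol:
            break
        for p in range(n - 1):
            for q in range(p + 1, n):
                if a[p][q] == 0.0:
                    continue
                # Choose the rotation that zeroes the (p, q) entry.
                theta = (a[q][q] - a[p][p]) / (2.0 * a[p][q])
                t = math.copysign(1.0, theta) / (abs(theta) + math.sqrt(theta * theta + 1.0))
                c = 1.0 / math.sqrt(t * t + 1.0)
                s = t * c
                for k in range(n):  # A <- A J (mix columns p and q)
                    akp, akq = a[k][p], a[k][q]
                    a[k][p], a[k][q] = c * akp - s * akq, s * akp + c * akq
                for k in range(n):  # A <- J^T A (mix rows p and q)
                    apk, aqk = a[p][k], a[q][k]
                    a[p][k], a[q][k] = c * apk - s * aqk, s * apk + c * aqk
    return sorted((a[i][i] for i in range(n)), reverse=True)


def correlation_matrix(data):
    """Pearson correlations between the columns of `data` (a list of rows)."""
    n, p = len(data), len(data[0])
    means = [sum(row[j] for row in data) / n for j in range(p)]
    centered = [[row[j] - means[j] for j in range(p)] for row in data]
    ss = [math.sqrt(sum(r[j] ** 2 for r in centered)) for j in range(p)]
    return [[sum(r[i] * r[j] for r in centered) / (ss[i] * ss[j]) for j in range(p)]
            for i in range(p)]


def parallel_analysis(observed_eigs, n_cases, n_reps=100, seed=1):
    """Count leading factors whose eigenvalue beats the mean eigenvalue of random data."""
    rng = random.Random(seed)
    p = len(observed_eigs)
    totals = [0.0] * p
    for _ in range(n_reps):
        data = [[rng.gauss(0.0, 1.0) for _ in range(p)] for _ in range(n_cases)]
        for k, ev in enumerate(jacobi_eigenvalues(correlation_matrix(data))):
            totals[k] += ev
    random_means = [t / n_reps for t in totals]
    retained = 0
    for obs, rand in zip(observed_eigs, random_means):
        if obs <= rand:
            break
        retained += 1
    return retained, random_means


# Hypothetical correlation matrix: items 0-1 and items 2-3 form two clusters.
R = [
    [1.0, 0.6, 0.0, 0.0],
    [0.6, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.6],
    [0.0, 0.0, 0.6, 1.0],
]
eigs = jacobi_eigenvalues(R)
kaiser = sum(ev > 1.0 for ev in eigs)
pa_count, _ = parallel_analysis(eigs, n_cases=200)
print("eigenvalues:", [round(ev, 3) for ev in eigs])
print("Kaiser criterion keeps", kaiser, "factors; parallel analysis keeps", pa_count)
```

Plotting `eigs` against their rank gives the scree plot mentioned above. The "elbow" where the curve flattens is the visual counterpart of these numeric rules.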

Factor rotation techniques

- Orthogonal rotation (varimax) produces uncorrelated factors

- Oblique rotation (promax, direct oblimin) allows factors to correlate

- Improves interpretability by pushing loadings toward either high or near-zero values, so that each variable loads clearly on as few factors as possible

- Choose rotation method based on theoretical expectations about factor relationships
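Varimax chooses the orthogonal rotation that maximizes the variance of the squared loadings within each factor. For exactly two factors the optimal angle has a closed form (Kaiser's), so the sketch below needs no iteration. General implementations instead rotate pairs of factors repeatedly until convergence.

The sketch applies Kaiser normalization (rows scaled to unit length while the angle is computed) and assumes no variable has all-zero loadings. The example loadings are hypothetical. They were built by rotating a clean two-factor structure 30 degrees off the axes, so varimax should recover an angle of about 30 degrees.

```python
import math


def varimax_two_factors(loadings):
    """Varimax rotation of a two-factor loading matrix, with Kaiser normalization.

    For two factors the optimal varimax angle has a closed form, so no iteration
    is needed. Assumes every variable has at least one nonzero loading.
    """
    p = len(loadings)
    h = [math.hypot(x, y) for x, y in loadings]
    # Kaiser normalization: compute the angle from rows scaled to unit length.
    norm = [(x / hi, y / hi) for (x, y), hi in zip(loadings, h)]
    u = [x * x - y * y for x, y in norm]
    v = [2 * x * y for x, y in norm]
    A, B = sum(u), sum(v)
    C = sum(ui * ui - vi * vi for ui, vi in zip(u, v))
    D = 2 * sum(ui * vi for ui, vi in zip(u, v))
    phi = 0.25 * math.atan2(D - 2 * A * B / p, C - (A * A - B * B) / p)
    c, s = math.cos(phi), math.sin(phi)
    # Rotating the axes by phi leaves each row's communality unchanged.
    rotated = [(x * c + y * s, -x * s + y * c) for x, y in loadings]
    return rotated, phi


# Hypothetical unrotated loadings: items 0-2 belong to one factor and items 3-5
# to the other, but the whole structure sits 30 degrees away from the axes.
alpha = math.radians(30)
unrotated = (
    [(0.8 * math.cos(alpha), 0.8 * math.sin(alpha))] * 3
    + [(-0.7 * math.sin(alpha), 0.7 * math.cos(alpha))] * 3
)
rotated, phi = varimax_two_factors(unrotated)
print(f"rotation angle: {math.degrees(phi):.1f} degrees")
for row in rotated:
    print(f"{row[0]: .3f} {row[1]: .3f}")
```

After rotation each item loads about 0.8 or 0.7 on one factor and near zero on the other, which is the simple structure described above.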

Interpreting factor analysis results

Factor structure

- Examine the pattern matrix (oblique rotation) or the rotated factor matrix (orthogonal rotation) to identify variables with high loadings on each factor

- Look for simple structure where variables load strongly on one factor

- Interpret meaning of factors based on common themes among high-loading variables

- Consider cross-loadings and their implications for factor interpretation

Variance explained

- Total variance explained indicates overall effectiveness of factor solution

- Cumulative percentage of variance explained by extracted factors

- Eigenvalues represent amount of variance explained by each factor

- Higher variance explained suggests better representation of original data
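With a correlation matrix, total variance equals the number of variables. Dividing each eigenvalue by that number therefore gives the factor's share of the variance. The sketch assumes component (PCA) eigenvalues; for common factor extraction such as principal axis factoring, the sums of squared loadings play the same role. The eigenvalues are hypothetical.

```python
def variance_explained(eigenvalues, n_variables):
    """Percentage and cumulative percentage of total variance for each factor.

    With a correlation matrix the total variance equals n_variables, so each
    eigenvalue divided by n_variables is that factor's share.
    """
    rows, cumulative = [], 0.0
    for k, ev in enumerate(eigenvalues, start=1):
        pct = 100.0 * ev / n_variables
        cumulative += pct
        rows.append((k, ev, pct, cumulative))
    return rows


# Hypothetical eigenvalues from a 10-item correlation matrix (they sum to 10).
eigenvalues = [4.2, 2.1, 1.1, 0.7, 0.5, 0.4, 0.3, 0.3, 0.2, 0.2]
for k, ev, pct, cum in variance_explained(eigenvalues[:3], n_variables=10):
    print(f"Factor {k}: eigenvalue {ev:.2f}, {pct:.1f}% of variance, {cum:.1f}% cumulative")
```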

Factor scores

- Estimated values of latent factors for each observation in the dataset

- Used in subsequent analyses as composite variables representing constructs

- Calculated using regression, Bartlett, or Anderson-Rubin methods

- Enable examination of relationships between factors and other variables
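The regression (Thurstone) method estimates scores as F = Z R⁻¹ Λ. Here Z holds the standardized data, R is the correlation matrix, and Λ holds the loadings. This dependency-free sketch assumes orthogonal factors; for oblique factors, the structure matrix replaces Λ. It does not implement the Bartlett or Anderson-Rubin alternatives. The data, correlations, and loadings are hypothetical.

```python
def solve(matrix, rhs):
    """Solve matrix @ X = rhs with Gauss-Jordan elimination and partial pivoting."""
    n = len(matrix)
    aug = [list(matrix[i]) + list(rhs[i]) for i in range(n)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("correlation matrix is singular or nearly singular")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        lead = aug[col][col]
        aug[col] = [x / lead for x in aug[col]]
        for r in range(n):
            if r != col:
                scale = aug[r][col]
                aug[r] = [x - scale * y for x, y in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]


def regression_factor_scores(z_data, R, loadings):
    """Regression-method scores F = Z R^-1 Lambda for standardized data Z."""
    weights = solve(R, loadings)  # R^-1 Lambda: one column of score weights per factor
    n_factors = len(loadings[0])
    scores = [
        [sum(z[i] * weights[i][k] for i in range(len(z))) for k in range(n_factors)]
        for z in z_data
    ]
    return scores, weights


# Hypothetical one-factor example with two standardized items.
R = [[1.0, 0.48], [0.48, 1.0]]
loadings = [[0.8], [0.6]]
z_data = [[1.0, 0.5], [-0.5, -1.0], [0.0, 0.2]]
scores, weights = regression_factor_scores(z_data, R, loadings)
print("score weights:", [round(w[0], 4) for w in weights])
print("factor scores:", [round(s[0], 3) for s in scores])
```

Solving R W = Λ avoids forming R⁻¹ explicitly. The singular-matrix error is the same symptom that extreme multicollinearity produces.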

Assumptions and limitations

Sample size considerations

- Larger sample sizes produce more stable and reliable factor solutions

- Rule of thumb: minimum 300 cases or 10 cases per variable

- Inadequate sample size can lead to overfitting or failure to detect weak factors

- Conduct power analysis to determine appropriate sample size for factor analysis

Multicollinearity issues

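- Very high correlations between variables (for example, above .90) or exact linear dependencies make the correlation matrix ill-conditioned or singular, which can produce unstable factor solutions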

- Check for multicollinearity using correlation matrix or variance inflation factors

- Address multicollinearity by removing redundant variables or combining highly correlated items

- Consider theoretical implications of removing variables in communication research
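Before extraction, a quick screen of the correlation matrix can reveal near-redundant pairs. The sketch flags pairs with an absolute correlation above 0.9, which is an illustrative cutoff. The variable names and correlations are hypothetical. Variance inflation factors (the diagonal of the inverse correlation matrix) give a complementary check that is not shown here.

```python
import itertools


def flag_redundant_pairs(R, names, threshold=0.9):
    """Return variable pairs whose absolute correlation exceeds `threshold`."""
    flagged = []
    for i, j in itertools.combinations(range(len(names)), 2):
        if abs(R[i][j]) > threshold:
            flagged.append((names[i], names[j], R[i][j]))
    return flagged


# Hypothetical correlations among four media-use items.
names = ["tv_hours", "tv_minutes", "news_trust", "social_use"]
R = [
    [1.00, 0.97, 0.20, 0.15],
    [0.97, 1.00, 0.22, 0.14],
    [0.20, 0.22, 1.00, 0.35],
    [0.15, 0.14, 0.35, 1.00],
]
for a, b, r in flag_redundant_pairs(R, names):
    print(f"{a} and {b} correlate at r = {r:.2f}; consider dropping or combining one")
```

Whether to drop or combine a flagged pair remains a theoretical decision, as the last point above notes.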

Factor analysis in communication research

Scale development applications

- Creates and validates measurement instruments for communication constructs

- Identifies underlying dimensions in multi-item scales (media literacy, interpersonal communication competence)

- Refines existing scales by removing poorly performing items or identifying subscales

- Ensures construct validity and reliability of measures used in communication studies

Media effects studies

- Uncovers latent factors in media exposure or media use patterns

- Identifies underlying dimensions of audience engagement or media gratifications

- Explores factor structure of perceived message effectiveness in health communication campaigns

- Examines factor structure of attitudes towards different media platforms or content types

Software for factor analysis

SPSS vs R vs SAS

- SPSS offers a user-friendly interface and comprehensive exploratory factor analysis procedures; confirmatory models require the separate AMOS module

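- R provides flexibility and advanced techniques through packages like psych (exploratory analysis) and lavaan (confirmatory factor analysis and structural equation modeling)

- SAS offers extensive factor analysis capabilities with many customization options, including PROC FACTOR for exploratory and PROC CALIS for confirmatory models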

- Choice depends on researcher's statistical expertise and specific analysis requirements

Reporting factor analysis results

Tables and figures

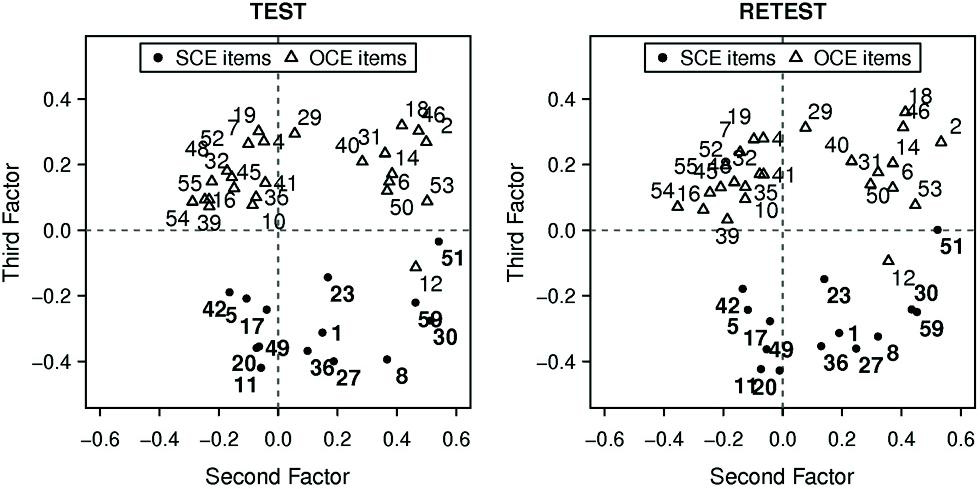

- Present factor loadings in a rotated factor matrix table

- Include scree plot to justify number of factors extracted

- Report communalities and variance explained for each factor

- Provide path diagrams for confirmatory factor analysis results

APA style guidelines

- Report the extraction method, rotation technique, and criteria used to decide how many factors to retain

- Include sample size, number of variables, and factors extracted

- Present factor loadings, communalities, and variance explained

- Describe factor interpretation and implications for communication theory

Advanced factor analysis techniques

Structural equation modeling

- Combines factor analysis with path analysis to test complex theoretical models

- Allows simultaneous estimation of measurement and structural relationships

- Assesses model fit using indices like CFI, RMSEA, and SRMR

- Enables testing of mediation and moderation effects in communication processes
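Two of the fit indices named above can be computed directly from chi-square statistics. The sketch uses these common formulas, where B is the baseline (independence) model:

- RMSEA = sqrt(max(χ² - df, 0) / (df(N - 1)))
- CFI = 1 - max(χ²_M - df_M, 0) / max(χ²_B - df_B, χ²_M - df_M, 0)

Some programs use N rather than N - 1 in RMSEA. SRMR needs the residual correlation matrix and is omitted. All chi-square values are hypothetical.

```python
import math


def rmsea(chi2, df, n):
    """Root mean square error of approximation, using the N - 1 convention."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


def cfi(chi2_model, df_model, chi2_baseline, df_baseline):
    """Comparative fit index relative to the independence (baseline) model."""
    d_model = max(chi2_model - df_model, 0.0)
    d_base = max(chi2_baseline - df_baseline, d_model)
    return 1.0 if d_base == 0 else 1.0 - d_model / d_base


# Hypothetical chi-square results from a CFA with N = 301 respondents.
chi2_m, df_m = 85.0, 41
chi2_b, df_b = 900.0, 55
print(f"RMSEA = {rmsea(chi2_m, df_m, 301):.3f}")
print(f"CFI   = {cfi(chi2_m, df_m, chi2_b, df_b):.3f}")
```

The `max(..., 0)` terms keep both indices in range when a model's chi-square falls below its degrees of freedom. In that case RMSEA is 0 and CFI is 1.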

Multidimensional scaling

- Visualizes similarities or dissimilarities between objects in a low-dimensional space

- Complements factor analysis by providing spatial representation of construct relationships

- Useful for exploring perceptions of communication concepts or media content

- Helps identify underlying dimensions in complex communication phenomena